A 5% MQL-to-SQL conversion rate (roughly 1 qualified lead per 20 generated) sits below industry averages but is not unusual. Demand Gen Report’s B2B Benchmark data puts average MQL-to-SQL rates between 13% and 27% depending on sector, with SaaS and professional services at the higher end and broad-market demand gen programs often falling below 10%. The gap between those averages and a 5% rate is almost always a structural problem: lead capture optimized for volume rather than fit, combined with nurturing that treats a contact with a single first-touch content download identically to one who has visited a pricing page three times.

Fixing a 5% rate is not primarily a messaging problem or a sales problem. It is a signal problem. The lead generation system is collecting noise (contacts who will never buy) and routing them to sales as if intent were uniform. Raising lead quality requires changing what signals trigger a handoff, not just adding qualification questions to a form.

A 2023 study by Forrester found that only 0.75% of leads generated by B2B marketing teams ever close as revenue. The MQL-to-SQL conversion problem is well-documented, but the root cause is routinely misdiagnosed. Most teams treat a low MQL-to-SQL rate as evidence of bad targeting or weak copy. A few rounds of persona refinement later, volume has dropped but quality has not materially improved, because the underlying system still rewards completion of a form over demonstration of genuine purchase intent.

The 1-in-20 problem matters more than ever because sales capacity is finite and expensive. At an average fully-loaded BDR cost of $80,000-$120,000 annually, a team spending 80% of its outreach cycles on unqualified leads is burning $64,000-$96,000 per rep per year on contacts that will never close. That math gets worse as deal cycles lengthen and CAC pressure increases.

What has changed recently is the availability of behavioral intent data at the individual visitor level, which makes it possible to distinguish a genuine buyer from a researcher or competitor before a form is ever submitted. The gap between teams using this data and those still relying on form-fill and demographic scoring alone is widening fast.

This article diagnoses the structural causes of a low MQL-to-SQL rate, maps the four levers that actually move lead quality, and shows how intent-based qualification changes the economics of the entire system.

The 5% signal rate: what the benchmarks actually say

The framing of “1 in 20” disguises important variation. Across B2B sectors, MQL-to-SQL rates range from roughly 8% at the low end (broad-market SaaS, high-volume inbound) to 30%+ in tight-ICP outbound programs. The figure most relevant to your situation depends on three variables: lead source, ACV, and how strictly you define an MQL.

By lead source, the variance is substantial. Organic search leads convert at roughly 14.6% MQL-to-SQL on average (Search Engine Journal, 2022 B2B benchmarks). Content syndication (the category responsible for most cheap lead gen programs) routinely delivers under 5%, with some programs reporting sub-2% SQL conversion. Paid social sits around 6-9% for most B2B categories. Webinar registrants convert at 10-15% when followed up within 24 hours, dropping to under 5% beyond 72 hours.

ACV creates a floor effect. Below $10,000 ACV, teams often collapse MQL and SQL distinctions entirely because the sales motion is low-touch and the qualification overhead exceeds the deal value. Above $50,000 ACV, a 5% MQL-to-SQL rate is a serious problem: at that price point, sales involvement is expensive enough that each unqualified lead represents real cost. The threshold where 5% becomes alarming rather than merely low is roughly $20,000 ACV with a human-touch sales motion.

The definition of MQL is frequently the problem itself. If your MQL definition is “any contact who downloaded a piece of content and matches one firmographic criterion,” a 5% SQL rate is the expected output. That definition was never measuring purchase intent; it was measuring content consumption. Lead qualification frameworks that anchor MQL status to demonstrated buying-stage behavior consistently show SQL rates two to three times higher than those anchored to demographic criteria alone.

What counts as a good conversion rate varies substantially by industry. The honest benchmark question is not “is 5% normal?” but “given your lead source mix and ACV, what rate should you expect, and what is the cost per SQL that results?”
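The expected-rate question can be sketched in a few lines of Python. This is a rough illustration, not a benchmark model: the conversion rates are approximate midpoints of the ranges quoted above, and the monthly volumes, CPL values, and the `Source` class are hypothetical assumptions.

```python
from dataclasses import dataclass

@dataclass
class Source:
    name: str
    mqls: int        # MQLs per month (hypothetical)
    sql_rate: float  # MQL-to-SQL rate (rough midpoint of the quoted ranges)
    cpl: float       # cost per lead in dollars (hypothetical)

def blended_sql_rate(sources):
    """Volume-weighted MQL-to-SQL rate across all lead sources."""
    total_mqls = sum(s.mqls for s in sources)
    total_sqls = sum(s.mqls * s.sql_rate for s in sources)
    return total_sqls / total_mqls

def cost_per_sql(source):
    """Each SQL costs the CPL divided by the share of leads that qualify."""
    return source.cpl / source.sql_rate

mix = [
    Source("organic search", 40, 0.146, 120.0),
    Source("content syndication", 120, 0.03, 40.0),
    Source("paid social", 40, 0.075, 90.0),
]

for s in mix:
    print(f"{s.name}: CPL ${s.cpl:.0f}, cost per SQL ${cost_per_sql(s):.0f}")
print(f"blended MQL-to-SQL rate: {blended_sql_rate(mix):.1%}")
```

Under these assumed inputs, the blended rate lands near 6%, and the source with the lowest CPL (content syndication) turns out to have the highest cost per SQL.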

Why the 5% rate happens: the signal dilution problem

The structural cause of poor MQL-to-SQL conversion is what can be called The Signal Dilution Problem: a lead gen system designed to maximize top-of-funnel volume inevitably pulls in a majority of contacts who are not in a buying cycle, then routes them identically to those who are.

Four mechanisms drive dilution:

- Form-fill as a proxy for intent. A contact filling out a gated content form demonstrates only that they found the content title interesting enough to exchange an email address for it. That is a weak signal, equivalent to someone picking up a brochure at a trade show booth. Yet most MQL scoring systems treat a form fill as a meaningful buying signal, especially when it is the only behavioral data point available. The result: researchers, students, competitors, and early-awareness contacts flood the MQL queue alongside genuine buyers. Leads who just want free information are structurally indistinguishable from real buyers inside a form-fill-only system.

- Volume incentives in demand gen programs. MQL volume is a common demand gen KPI, which creates a structural incentive to lower qualification thresholds. When paid lead generation programs are evaluated on CPL rather than SQL cost, the cheapest lead sources (typically content syndication and broad-match paid social) dominate spend. These channels produce the lowest MQL-to-SQL rates but the lowest reported CPL, making performance look better than it is until sales starts measuring conversion. Lowering CPA on paid channels requires improving the quality of what converts, not just what clicks.

- Nurturing that ignores buying stage. Most marketing automation sequences treat all leads in the same acquisition cohort identically: leads from the same month get the same email sequence regardless of how they actually behave. A contact who has visited a pricing page twice and read a case study is demonstrably further in their buying cycle than someone who downloaded a top-of-funnel checklist. Treating them identically delays or completely misses handoff timing for the high-intent contact, while routing the low-intent contact to sales too early. Effective lead nurturing strategies segment by behavioral stage, not by acquisition date.

- Firmographic scoring without behavioral weighting. Lead scoring models that weight company size, industry, and title heavily (while underweighting on-site behavior) systematically misclassify intent. A VP of Marketing at a 500-person SaaS company who visited your pricing page three times is more likely to buy than a CMO at a 1,000-person company who opened one email. Firmographic fit is necessary but not sufficient. Without behavioral intent data layered on top of demographic fit, scoring models produce false positives at scale.
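One way to picture "necessary but not sufficient" is a score where firmographic fit works as a gate and behavior does the ranking. The sketch below is illustrative only: the event names, the weights, the 200-employee floor, and the `Lead` class are all hypothetical and would need tuning against real closed-won data.

```python
from dataclasses import dataclass

# Hypothetical weights per behavioral event; not taken from any real model.
BEHAVIOR_WEIGHTS = {
    "pricing_visit": 15,
    "case_study_read": 8,
    "content_download": 3,
    "email_open": 1,
}

@dataclass
class Lead:
    title: str
    employees: int
    events: dict  # event name -> number of occurrences

def fits_icp(lead, min_employees=200):
    """Firmographic fit is a yes/no gate, not a source of points."""
    return lead.employees >= min_employees

def intent_score(lead):
    """Rank leads that pass the fit gate purely on observed behavior."""
    if not fits_icp(lead):
        return 0
    return sum(BEHAVIOR_WEIGHTS.get(name, 0) * count
               for name, count in lead.events.items())

vp = Lead("VP of Marketing", 500, {"pricing_visit": 3})
cmo = Lead("CMO", 1000, {"email_open": 1})
print(intent_score(vp), intent_score(cmo))
```

With these assumed weights, the VP with three pricing visits outranks the CMO with a single email open, even though the CMO has the more senior title and the larger company.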

The real cost: the sales math you’re not running

A 5% MQL-to-SQL rate in a system generating 200 MQLs per month produces 10 SQLs. If your average BDR or AE can meaningfully engage 50-60 contacts per week, the team is spending 190 outreach cycles per month (more than three full working weeks of one rep’s capacity) on contacts who will not convert to pipeline.

At $100,000 fully-loaded BDR cost, that’s $73,000 per year in labor chasing non-SQLs from this single program. Add the cost of marketing automation licenses, content production, and demand gen spend that generated those 200 MQLs, and the true cost per SQL becomes a very different number than CPL-based reporting suggests.
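The gap between CPL-based reporting and true cost per SQL is easy to make concrete. In the sketch below, the MQL volume, conversion rate, and wasted-labor figure come from the paragraphs above; the $50 CPL and the function name are hypothetical, and licenses and content production are left out for simplicity.

```python
MQLS_PER_MONTH = 200            # from the text
MQL_TO_SQL_RATE = 0.05          # from the text
WASTED_LABOR_PER_YEAR = 73_000  # from the text, in dollars
CPL = 50.0                      # hypothetical cost per MQL, in dollars

def annual_sql_economics(mqls_per_month, sql_rate, cpl, wasted_labor):
    """Compare cost per SQL on lead spend alone vs. with wasted labor added."""
    sqls_per_month = round(mqls_per_month * sql_rate)
    annual_sqls = sqls_per_month * 12
    annual_lead_spend = mqls_per_month * cpl * 12
    return {
        "sqls_per_month": sqls_per_month,
        "non_sqls_per_month": mqls_per_month - sqls_per_month,
        "cost_per_sql_leads_only": annual_lead_spend / annual_sqls,
        "cost_per_sql_with_labor": (annual_lead_spend + wasted_labor) / annual_sqls,
    }

result = annual_sql_economics(
    MQLS_PER_MONTH, MQL_TO_SQL_RATE, CPL, WASTED_LABOR_PER_YEAR
)
for key, value in result.items():
    print(f"{key}: {value:,.2f}")
```

With these inputs, a $50 CPL becomes $1,000 per SQL on lead spend alone and roughly $1,608 per SQL once the labor spent on non-SQLs is counted.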

The more damaging effect is opportunity cost. BDRs working unqualified leads are not working the high-intent prospects who visited your pricing page last week but never filled out a form. That behavioral signal (present in your analytics data, but typically invisible to your qualification system) represents latent demand that your current process cannot capture.

This is the actual failure mode of a 5% signal rate: it is not just that you are wasting money on bad leads. It is that the system incentivizes ignoring good ones.

[Figure: annual waste per BDR and capacity freed per month, comparing a volume-driven mix (content syndication + broad paid) with an intent-driven mix (intent signals + stage gating)]

The four levers that move lead quality

Improving MQL-to-SQL rate requires adjusting one or more of four variables. The fastest gains almost always come from changing how intent is defined and measured, not from restricting the top of funnel.

- Lever 1: Redefine MQL criteria around behavioral signals, not demographics. Replace or heavily supplement demographic scoring with on-site behavioral signals: pages visited, time on site, pricing page engagement, return visit frequency, and content consumed by category. A contact who has visited four pages including pricing in two sessions scores higher than one who filled out a form after visiting a single blog post, regardless of title or company size. Predictive lead scoring models that weight recency, frequency, and topic specificity of engagement consistently outperform firmographic-only models.

- Lever 2: Separate nurture tracks by buying stage, not by persona. Persona-based nurturing solves the wrong problem. A VP of Marketing at an e-commerce company in awareness stage and one in decision stage do not need the same content; they need fundamentally different interactions. Rebuild nurture tracks around stage signals: awareness contacts get education, consideration contacts get comparison content and proof, decision contacts get friction removal (pricing clarity, ROI tools, easy demo booking). The decision of what to send, and when, should be driven by behavior, not by which persona segment the lead falls into.

- Lever 3: Introduce a qualification gate before SQL handoff. For deals above $15,000 ACV, a brief qualification interaction (either a structured form sequence or a conversational flow) between MQL and SQL stages improves SQL quality dramatically without reducing close rates. The gate should ask only what sales cannot determine from behavioral data: budget existence, decision timeline, and whether a specific problem is active. Balancing qualification depth against form completion rates is a genuine trade-off. The goal is to filter misfit contacts, not to create friction that disqualifies good ones. The right lead qualification tools reduce this friction while maintaining signal quality.

- Lever 4: Make intent visible before form submission. The structural advantage available to teams willing to invest in it: behavioral intent data captures genuine buying signals before any form fill occurs. A visitor who reads three solution pages, visits pricing twice, and returns three days later is demonstrating purchase intent through their navigation pattern alone. If your current process only registers them when they fill out a contact form, you are systematically late to every high-intent conversation. Intent data used correctly captures this pre-form signal and routes it to the right follow-up, either surfacing them to sales or triggering a personalized on-site experience designed to accelerate the conversion.
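Levers 2 and 3 can be combined into a small routing sketch: classify the contact by stage, send non-decision contacts to the matching nurture track, and place a qualification gate in front of sales for higher-ACV deals. The thresholds in `classify_stage` are hypothetical rules chosen for illustration, not a validated model; the $15,000 gate comes from Lever 3.

```python
from enum import Enum

class Stage(Enum):
    AWARENESS = "awareness"
    CONSIDERATION = "consideration"
    DECISION = "decision"

# Track per stage, following the description in Lever 2.
TRACKS = {
    Stage.AWARENESS: "education",
    Stage.CONSIDERATION: "comparison content and proof",
    Stage.DECISION: "friction removal",
}

def classify_stage(pricing_visits, sessions, solution_pages):
    """Hypothetical rule-based stage detection from on-site behavior."""
    if pricing_visits >= 2 and sessions >= 2:
        return Stage.DECISION
    if solution_pages >= 2 or pricing_visits >= 1:
        return Stage.CONSIDERATION
    return Stage.AWARENESS

def next_step(stage, acv, gate_passed=False, gate_acv=15_000):
    """Decide what happens to a contact given stage and deal size."""
    if stage is not Stage.DECISION:
        return f"nurture: {TRACKS[stage]}"
    if acv > gate_acv and not gate_passed:
        return "qualification gate: budget, timeline, active problem"
    return "hand off to sales"

stage = classify_stage(pricing_visits=2, sessions=2, solution_pages=3)
print(stage.value, "->", next_step(stage, acv=30_000))
```

Driving the routing from behavior rather than persona means the same VP of Marketing moves between tracks as their activity changes, which is the point of Lever 2.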

Where intent data changes the math

The conventional lead qualification model is reactive: a visitor acts (fills a form), marketing scores the action, sales responds. The problem is that the signal triggering the handoff (the form fill) captures only a fraction of buying intent, typically the most price-sensitive or research-heavy segment of the market. Buyers who know what they want often do not fill out forms; they compare, return, and eventually buy or do not buy based on whether the product earns their confidence on the website itself.

Intent data platforms close this gap by making navigation behavior visible as a scored signal, not just as a historical record in a session log. The distinction matters mechanically: a score attached to a named contact in your CRM is actionable. A session trail in GA4 is not, unless someone manually connects it to a contact record. The power of intent data in practice lies in this translation from passive log to active signal.

For B2B teams specifically, identifying which companies are visiting your website anonymously before any form fill changes the economics of outreach. A company-level visit signal from a target account (particularly when combined with page-level data showing pricing or solution page engagement) gives sales a warm outreach trigger that predates any inbound conversion. Used for ABM, intent data partially dissolves the MQL-to-SQL problem: you are reaching out to accounts demonstrating active interest rather than cold-sequencing your ICP list.

The practical constraint: intent data is noisy at low traffic volumes. Company-level detection is meaningful when accounts visit multiple pages across multiple sessions. Single-page, single-session visits from non-target accounts generate false positives. The signal becomes reliable when you filter on visit depth, page specificity, and return behavior, not on any single session in isolation.
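The three filters named above (visit depth, page specificity, return behavior) can be expressed as a simple predicate. This is a minimal sketch under stated assumptions: the page paths, the minimum thresholds, and the `AccountActivity` class are hypothetical and do not describe any specific vendor's data model.

```python
from dataclasses import dataclass

# Hypothetical set of pages treated as high-intent.
HIGH_INTENT_PAGES = {"/pricing", "/integrations", "/solutions"}

@dataclass
class AccountActivity:
    company: str
    sessions: int
    pages: list  # paths viewed across all sessions

def is_reliable_signal(activity, min_sessions=2, min_pages=3):
    """Require depth, return behavior, and at least one high-intent page."""
    deep_enough = len(activity.pages) >= min_pages
    returned = activity.sessions >= min_sessions
    specific = any(page in HIGH_INTENT_PAGES for page in activity.pages)
    return deep_enough and returned and specific

engaged = AccountActivity("Acme", 3, ["/pricing", "/integrations", "/blog/post"])
drive_by = AccountActivity("Globex", 1, ["/blog/post"])
print(is_reliable_signal(engaged), is_reliable_signal(drive_by))
```

Requiring all three conditions together is a deliberately conservative choice: it trades some recall for fewer false positives, which matters most at low traffic volumes.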

Common mistakes when trying to fix lead quality

- Raising the MQL threshold without changing the lead source mix. Tightening your MQL definition while keeping the same content syndication spend reduces volume but does not improve the underlying distribution of intent signals in your lead pool. The problem is upstream. Address lead source quality before adjusting scoring thresholds.

- Adding qualification questions without removing demographic scoring weight. Layering a BANT qualification form on top of a scoring model that already passes firmographic matches creates a two-gate system in which each gate on its own is weak enough to pass the lead. The result is marginally better SQL quality but no fundamental change in how intent is measured. One gate, anchored to behavioral and stated intent, beats two weak gates.

- Blaming sales for low MQL-to-SQL rates. When sales converts fewer than 20% of MQLs to SQLs, marketing’s instinct is often to question whether sales is following up correctly. This is sometimes valid: speed-to-lead matters, and 30-minute response times convert at 21x the rate of 24-hour responses (MIT/Velocify, 2011). But it is rarely the primary driver. A 5% SQL rate means 95% of leads were not qualified buyers when they entered the pipeline. Sales effectiveness explains variance at the margin; it does not explain a structural 95% discard rate.

- Measuring lead quality by MQL volume, not SQL cost. If your demand gen program is reporting CPL and not cost-per-SQL, the quality problem is invisible in the metrics that drive budget decisions. Requiring SQL cost visibility is the single most effective way to force upstream accountability for lead quality. Whether CRO investment is worth it depends entirely on whether you’re measuring outcomes that reflect revenue, not just volume.

- Using last-touch attribution to evaluate lead gen channels. Content syndication often appears efficient on last-touch attribution because it generates the form fill. Behavioral intent data (which shows what the visitor did before and after the form fill) reveals whether that content engagement was the beginning of a buying journey or a dead end. First-touch and multi-touch attribution models surface lead source quality more accurately.
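The attribution point in the last item can be illustrated with a toy comparison of first-touch, last-touch, and linear multi-touch credit. The journeys below are invented for illustration, and the `attribute` helper is a simplified sketch rather than a production attribution model.

```python
from collections import defaultdict

def attribute(journeys, model):
    """Assign one unit of credit per journey under the chosen model."""
    credit = defaultdict(float)
    for touches in journeys:
        if not touches:
            continue
        if model == "first":
            credit[touches[0]] += 1.0
        elif model == "last":
            credit[touches[-1]] += 1.0
        elif model == "linear":
            for channel in touches:
                credit[channel] += 1.0 / len(touches)
        else:
            raise ValueError(f"unknown model: {model}")
    return dict(credit)

# Hypothetical touch sequences for three leads, in chronological order.
journeys = [
    ["organic search", "webinar", "content syndication"],
    ["organic search", "content syndication"],
    ["paid social", "content syndication"],
]

for model in ("last", "first", "linear"):
    print(model, attribute(journeys, model))
```

In this toy data, last-touch gives content syndication all three credits because it captured the form fill, while first-touch gives it none. Linear credit sits in between and shows how much of the journey happened elsewhere.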

How Pathmonk improves lead quality at the conversion layer

Most approaches to the signal dilution problem intervene at the scoring layer, adjusting how leads are classified after they have already entered the system. Pathmonk addresses the problem one step earlier: at the point in the buyer journey where intent signals are generated, before a form is completed.

The mechanism starts with buying stage classification. Pathmonk’s AI reads behavioral signals (page sequence, scroll depth, time-on-page, session recency, return visit patterns) and classifies each visitor into one of three buying stages: awareness, consideration, or decision. This classification updates in real time as the visitor navigates. The stage detection logic means that a decision-stage visitor landing on a pricing page triggers a different microexperience than an awareness-stage visitor landing on the same page.

This matters for lead quality because the content shown is calibrated to buying readiness. A decision-stage visitor receives a direct offer to book a demo or start a trial, the path of least resistance to conversion. An awareness-stage visitor receives educational content designed to advance them toward consideration, rather than a premature conversion request they will ignore or abandon. The result: the leads who do convert via a demo request are disproportionately decision-stage buyers, not early-stage researchers who clicked a CTA before they were ready.

Qualification flows take this further. For B2B teams with complex products or segmented offerings, Pathmonk’s qualification flow feature creates an interactive on-site sequence that routes visitors to the most relevant outcome (a different booking page, a different content offer, or a direct sales contact) based on their responses. The flow serves a dual purpose: it reduces choice overload for the visitor and it pre-qualifies the lead before it ever reaches a CRM. A lead generated through a qualification flow arrives with routing context attached (company type, use case, urgency) that a plain form fill never provides.

For B2B specifically, the company detection capability changes the pre-form pipeline. Pathmonk’s B2B intent leads feature identifies which companies are visiting the website, at which buying stage, and on which pages, without requiring a form fill. A target account visiting your pricing and integrations pages three times in a week generates a visible intent signal that sales can act on immediately, rather than waiting for an inbound form that may never come. This is not a cookie-based tracking solution; it uses cookieless fingerprinting, which means it remains functional in privacy-first environments and does not require consent management. How website personalization drives higher sales is ultimately a function of how accurately the system reads and responds to these signals.

The outcome of these combined mechanisms: the leads that surface through Pathmonk’s conversion layer arrive with more behavioral context, at higher buying-stage readiness, than leads captured via standard form-fill flows. The SQL rate improves not because the qualification threshold was raised, but because the conversion system itself is designed to surface intent before a form is ever presented.

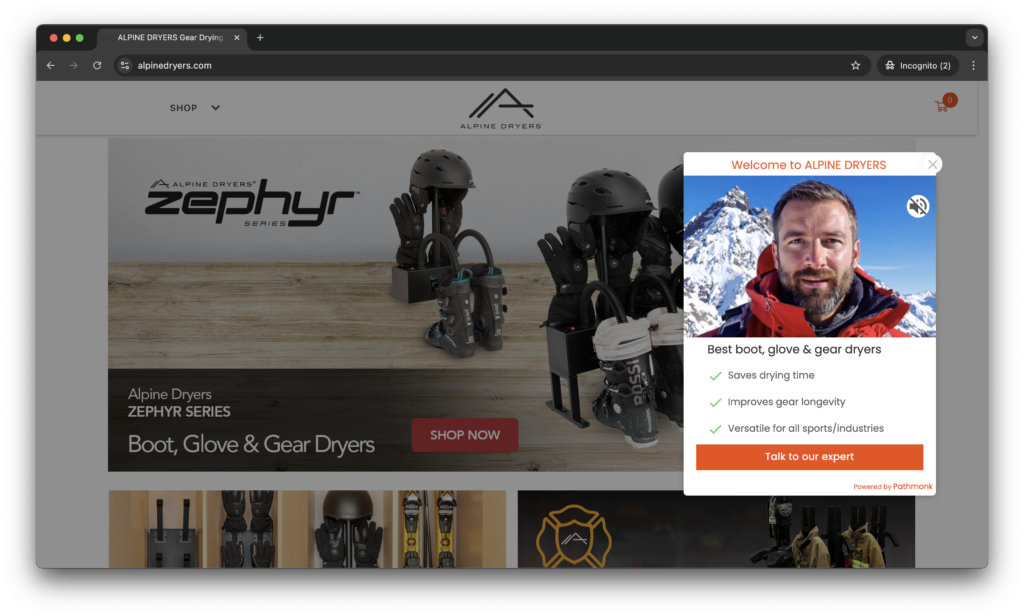

How Alpine Dryers increased high-ticket consultation leads by 113% by separating buyer intent at the website layer

Alpine Dryers sells gear dryers for ski resorts, commercial facilities, and individual winter sports buyers. The product is the same across customer types; the deal size is not. An individual buyer purchasing two units is a straightforward e-commerce transaction. A ski resort buying in bulk for a lodge renovation is a five-figure consultation sale that requires expert guidance before any purchase decision.

The problem was textbook signal dilution: both audiences were arriving at the same website and receiving an identical journey. High-value B2B and bulk buyers were landing on product pages, reviewing technical specifications, and leaving without ever being directed to the “Talk to an expert” path that their buying situation required. The website was structured for the lower-value audience by default, and the higher-value segment was left to self-identify and self-navigate. Most did not. The consultation form was generating a fraction of the submissions it should have, not because the traffic was wrong, but because the conversion pathway for high-intent buyers was invisible to them.

Pathmonk was implemented as a behavioral detection layer on top of the existing site, with no redesign required. The system read signals that indicated commercial rather than individual buying intent: repeated product page visits, extended time on technical specification sections, and navigation patterns consistent with bulk evaluation rather than single-unit browsing. When those patterns were detected, Pathmonk surfaced microexperiences designed specifically for that segment: educational content on commercial configurations, testimonials from resorts and facilities, and direct prompts to speak with an expert. Individual buyers continued through the standard e-commerce checkout path untouched.

The results arrived in under two months, on existing traffic and with no additional marketing spend. Conversion rate more than doubled, from 0.14% to 0.29%, representing a +113% uplift in high-ticket consultation requests. The volume of visitors did not change. What changed was the system’s ability to identify which visitors were worth a sales conversation and route them accordingly before they stalled on a product page and left.

FAQs on how to fix low-quality lead generation

What is a realistic MQL-to-SQL rate for B2B SaaS?

For inbound-heavy SaaS programs, a functional benchmark is 15-25% MQL-to-SQL. Programs that rely heavily on content syndication or low-intent paid channels often see 5-10%. Outbound-assisted programs with tight ICP definitions can reach 30-40%. If your rate is below 10% and your ACV is above $15,000, the lead gen system needs structural adjustment, not optimization.

Is the MQL-to-SQL rate the right metric to track lead quality?

It is one of two necessary metrics. MQL-to-SQL rate tells you how well marketing is qualifying before handoff. SQL-to-close rate tells you how well qualified those SQLs actually were. A program can show a high MQL-to-SQL rate by lowering the SQL threshold; the SQL-to-close rate catches this. Both need to be tracked together, ideally with cost-per-closed-deal as the unifying metric.
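A short sketch shows why the metrics need to be read together. The two scenarios below use invented numbers, and `funnel_metrics` is a hypothetical helper: the second scenario simply loosens the SQL threshold without changing how many deals close.

```python
def funnel_metrics(mqls, sqls, closed_won, total_spend):
    """Return the three paired funnel metrics for one reporting period."""
    if min(mqls, sqls, closed_won) <= 0:
        raise ValueError("mqls, sqls, and closed_won must be positive")
    return {
        "mql_to_sql": sqls / mqls,
        "sql_to_close": closed_won / sqls,
        "cost_per_closed_deal": total_spend / closed_won,
    }

# Hypothetical period: same MQLs, spend, and closed deals in both cases.
strict = funnel_metrics(mqls=1000, sqls=100, closed_won=25, total_spend=200_000)
loose = funnel_metrics(mqls=1000, sqls=300, closed_won=25, total_spend=200_000)

for label, metrics in (("strict SQL bar", strict), ("loose SQL bar", loose)):
    print(label, {k: round(v, 3) for k, v in metrics.items()})
```

Loosening the SQL bar triples the MQL-to-SQL rate in this example (10% to 30%), but SQL-to-close falls from 25% to about 8% and cost per closed deal stays at $8,000, which is exactly the gaming pattern the paired metrics are meant to expose.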

Can you improve lead quality without reducing lead volume?

Yes, but the mechanism matters. Adding behavioral intent signals to scoring (on-site page visits, engagement depth, return frequency) improves quality without volume trade-offs because it re-ranks existing leads rather than restricting new ones. Adding hard demographic gates (company size floors, title-only targeting) reduces volume first and may or may not improve quality depending on whether the volume being removed was actually low-intent.

Why do content downloads produce such poor SQL rates?

Content downloads signal information interest, not buying intent. Someone downloading a guide on “how to choose an enterprise CMS” is most likely in the awareness stage: they have a problem they are trying to understand, not a solution they are actively evaluating. Treating every download as an MQL conflates information consumption with purchase readiness. Content downloads are valuable for audience building and retargeting; they are weak MQL triggers on their own.

How does speed-to-lead affect MQL-to-SQL conversion?

Significantly. MIT research showed leads contacted within five minutes of form submission convert at 9x the rate of leads contacted after ten minutes. The effect is particularly strong for decision-stage leads who are actively evaluating; the window of engagement is short. Routing high-intent leads to immediate follow-up (versus batching them into next-day sequences) is one of the highest-return adjustments for SQL conversion rates.

Should you use a lead scoring model or a lead qualification form?

Both, at different stages. Scoring models are most effective for continuous prioritization: they rank your entire lead pool by likelihood to buy, which helps sales know where to focus. Qualification forms (or on-site qualification flows) are most effective as a final filter before SQL handoff; they capture stated intent and budget readiness that behavioral models cannot infer. Using only a scoring model risks promoting lookalike contacts who have not self-selected as buyers. Using only a form ignores the behavioral context that makes a form response meaningful.

What should you do if you do not know your ICP well enough to qualify leads accurately?

Start with behavioral signals rather than firmographic matching. Generating leads before your ICP is fully defined is possible when you use on-site behavior as a proxy: visitors who engage with product-specific pages, return multiple times, and reach pricing are self-selecting as potential buyers regardless of their firmographic profile. Use early sales conversations with these contacts to build ICP definition retroactively from observed buying patterns.

Does fixing lead quality require rebuilding the entire demand gen program?

No. The highest-leverage interventions are typically in the middle of the funnel, not at acquisition. Recalibrating MQL definition, adding behavioral signals to scoring, and introducing a qualification gate before SQL handoff can double SQL rates without touching paid media spend, content strategy, or channel mix. Start with what happens to leads after they enter the system before optimizing how they enter it.

What role does website personalization play in lead quality?

Website personalization affects lead quality by changing which visitors convert and at what buying stage. A generic website converts researchers and buyers at roughly the same rate, producing a mixed-intent lead pool. A website that presents different conversion experiences based on behavioral signals converts decision-stage visitors at a higher rate relative to early-stage visitors, improving the intent distribution of inbound leads without requiring any change to upstream demand gen. How AI-powered personalization works in practice depends on the system’s ability to detect and respond to stage in real time, not just segment by demographic.

Key takeaways

- A 5% MQL-to-SQL rate is below industry average (13-27% for B2B) and indicates a structural problem in how intent is defined and captured, not a sales execution problem.

- The Signal Dilution Problem (lead gen systems optimized for volume rather than fit) is the root cause. Form-fill volume metrics, CPL optimization, and demographic-only scoring all systematically produce low-intent lead pools.

- The real cost of a 5% rate is not just wasted marketing spend; it is the sales capacity burned on unqualified follow-up and the high-intent visitors who never convert because the system only sees form fills.

- Behavioral intent signals (page-level engagement, visit recency, return frequency, buying stage classification) consistently outperform demographic scoring alone when reweighted into MQL criteria.

- Raising the MQL threshold without changing lead sources does not fix the problem; it reduces volume without improving the underlying signal distribution.

- Qualification flows that route and pre-qualify leads before CRM entry produce higher-quality SQLs than form-only capture because they attach stated intent to behavioral context.

- For B2B teams, company-level website detection creates an outbound trigger from target accounts demonstrating active intent, pre-empting the wait for inbound form fills that may never come.

- The correct measurement framework pairs MQL-to-SQL rate with SQL-to-close rate and cost-per-closed-deal. Any single metric in isolation is gameable and misleading.