Desktop and mobile are not the same conversion environment. They differ in input modality, session context, page rendering, intent signals, and user behavior patterns. A site that converts at 4% on desktop and 0.9% on mobile is not underperforming on mobile by accident. It is reflecting a product, UX, and measurement architecture that was built for one environment and applied to another.

Most companies diagnose this as a design problem and fix button sizes. The actual gap is usually a combination of intent mismatch, friction stacking, form factor incompatibility, page speed variance, and tracking inconsistency. Solving it requires separating the diagnostic layers: behavioral, technical, and measurement. Each has a different fix, and conflating them produces patch solutions that do not move the needle.

The desktop-mobile conversion gap is not new; it became hard to ignore once mobile traffic crossed 50% of web visits. What has changed is the cost of ignoring it. Mobile now represents 55-65% of traffic for most B2C properties and 30-50% for B2B, depending on industry and channel mix. At that traffic volume, the economic consequence of a 3x or larger conversion gap is significant enough to dwarf most CRO initiatives targeting desktop.

What most marketers misunderstand is that mobile is not a smaller version of the desktop journey. It is a structurally different channel with different user intent, different cognitive load, different form factor constraints, and different technical behavior. Applying desktop CRO logic to mobile, which is what most A/B testing programs implicitly do, produces marginal gains because it optimizes the wrong variables.

The other common misunderstanding is measurement. Many analytics setups track desktop and mobile in the same funnel view. This masks the gap, misattributes conversion events, and produces blended metrics that look acceptable while hiding a significant revenue leak. Before you can fix the conversion gap, you need to see it accurately.

This article covers:

- How to accurately diagnose where in the funnel mobile drops off versus desktop

- The behavioral, technical, and UX factors that drive the gap

- How to build a mobile-specific conversion architecture

- Where tracking breaks on mobile and how to compensate

- How Pathmonk addresses the personalization and intent layer for mobile visitors

- A realistic case study with numbers

Why mobile conversion rates are structurally lower

Intent and session context differences

Desktop sessions typically occur in intentional, task-oriented contexts: a user sitting at a workstation, actively researching a purchase, comparing options, ready to fill out a form. Mobile sessions are fragmented. They happen on commutes, during breaks, in multi-tasking contexts. The same user visiting your site on mobile and desktop may be at completely different stages of their decision process.

This means mobile visitors as a cohort have lower purchase intent on average, not because mobile is a worse channel, but because the distribution of intent is different. High-intent mobile users do convert, but they represent a smaller share of the mobile audience. The aggregate conversion rate reflects that distribution, not just UX quality.

The implication: if you serve the same content, CTA, and offer to all mobile visitors, you are showing a purchase-ready message to a largely browse-mode audience. This is a message-to-intent mismatch, and it depresses conversion rates regardless of how well the page is designed. Understanding what visitors do when they aren’t ready to convert is a prerequisite for building a mobile funnel that actually works.

Friction stacking on mobile

Desktop UX friction and mobile UX friction are not equivalent. A three-field form on desktop takes roughly 15 seconds. The same form on mobile, with keyboard switches, autocorrect interference, and small tap targets, can take 60-90 seconds and often results in abandonment. Each additional form field has a higher friction cost on mobile than on desktop.

Common friction sources that are underweighted in mobile analysis:

- Form field count and type: Email fields trigger one keyboard, number fields another. Switching adds friction. Phone number fields with country selectors are particularly problematic.

- CTA placement: Above-fold CTAs on desktop become below-fold on mobile if the hero section is not adapted. Users who do not scroll miss the conversion trigger entirely.

- Modals and pop-ups: Exit-intent overlays that work on desktop do not translate to mobile, because there is no cursor leaving the viewport to detect. Scroll-triggered pop-ups often fire at the wrong position. Interstitials that cover the full screen on mobile can be penalized in Google Search rankings and are frequently abandoned by users.

- Page weight and load time: A 4-second load on desktop at 100 Mbps becomes an 8-12 second load on a 4G connection with variable signal. Google’s data shows that 53% of mobile sessions are abandoned if load exceeds 3 seconds.

- Checkout and payment flow: Multi-step checkout without mobile wallet options (Apple Pay, Google Pay) introduces card detail entry, which is a major abandonment point.

Technical rendering and tracking gaps

Mobile browsers handle JavaScript differently. Scroll depth tracking, click events, and session recording tools often miss events on mobile because of:

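- Touch event vs. click event discrepancies: Some tracking implementations listen only for mouse or click events and miss interactions handled through touchstart or touchend (swipes, or taps where a touch handler suppresses the synthesized click), leading to undercounting of mobile interactions.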

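- Cookie and storage restrictions: Safari’s Intelligent Tracking Prevention (ITP) caps first-party cookies set via JavaScript at 7 days, and at 24 hours when the visitor arrives through a link decorated with tracking parameters from a domain Safari classifies as a tracker. This breaks attribution windows and inflates mobile “new visitor” counts.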

- Session fragmentation: Mobile users frequently switch apps mid-session. This causes session timeouts in Google Analytics (default: 30 minutes), creating artificial multi-session journeys that break funnel analysis.

- Cross-device journeys: A user who researches on mobile and converts on desktop appears as two separate users in most analytics setups. Desktop gets the conversion credit. Mobile shows no conversion, even though it was a key touchpoint.

This last point is critical. A portion of what looks like “mobile non-conversion” is actually mobile-initiated, desktop-completed journeys. Without cross-device stitching, you are measuring the wrong thing. The B2C customer journey typically spans multiple touchpoints before purchase, and mobile is frequently one of the first.

Diagnostic framework: isolating the gap

Before running any optimization, you need to segment the problem accurately. The gap between desktop and mobile conversion rates is rarely caused by a single factor. It is usually a combination of intent, UX, technical, and measurement issues in different proportions depending on your product and audience. If you haven’t already run a structured CRO audit, that is the right starting point before attempting device-level fixes.

Step 1: Separate the funnel by device

Pull your funnel data segmented by device type. Do not use blended views. Measure:

- Landing page bounce rate: Desktop vs. mobile. A large gap here points to page speed, above-fold rendering, or message mismatch.

- Scroll depth: If mobile users are not reaching the CTA, that is a layout problem. If they are reaching it and not clicking, that is a friction or trust problem.

- Micro-conversion rates: Newsletter signups, free trial starts, calculator usage, video plays. These give you a gradient of where intent begins to drop.

- Step-by-step funnel dropoff: Identify the specific step where mobile diverges from desktop. Is it the landing page? The product page? The checkout? Each has a different fix.
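As a rough illustration of the step-by-step comparison, the sketch below computes pass-through rates per device and reports the transition where mobile trails desktop the most. The step names and visitor counts are invented for the example (chosen so that desktop converts at 4% and mobile at 0.9%); in practice they would come from a device-segmented analytics export.

```python
from typing import Dict, List, Tuple

# Hypothetical funnel: visitors reaching each step, in order, per device.
FUNNEL_STEPS = ["landing", "product", "cart", "checkout", "purchase"]
FUNNEL_COUNTS: Dict[str, List[int]] = {
    "desktop": [10_000, 6_000, 2_400, 1_200, 400],
    "mobile": [20_000, 11_000, 3_300, 900, 180],
}


def step_rates(counts: List[int]) -> List[float]:
    """Share of visitors at each step who reach the next step."""
    return [nxt / cur if cur else 0.0 for cur, nxt in zip(counts, counts[1:])]


def overall_rate(counts: List[int]) -> float:
    """End-to-end conversion rate: last step divided by first step."""
    return counts[-1] / counts[0]


def blended_rate(funnel: Dict[str, List[int]]) -> float:
    """Conversion rate when all devices are pooled into one funnel view."""
    entries = sum(counts[0] for counts in funnel.values())
    conversions = sum(counts[-1] for counts in funnel.values())
    return conversions / entries


def largest_divergence(funnel: Dict[str, List[int]]) -> Tuple[str, float]:
    """Transition where mobile trails desktop by the most, in rate points."""
    desktop = step_rates(funnel["desktop"])
    mobile = step_rates(funnel["mobile"])
    gaps = [d - m for d, m in zip(desktop, mobile)]
    worst = max(range(len(gaps)), key=gaps.__getitem__)
    return f"{FUNNEL_STEPS[worst]} -> {FUNNEL_STEPS[worst + 1]}", gaps[worst]


if __name__ == "__main__":
    for device, counts in FUNNEL_COUNTS.items():
        print(device, f"{overall_rate(counts):.2%}")
    print("blended", f"{blended_rate(FUNNEL_COUNTS):.2%}")
    print("largest gap at", largest_divergence(FUNNEL_COUNTS))
```

With these made-up numbers the pooled rate comes out at about 1.9%, which hides both the 4% desktop rate and the 0.9% mobile rate, and the largest divergence sits at the cart-to-checkout transition rather than on the landing page.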

Step 2: Segment by traffic source within mobile

Mobile traffic is not homogeneous. Paid social mobile traffic (TikTok, Instagram) behaves very differently from organic search mobile traffic or direct mobile traffic. Paid social audiences are often in passive scroll mode and have minimal purchase intent. Organic search visitors on mobile arrived with a query, which implies more intent.

Run the funnel analysis for each traffic source separately. You will likely find that the mobile conversion problem is concentrated in specific sources, not evenly distributed. This focuses your optimization effort significantly. If the gap is concentrated in paid traffic, the question of whether your conversion rate is low because of traffic quality or your website becomes the more important diagnostic.

Step 3: Quantify the cross-device attribution gap

If you have a CRM or email capture in the funnel, check what percentage of desktop converters have a prior mobile session within the same attribution window, matching sessions on a user-level identifier such as a login or captured email (cookies alone cannot link two devices). In GA4, use the path exploration report with device as a dimension. In Mixpanel or Amplitude, use user-level session data.

If 20-30% of your desktop conversions have a prior mobile touchpoint, you have a significant measurement problem layered on top of a UX problem, and solving UX without fixing measurement will still produce misleading results. Review how the GA4 attribution model handles cross-device credit before drawing conclusions from funnel reports.
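One way to sketch the Step 3 check is shown below: given sessions that already carry a stitched user identifier, it computes the share of desktop conversions preceded by a mobile session within an attribution window. The `Session` fields, the `user_key` identifier, and the sample data are all assumptions made for illustration; real data would come from your CRM or analytics export.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Session:
    user_key: str         # hypothetical stitched ID, e.g. hashed email or CRM ID
    device: str           # "desktop" or "mobile"
    started_at: datetime
    converted: bool


def prior_mobile_share(sessions: List[Session], window: timedelta) -> float:
    """Fraction of desktop conversions preceded by a mobile session from the
    same user_key within the attribution window."""
    conversions = [s for s in sessions if s.device == "desktop" and s.converted]
    if not conversions:
        return 0.0
    mobile = [s for s in sessions if s.device == "mobile"]
    assisted = 0
    for conv in conversions:
        earliest = conv.started_at - window
        if any(
            m.user_key == conv.user_key
            and earliest <= m.started_at < conv.started_at
            for m in mobile
        ):
            assisted += 1
    return assisted / len(conversions)


# Invented sample: only user "a" has a mobile visit inside a 7-day window.
SAMPLE_SESSIONS = [
    Session("a", "mobile", datetime(2024, 1, 1), False),
    Session("a", "desktop", datetime(2024, 1, 3), True),
    Session("b", "desktop", datetime(2024, 1, 5), True),
    Session("c", "mobile", datetime(2024, 1, 1), False),
    Session("c", "desktop", datetime(2024, 1, 20), True),   # outside window
    Session("d", "desktop", datetime(2024, 1, 9), True),
    Session("d", "mobile", datetime(2024, 1, 10), False),   # after conversion
]

if __name__ == "__main__":
    print(prior_mobile_share(SAMPLE_SESSIONS, timedelta(days=7)))
```

The result only covers users who can be identified on both devices, so it should be read as a lower bound on mobile-assisted conversions rather than an exact figure.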

Mobile-specific conversion architecture

Adapting the offer structure for mobile intent

The most impactful change most companies can make is not UX. It is offering the right micro-conversion to mobile visitors instead of pushing the primary conversion. A mobile visitor in browse mode will not complete a purchase or fill out a 12-field enterprise lead form. They might save a product, subscribe to an email, use a calculator, or click to call.

Designing mobile-specific conversion paths:

- Identify the highest-value micro-conversion your product supports: For e-commerce, this might be a wishlist add. For SaaS, a free tool or email capture. For B2B, a “schedule a call” CTA rather than a full demo request form.

- Serve device-specific CTAs: The same page can show “Start free trial” on desktop and “Get a personalized demo” on mobile, based on device detection. These are different friction levels appropriate to different contexts.

- Use SMS or email capture as the bridge: Mobile visitors who are not ready to convert can enter a re-engagement flow. Capturing their email or phone number on mobile creates a continuation path that can close on desktop. A structured lead nurturing strategy is what turns that mobile email capture into an eventual conversion.
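The device-specific CTA idea can be sketched server-side as follows. This is a minimal illustration, not a full device-detection library: the user-agent check is a crude substring heuristic, the CTA labels and paths are placeholders, and the `returning_visitor` flag is an added assumption standing in for whatever intent signal you have available.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cta:
    label: str
    destination: str


# Hypothetical offers; labels and paths are placeholders.
PRIMARY = Cta("Start free trial", "/signup")
MICRO = Cta("Email me the pricing guide", "/guide")

# Rough heuristic only: misses some devices (e.g. iPad) and is easy to spoof.
MOBILE_HINTS = ("iphone", "android", "mobile")


def is_mobile(user_agent: str) -> bool:
    """Crude user-agent check for phone-class devices."""
    ua = user_agent.lower()
    return any(hint in ua for hint in MOBILE_HINTS)


def choose_cta(user_agent: str, returning_visitor: bool) -> Cta:
    """First-time mobile visitors get the lower-friction offer;
    everyone else gets the primary conversion ask."""
    if is_mobile(user_agent) and not returning_visitor:
        return MICRO
    return PRIMARY
```

Keeping the decision in one function makes it easy to log which offer each visitor saw, which is needed later to compare conversion by device and offer.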

Page speed as a conversion variable

Page speed on mobile is not a technical metric. It is a conversion metric. The correlation between Time to First Byte (TTFB), Largest Contentful Paint (LCP), and conversion rate on mobile is measurable and consistent across industries.

Benchmarks worth tracking:

| Metric | Target for mobile | Common underperforming range |
| --- | --- | --- |
| LCP | Under 2.5 seconds | 4-8 seconds |
| First Input Delay | Under 100 ms | 200-400 ms |
| Cumulative Layout Shift | Under 0.1 | 0.2-0.5 |
| Page weight | Under 1.5 MB | 3-6 MB |

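If your mobile conversion gap correlates with pages that have poor Core Web Vitals scores, page speed is likely a primary driver, not a secondary one. Note that Interaction to Next Paint (INP, with a “good” threshold of 200 ms) replaced First Input Delay as a Core Web Vital in March 2024, so track INP as well if your tooling reports it.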
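A small sketch of how the benchmark targets could be applied to measured page data is given below. The metric key names and the sample measurements are assumptions for illustration; the thresholds are the mobile targets listed in the benchmark table.

```python
from typing import Dict, List

# Mobile targets from the benchmark table; units are encoded in the key names.
MOBILE_TARGETS: Dict[str, float] = {
    "lcp_seconds": 2.5,
    "fid_ms": 100,
    "cls": 0.1,
    "page_weight_mb": 1.5,
}


def failing_metrics(measured: Dict[str, float]) -> List[str]:
    """Names of metrics at or above the mobile target.
    Metrics missing from the measurement are skipped, not failed."""
    return [
        name
        for name, target in MOBILE_TARGETS.items()
        if name in measured and measured[name] >= target
    ]


if __name__ == "__main__":
    # Hypothetical field data for one landing page.
    page = {"lcp_seconds": 5.2, "fid_ms": 80, "cls": 0.25, "page_weight_mb": 1.2}
    print(failing_metrics(page))
```

Running this per landing page and joining the result to device-level conversion rates is one way to test whether the slow pages are also the pages where the mobile gap is widest.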

Form optimization for mobile

Form design for mobile requires different defaults than desktop:

- Reduce fields to the minimum necessary: Every field removed from a mobile form reduces friction disproportionately compared to desktop. For lead gen, first name and email outperforms first name, last name, email, phone, and company. Review the anatomy of a lead generation landing page to understand which elements are load-bearing for conversion.

- Use input type attributes correctly: type="email" triggers the email keyboard. type="tel" triggers the telephone keypad. type="number" with inputmode="decimal" is better for currency inputs because it brings up a numeric keypad with a decimal separator.

- Autofill and autocomplete: Use autocomplete attributes on all relevant fields. Name, email, address, and card fields should all have correct autocomplete values to leverage browser autofill.

- Single-column layouts: Two-column form layouts on desktop become cramped and misaligned on mobile.

- Sticky CTAs: A fixed bottom bar with the primary CTA ensures it is always accessible without scrolling.

Trust signals adapted for mobile

Trust signals that work on desktop (logos, review snippets, security badges, guarantee text) often render below the fold on mobile or in a format that does not communicate trust clearly on a small screen. Run a mobile-specific audit of where trust signals appear in the mobile layout and whether they are visible at decision points. Leveraging social proof effectively requires it to appear at the moment the user is deciding, not buried below the fold.

Where tracking breaks on mobile and how to compensate

ITP and cookie restrictions

Safari on iOS applies ITP aggressively. Script-set first-party cookies expire after 7 days, or after 24 hours when the visit came from a link decorated with tracking parameters (such as click IDs) from a domain Safari classifies as a tracker, which is common for ad-platform traffic. Users who return after the cap appear as new visitors. Your returning visitor conversion rate on mobile Safari is artificially depressed and your new visitor count is inflated. This affects:

- Email click attribution (clicks that happened before the cookie expired are lost)

- Retargeting audience building

- Returning visitor segment analysis

Compensation approaches:

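- Use server-side tagging to set cookies via HTTP headers rather than JavaScript. These cookies are treated as first-party and are not subject to the script-set cap, provided the tagging endpoint runs on your own domain and infrastructure; Safari also caps server-set cookies at 7 days when it detects CNAME cloaking or a server IP range that does not match the main site.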

- Implement a login or email capture as early as possible in the mobile journey to create a persistent identifier that survives cookie resets.

- Use UTM parameter persistence via URL hash or localStorage as a fallback for attribution within a session.
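To make the first approach concrete, the sketch below builds the value of a `Set-Cookie` response header on the server using Python's standard `http.cookies` module. The cookie name `visitor_id`, the one-year lifetime, and the attribute choices are assumptions for illustration; how the header is attached to the response depends on your web framework or tagging server.

```python
from http.cookies import SimpleCookie


def visitor_cookie_header(visitor_id: str, max_age_days: int = 365) -> str:
    """Build the value of a Set-Cookie header for a first-party visitor ID."""
    cookie = SimpleCookie()
    cookie["visitor_id"] = visitor_id            # hypothetical cookie name
    morsel = cookie["visitor_id"]
    morsel["max-age"] = max_age_days * 24 * 60 * 60
    morsel["path"] = "/"
    morsel["secure"] = True                      # sent over HTTPS only
    morsel["httponly"] = True                    # not readable via document.cookie
    morsel["samesite"] = "Lax"
    return morsel.OutputString()


if __name__ == "__main__":
    # The returned string is sent as: Set-Cookie: <value>
    print(visitor_cookie_header("abc123"))
```

Marking the cookie `HttpOnly` is a design choice that suits server-side tagging, where the server reads the identifier from the request; drop it if client-side scripts need to read the value.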

The broader challenge of navigating conversion attribution in a cookieless environment applies directly here: ITP is, in practice, a partial cookieless environment already enforced on iOS Safari.

Session fragmentation

Set your GA4 session timeout to match actual user behavior. For most mobile-heavy sites, the default 30-minute timeout is too short. Users who switch to another app and return within an hour should not be counted as new sessions. Extending to 60 minutes reduces artificial session inflation and gives you more accurate per-session conversion rates.
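The effect of the timeout can be illustrated with a minimal sessionization sketch: a new session starts whenever the gap since the previous event exceeds the inactivity timeout. The event timestamps are invented (a user who leaves for 45 minutes mid-visit), and this is a simplification of how an analytics tool counts sessions, not a reproduction of GA4's internals.

```python
from datetime import datetime, timedelta
from typing import List


def count_sessions(event_times: List[datetime], timeout: timedelta) -> int:
    """Count sessions for one user: a new session starts whenever the gap
    since the previous event exceeds the inactivity timeout."""
    if not event_times:
        return 0
    ordered = sorted(event_times)
    sessions = 1
    for previous, current in zip(ordered, ordered[1:]):
        if current - previous > timeout:
            sessions += 1
    return sessions


# Hypothetical mobile visit interrupted by a 45-minute app switch.
EVENTS = [
    datetime(2024, 3, 1, 12, 0),
    datetime(2024, 3, 1, 12, 5),
    datetime(2024, 3, 1, 12, 50),
    datetime(2024, 3, 1, 12, 55),
]

if __name__ == "__main__":
    print(count_sessions(EVENTS, timedelta(minutes=30)))
    print(count_sessions(EVENTS, timedelta(minutes=60)))
```

With the 30-minute timeout the interrupted visit is split in two, so a purchase in the second half would be credited to a session with no landing-page or product-page steps; with 60 minutes it stays one session.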

Cross-device measurement with GA4

GA4’s User-ID feature allows you to stitch sessions across devices for logged-in users. If your funnel has any login step, implement User-ID to get accurate cross-device funnel data. Without it, GA4 identifies visitors per device and browser rather than per person, and the gap between desktop and mobile conversion rates is partially a measurement artifact. The B2B buying journey in particular routinely spans 5 or more touchpoints across devices, meaning single-device funnel analysis will systematically misattribute contribution.

How Pathmonk increases conversion rates on both desktop and mobile

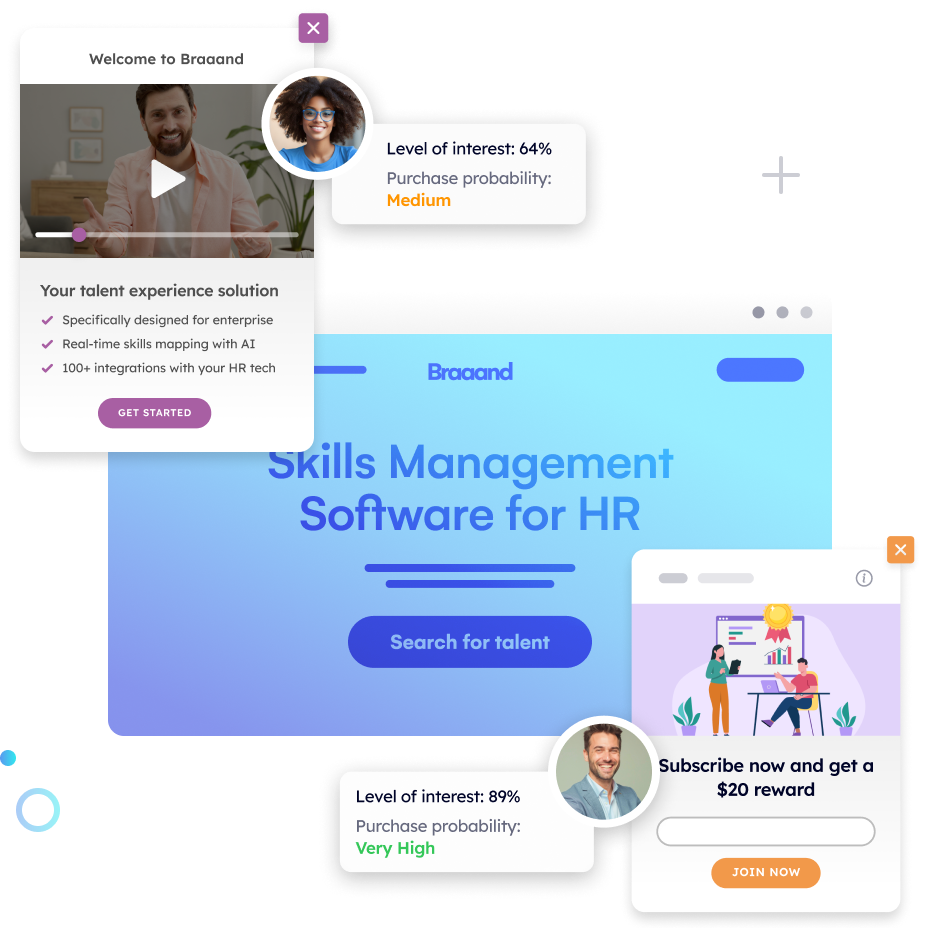

Pathmonk’s AI models where each visitor sits in their buying journey in real time, using behavioral signals collected within the session: scroll depth, time on page, traffic source, device type, and prior visit history. Based on that assessment, it layers a different experience onto the existing page (a different CTA, a lower-friction micro-conversion offer, or trust signals surfaced at the right moment) without requiring separate landing pages for each segment.

On desktop, where intent is more evenly distributed, this means high-intent returning visitors get the primary CTA with friction minimized, while first-visit organic visitors get a mid-funnel offer that matches their actual readiness. On mobile, where the majority of visitors, particularly from paid social, are in the awareness or early consideration stage, Pathmonk shows them more educational content. The goal is not to close them on the first mobile visit but to get them into a sequence that closes later, often on desktop. Visibility settings can be adjusted by device so mobile and desktop experiences are configured independently.

A practical side effect of this approach is attribution. When a mobile visitor submits an email capture and later converts on desktop through that sequence, the mobile touchpoint becomes traceable. Without it, that visit produces no conversion event and receives no credit. Pathmonk’s fingerprint-based identification reduces dependency on cookies, which means it is less exposed to the Safari ITP resets described earlier in this article. The sessions stay connected even when JS-set cookies have expired.

Performance is measured through a built-in 50/50 A/B test that runs continuously: half of traffic sees Pathmonk-personalized experiences, half sees the original page. Uplift is calculated against that control group, not against month-over-month comparisons that conflate seasonality and traffic mix changes. You can filter results by device to see desktop and mobile uplift independently, which is where the mobile gains from intent-matched micro-conversions become visible as a separate metric rather than buried in a blended rate.

FAQ on desktop and mobile conversion rates

My mobile traffic is mostly from paid social. Is the lower conversion rate just a traffic quality issue?

Partially, but not entirely. Paid social mobile traffic does have lower average purchase intent than branded search or direct traffic. However, within that cohort, users who scroll past 50% of a page have shown enough engagement that they should convert at a clearly higher rate than the cohort average. If your scroll-depth-adjusted conversion rate is still low on paid social mobile, the problem is UX and offer fit, not just traffic quality. Segment your analysis by scroll depth before concluding it is purely an audience issue.

Should I build separate mobile landing pages or use responsive design with device detection?

Separate pages give you more control over layout, content prioritization, and form structure, but they create a maintenance overhead and can cause canonical/indexing issues if not set up correctly. Responsive design with server-side device detection for dynamic content swaps is a more sustainable architecture for most teams. The key variables to swap are: CTA copy and destination, form field count, trust signal placement, and hero image/video weight. Review the attributes of a good landing page experience as a baseline checklist for both variants.

How do I know if my mobile conversion gap is a measurement problem versus a real UX problem?

Run a cohort analysis of users who converted on desktop and check how many had prior mobile sessions in the same attribution window. If more than 15-20% of desktop converters have prior mobile sessions, you have a measurement problem contributing to the gap. Fix attribution first before investing heavily in mobile UX changes, or you will optimize based on incomplete data.

Does Google’s mobile-first indexing affect conversion rate, or just rankings?

Mobile-first indexing affects which version of your page Google evaluates for rankings, but it indirectly affects conversion because pages optimized for mobile-first indexing tend to have better Core Web Vitals, cleaner content hierarchy, and faster load times. These are the same factors that drive mobile conversion. Treating mobile as the primary development target rather than a responsive afterthought produces better conversion outcomes.

At what traffic volume does it make sense to run mobile-specific A/B tests?

To detect a 10% relative lift on a 1% baseline conversion rate in a mobile-only test at 95% confidence and 80% power, you need roughly 160,000 mobile visitors per variant. For most sites, this means mobile-specific testing is only viable for top-of-funnel pages (homepage, main landing pages) or high-traffic e-commerce category pages. For lower-traffic pages, use qualitative methods (session recording, user testing) to inform mobile changes rather than A/B testing. CRO for low-traffic sites requires a different approach, and mobile sub-segments are typically too small for reliable A/B results.
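The required traffic depends heavily on the statistical power you assume, so it is worth recomputing for your own baseline and target lift. The sketch below uses the standard normal-approximation formula for a two-sided two-proportion test; the 80% power default is an assumption, and other calculators may differ slightly depending on the variance formula they use. For a 1% baseline and a 10% relative lift it returns about 163,000 visitors per variant.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(
    baseline: float,
    relative_lift: float,
    alpha: float = 0.05,
    power: float = 0.80,
) -> int:
    """Approximate per-variant sample size for a two-sided two-proportion
    z-test, using the unpooled-variance normal approximation."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # e.g. about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)            # e.g. about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


if __name__ == "__main__":
    print(visitors_per_variant(baseline=0.01, relative_lift=0.10))
```

Because the sample size scales with the inverse square of the absolute difference, halving the detectable lift roughly quadruples the traffic needed, which is why small mobile sub-segments rarely support A/B tests.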

How does Safari ITP specifically affect my mobile conversion funnel reporting?

Safari ITP caps script-set first-party cookies at 7 days, and at 24 hours when the visitor arrives via a link decorated with tracking parameters from a domain Safari classifies as a tracker. This means visitors on iOS Safari who return after the cap appear as new visitors. Your returning visitor conversion rate is understated and your new visitor count is overstated on mobile. This does not affect your actual conversion rate, but it affects every segmentation analysis that uses visit number as a variable, including retargeting audience quality scores and email click attribution.

My mobile users are converting at a lower AOV as well as a lower rate. What does that indicate?

Lower AOV on mobile suggests that even when users do convert, they are choosing lower-commitment options (smaller packages, shorter subscriptions, fewer items). This is consistent with a mobile intent mismatch: they are converting before they are fully convinced. The fix is to ensure that your mobile product pages carry sufficient information to support confident decisions, not just to reduce friction. Reducing friction without also building confidence can increase conversion rate while decreasing AOV, which may not improve revenue.
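The trade-off is easier to see as revenue per visitor, which is conversion rate multiplied by AOV. The figures below are invented purely to illustrate the arithmetic: a friction-only change lifts conversion from 0.9% to 1.1% while AOV falls from 80 to 62.

```python
def revenue_per_visitor(conversion_rate: float, average_order_value: float) -> float:
    """Expected revenue per visitor: conversion rate times average order value."""
    return conversion_rate * average_order_value


# Hypothetical before/after figures for a friction-only change on mobile.
before = revenue_per_visitor(0.009, 80.0)   # 0.9% conversion, AOV of 80
after = revenue_per_visitor(0.011, 62.0)    # 1.1% conversion, AOV of 62

if __name__ == "__main__":
    print(f"before: {before:.3f}  after: {after:.3f}")
```

In this example conversion rate rises by about 22% but revenue per visitor falls, so a mobile test should be judged on revenue per visitor rather than on conversion rate alone.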

Can progressive web apps (PWAs) close the mobile conversion gap?

PWAs improve load performance, enable push notifications, and create an app-like session experience that can reduce abandonment. They are most effective when mobile sessions are recurring and engagement-oriented (media, tools, frequent-use products). For single-session or low-frequency purchase journeys, the implementation cost of a PWA typically outweighs the conversion gain compared to page speed optimization and intent-matched CTAs.

Key takeaways

- Desktop and mobile conversion gaps are multi-layered: Intent distribution, UX friction, technical rendering, and measurement gaps all contribute. Treating it as a single problem produces incomplete solutions.

- A significant share of the gap is often a measurement artifact: Cross-device journeys, ITP-driven cookie expiry, app-switching session fragmentation, and touch event undercounting all suppress reported mobile conversion rates without reflecting actual user failure.

- Intent mismatch is the highest-leverage fix: Serving the wrong conversion ask (a 12-field form to a browse-mode mobile visitor) produces low conversion rates regardless of UX quality. Matching the conversion ask to inferred intent stage produces disproportionate gains.

- Page speed is a conversion variable on mobile, not a technical metric: LCP above 3 seconds directly correlates with increased bounce and reduced micro-conversion engagement. It is a prerequisite fix, not an optional improvement.

- Mobile-specific form optimization has higher ROI than most design changes: Reducing field count, using correct input types, and enabling autofill produce measurable improvements with low development cost.

- Paid social mobile traffic requires different treatment: This cohort has low average intent. Measuring its conversion rate against the same benchmark as organic or direct traffic produces misleading conclusions.

- Safari ITP requires server-side tagging to maintain attribution accuracy: Without it, returning visitor analysis and email click attribution on iOS are structurally inaccurate.

- Pathmonk’s intent layer addresses the message-to-visitor mismatch dynamically, reducing the need to build and maintain separate mobile landing pages for each intent cohort.

- Fixing mobile conversion requires sequenced changes: Speed first, then tracking correction, then intent-matched offer structure. Running these out of order produces suboptimal results.
