A lead is qualified when there is sufficient evidence that the person or account has both the intent to solve a specific problem and the organizational capacity to buy. In practice, qualification is not a binary state but a score derived from behavioral signals, firmographic fit, and stage-appropriate engagement patterns. Most qualification frameworks collapse under pressure because they conflate data completeness with actual purchase readiness.

The gap between a “qualified” lead in your CRM and a deal that closes is largely explained by how your qualification model was built: whether it was constructed from closed-won data or from intuition, whether it accounts for buying committee dynamics, and whether it tracks behavioral recency rather than just profile attributes. A lead that ticks all the ICP boxes but has not engaged meaningfully in 30 days is not qualified in any operational sense.

The question of what makes a lead qualified has been asked at every revenue offsite for the past decade. Yet in most organizations, the answer is still a mix of BANT criteria defined in a spreadsheet, a lead score threshold nobody has audited in two years, and a loose handoff agreement between marketing and sales that breaks down whenever pipeline is soft.

Several structural shifts have made this problem more acute. Buying committees have expanded: Gartner data consistently shows 6 to 10 stakeholders involved in B2B software purchases above $50k. A single lead who fills out a form is, at best, a signal about one node in a decision network. At the same time, intent data has matured: third-party behavioral signals, product usage data, and content engagement can now be aggregated into qualification models that were not possible five years ago. Finally, pipeline pressure has increased scrutiny on conversion rates at every stage, which means poorly qualified leads no longer just waste sales time; they distort forecasting and inflate CAC. Paid lead generation in particular tends to produce high volume with low buyer intent, which makes downstream qualification accuracy even more critical.

What most marketers misunderstand is that lead qualification is a probability estimation problem, not a binary sorting problem. The goal is not to decide whether a lead is good or bad. The goal is to estimate the probability of revenue given current evidence, and to determine what additional evidence would most efficiently update that estimate. When you frame it this way, the inadequacy of most qualification systems becomes obvious. Teams that haven’t fully defined their ICP yet face an even more foundational version of this problem.
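One way to make this framing concrete is a simple Bayesian odds update. The sketch below is illustrative only: the base rate and the likelihood ratios are made-up numbers, not benchmarks, and `update_probability` is a hypothetical helper name. It also assumes the signals are independent of each other, which is rarely strictly true in practice.

```python
def update_probability(prior: float, likelihood_ratios: list[float]) -> float:
    """Update a close probability with a sequence of evidence likelihood ratios.

    Each ratio is P(signal | deal closes) / P(signal | deal does not close).
    Assumes the signals are conditionally independent (a simplification).
    """
    odds = prior / (1.0 - prior)
    for ratio in likelihood_ratios:
        odds *= ratio
    return odds / (1.0 + odds)


# Hypothetical inputs: a 2% base close rate and two observed signals.
base_rate = 0.02
pricing_page_visit = 3.0       # assumed: 3x more common among eventual wins
second_contact_engaged = 4.0   # assumed: 4x more common among eventual wins

p = update_probability(base_rate, [pricing_page_visit, second_contact_engaged])
print(f"Estimated close probability: {p:.1%}")
```

The same structure also answers the second half of the question: the signal with the largest likelihood ratio is the one worth collecting next, because it moves the estimate the most.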

This article covers how qualification frameworks are actually built in high-performing teams, how to instrument them operationally, what kills accuracy, and where tooling either helps or introduces noise.

The structural problems with common qualification frameworks

BANT was designed for a different sales motion

BANT (Budget, Authority, Need, Timeline) was developed by IBM in the 1960s for outbound field sales. It was built for a world where a single buyer with a capital budget made decisions on a predictable procurement cycle. In modern B2B SaaS, applying BANT as a qualification gate creates several specific problems:

- Budget is rarely confirmed early. Procurement budgets in software are often discretionary and can be created post-discovery if the problem is compelling enough. Filtering on confirmed budget early eliminates high-intent prospects who haven’t formally allocated spend yet.

- Authority is a moving target. The person who fills out a form or attends a webinar is rarely the final economic buyer. Disqualifying because the contact isn’t a VP is a lead source problem, not a lead quality problem.

- Need is the only criterion that actually holds up under scrutiny, and most teams assess it based on self-reported data (form fills, job titles) rather than behavioral evidence.

- Timeline is almost always aspirational in early-stage conversations. Leads that say “Q3” often slip to Q1 of next year.

The practical consequence is that BANT-based qualification either sets the bar so high that it kills pipeline velocity, or it gets gamed by reps who mark everything as qualified to avoid scrutiny. Frameworks like MEDDIC, CHAMP, and PACTT were developed specifically to address these gaps in different sales contexts.

Lead scoring models age out faster than they’re updated

Most lead scoring models assign static weights to demographic and behavioral attributes: job title = 10 points, opened email = 5 points, visited pricing page = 15 points. The weights are set once and rarely revisited unless someone flags that conversion rates are dropping.

The structural issue is that these models are almost never validated against closed-won data. They’re built from assumptions about what a good lead looks like, not from statistical analysis of what characteristics actually predict revenue. A properly constructed lead score is a regression model trained on historical outcomes, not a points spreadsheet.
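To illustrate the difference between a points spreadsheet and a model fitted to outcomes, here is a minimal sketch of logistic regression trained on a toy history. Everything in it is an assumption for illustration: the three features, the eight made-up records, and the plain gradient-descent fitting loop, which is written out by hand only to keep the example self-contained. A real implementation would use a statistics library and far more data.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(rows, labels, lr=0.1, epochs=2000):
    """Fit logistic-regression weights with plain batch gradient descent."""
    n_features = len(rows[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(rows, labels):
            error = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x))) - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi
            grad_b += error
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(rows)
    return weights, bias


def predict(weights, bias, x):
    """Return the modeled probability of closed-won for one lead."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


# Toy history (made up): [icp_fit, visited_pricing, contacts_engaged] -> closed-won?
history = [
    ([1, 1, 3], 1), ([1, 1, 2], 1), ([1, 0, 2], 1), ([0, 1, 3], 1),
    ([1, 0, 1], 0), ([0, 1, 1], 0), ([0, 0, 1], 0), ([1, 1, 1], 0),
]
rows = [features for features, _ in history]
labels = [won for _, won in history]

weights, bias = fit_logistic(rows, labels)
strong = predict(weights, bias, [1, 1, 3])
weak = predict(weights, bias, [0, 0, 1])
print(f"strong lead: {strong:.2f}, weak lead: {weak:.2f}")
```

The point of the exercise is that the weights come out of the data. In this toy set the number of engaged contacts separates wins from losses, so the fitted model leans on it, whatever a points spreadsheet might have assumed about job titles or email opens.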

Additionally, most scoring models ignore decay. A lead who visited your pricing page eight months ago and has been dormant since then is carrying a high score in your MAP with no corresponding purchase intent today. Without time-weighted scoring, your MQL pool is polluted with historically interesting but currently cold records.

The MQL/SQL handoff is a proxy metric for a process problem

The MQL to SQL conversion rate is the canonical metric for lead qualification health. But it’s a lagging indicator that obscures root cause. An MQL/SQL conversion rate of 25% could mean:

- Marketing is generating too many low-fit leads (ICP issue)

- Marketing is generating good leads but passing them at the wrong stage (timing issue)

- Sales is disqualifying valid leads because the scoring model doesn’t match their criteria (alignment issue)

- The product or market positioning doesn’t match what buyers actually need (messaging issue)

Without decomposing MQL/SQL conversion by source, persona, and disqualification reason, the metric is not actionable. Most teams track the aggregate number without the diagnostic breakdown. If you’re unsure whether low conversion is a traffic quality problem or a site problem, this breakdown of conversion rate root causes is worth reviewing alongside your qualification analysis.
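A minimal sketch of that decomposition follows. The records and field names (`source`, `persona`, `became_sql`, `dq_reason`) are hypothetical and do not reflect any particular CRM schema.

```python
from collections import defaultdict

# Hypothetical MQL records; field names are assumptions, not a CRM schema.
mqls = [
    {"source": "paid_social", "persona": "manager", "became_sql": False, "dq_reason": "low_fit"},
    {"source": "paid_social", "persona": "manager", "became_sql": False, "dq_reason": "low_fit"},
    {"source": "paid_social", "persona": "director", "became_sql": True, "dq_reason": None},
    {"source": "organic", "persona": "director", "became_sql": True, "dq_reason": None},
    {"source": "organic", "persona": "director", "became_sql": False, "dq_reason": "timing"},
    {"source": "organic", "persona": "manager", "became_sql": True, "dq_reason": None},
]


def conversion_by(records, key):
    """Return {segment: (sql_count, mql_count, rate)} grouped by `key`."""
    totals = defaultdict(lambda: [0, 0])
    for record in records:
        bucket = totals[record[key]]
        bucket[0] += int(record["became_sql"])
        bucket[1] += 1
    return {seg: (sql, n, sql / n) for seg, (sql, n) in totals.items()}


def disqualification_reasons(records):
    """Count disqualification reasons among MQLs that did not become SQLs."""
    counts = defaultdict(int)
    for record in records:
        if not record["became_sql"]:
            counts[record["dq_reason"]] += 1
    return dict(counts)


by_source = conversion_by(mqls, "source")
by_persona = conversion_by(mqls, "persona")
reasons = disqualification_reasons(mqls)

for segment, (sql, n, rate) in by_source.items():
    print(f"{segment}: {sql}/{n} = {rate:.0%}")
print(reasons)
```

In this toy data the aggregate rate is 50%, but paid social converts at half the rate of organic, and its losses are all fit-related. The aggregate number hides that.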

What actually predicts qualification

Behavioral signals versus profile attributes

There are two categories of qualification signal: profile attributes (what a lead looks like) and behavioral signals (what a lead does). Profile attributes include firmographics, technographics, and contact-level data. Behavioral signals include website activity, content consumption, email engagement, product usage, and third-party intent data.

Profile attributes are necessary but not sufficient. A lead from a 500-person fintech company with a CTO title fits your ICP. That does not mean they’re in-market. Behavioral signals, particularly high-intent behavioral clusters, are stronger predictors of near-term purchase readiness.

High-intent behavioral clusters worth tracking:

- Pricing page visits combined with two or more feature-specific page views in a single session

- Return visits within 7 days of initial conversion

- Multiple contacts from the same account engaging independently (buying committee activation)

- Direct navigation to case studies or ROI calculators from non-branded search

- Demo or trial request combined with any firmographic fit signal

The key insight is that combination matters more than individual actions. A single pricing page visit is weak. A pricing page visit, followed by a case study in the same vertical, followed by a return visit four days later, from a contact at a company that matches your ICP, is materially different.
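As a rough sketch of how combinations can be detected rather than single actions, the snippet below checks one contact's sessions against a few of the clusters listed above. The page categories, function names, and the use of the first recorded session as a stand-in for the initial conversion are all assumptions for illustration.

```python
from datetime import date

# Hypothetical session log for one contact; page categories are assumptions.
sessions = [
    {"day": date(2024, 3, 4), "pages": ["pricing", "feature", "feature"]},
    {"day": date(2024, 3, 8), "pages": ["case_study"]},
]


def has_pricing_feature_cluster(session) -> bool:
    """Pricing page plus two or more feature pages in the same session."""
    pages = session["pages"]
    return "pricing" in pages and pages.count("feature") >= 2


def returned_within(sessions, days: int = 7) -> bool:
    """True if any later session falls within `days` of the first session."""
    ordered = sorted(s["day"] for s in sessions)
    return any(0 < (d - ordered[0]).days <= days for d in ordered[1:])


def matched_clusters(sessions, icp_fit: bool) -> list[str]:
    """List which high-intent clusters this contact's history matches."""
    clusters = []
    if any(has_pricing_feature_cluster(s) for s in sessions):
        clusters.append("pricing_plus_features")
    if returned_within(sessions):
        clusters.append("return_within_7_days")
    if icp_fit and any("case_study" in s["pages"] for s in sessions):
        clusters.append("icp_fit_case_study")
    return clusters


print(matched_clusters(sessions, icp_fit=True))
```

A contact with a single pricing page visit matches none of these clusters, which mirrors the point above: the isolated action is weak, the combination is what carries weight.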

Account-level versus contact-level qualification

Most qualification logic operates at the contact level. This is a mismatch with how B2B buying actually works. Qualification should be assessed at the account level first, then the contact level.

Account-level qualification asks: is this company in a position to buy? Contact-level qualification asks: is this person positioned to influence or drive a decision?

This has direct implications for your scoring model:

- Account score should incorporate firmographic fit, technographic signals (existing stack compatibility), intent topic clusters from third-party sources, and any prior engagement history from the account (not just the current contact)

- Contact score should incorporate role relevance, engagement recency and depth, and relationship to the buying committee

When an account has multiple contacts engaging simultaneously, that is a qualification upgrade event regardless of individual contact scores. Most MAPs don’t model this out of the box.
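Where the MAP does not model this, the upgrade event can be approximated outside it. The sketch below groups engagement events by account and flags accounts with several distinct contacts active in a recent window. The event tuples, the 14-day window, and the two-contact minimum are assumptions chosen for illustration.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical engagement events: (account, contact, day of engagement).
events = [
    ("acme", "ana@acme.example", date(2024, 5, 1)),
    ("acme", "ben@acme.example", date(2024, 5, 3)),
    ("acme", "cy@acme.example", date(2024, 5, 6)),
    ("globex", "dee@globex.example", date(2024, 5, 2)),
    ("globex", "dee@globex.example", date(2024, 5, 5)),
]


def accounts_with_committee_activation(events, as_of, window_days=14, min_contacts=2):
    """Accounts where at least `min_contacts` distinct contacts engaged in the window."""
    cutoff = as_of - timedelta(days=window_days)
    contacts = defaultdict(set)
    for account, contact, day in events:
        if cutoff <= day <= as_of:
            contacts[account].add(contact)
    return {acct: len(people) for acct, people in contacts.items() if len(people) >= min_contacts}


upgraded = accounts_with_committee_activation(events, as_of=date(2024, 5, 7))
print(upgraded)
```

Note that the second account has two events but only one contact, so it is not flagged. Counting distinct contacts rather than raw events is the design choice that separates committee activation from one enthusiastic individual.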

Intent data: what it adds and where it misleads

Third-party intent data (from providers like Bombora, G2, or TechTarget) tracks behavioral signals outside your own properties: content consumption, category research, competitor comparisons. It adds genuine qualification signal in specific conditions:

- When you have no first-party data on a named account and need to prioritize outreach

- When account-level intent clusters align with your product category and timing is constrained (fiscal year end, renewal windows)

- When combined with first-party engagement to increase confidence

Where intent data misleads:

- It is aggregated and lagged. Most intent data providers deliver weekly or bi-weekly batches. An “in-market” signal from last Tuesday means the account was researching your category then. It doesn’t mean they are now.

- Intent data has false positive rates that vary by category. In broad categories (security, analytics), intent signals are diluted because the category is always researched by someone. Narrow categories improve signal quality.

- Intent data cannot tell you which contact at the account is doing the research, in most cases. You’re working with account-level signals and inferring contact-level relevance.

Use intent data to prioritize prospecting and to supplement first-party qualification signals. Do not use it as a primary qualification gate. For a direct comparison of intent platforms against traditional lead tools, see intent data platforms vs traditional lead generation tools.

Building a qualification model that actually works

Step 1: Define the outcome variable first

Before building any scoring logic, define what you’re predicting. The most common choices are:

- Opportunity created (lead to pipe): useful if your pipeline creation is the bottleneck

- Opportunity closed-won (lead to revenue): more accurate but requires longer training windows

- Time to close (velocity): useful for prioritization within an already-qualified pool

Most teams should optimize for closed-won, not opportunity creation. Optimizing for opportunity creation rewards sales acceptance, not revenue. These are not the same thing.

Step 2: Audit closed-won data for actual qualification patterns

This process is closely related to predictive lead scoring, which formalizes the same logic using machine learning on historical outcome data. Pull your last 12 to 24 months of closed-won accounts and map backward:

- What was the firmographic profile at first touch?

- What behavioral signals appeared in the 30 days before opportunity creation?

- How many contacts from the account were engaged before the opportunity was created?

- What was the average time from MQL to close for different ICP segments?

This analysis almost always surfaces surprises. Common findings include: small accounts convert faster but at lower ACV; mid-market accounts from specific verticals have significantly higher win rates; certain behavioral clusters (e.g., two or more contacts engaging within the same week) predict pipeline velocity accurately.
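A minimal sketch of this kind of audit, assuming a closed-won export with hypothetical column names and made-up values:

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Hypothetical closed-won export; column names and values are assumptions.
closed_won = [
    {"segment": "smb", "mql_date": date(2024, 1, 10), "close_date": date(2024, 2, 9),
     "contacts_before_opp": 1, "acv": 6000},
    {"segment": "smb", "mql_date": date(2024, 2, 1), "close_date": date(2024, 3, 12),
     "contacts_before_opp": 2, "acv": 8000},
    {"segment": "mid_market", "mql_date": date(2024, 1, 5), "close_date": date(2024, 4, 4),
     "contacts_before_opp": 3, "acv": 40000},
    {"segment": "mid_market", "mql_date": date(2024, 2, 1), "close_date": date(2024, 6, 10),
     "contacts_before_opp": 4, "acv": 50000},
    {"segment": "mid_market", "mql_date": date(2024, 3, 1), "close_date": date(2024, 6, 9),
     "contacts_before_opp": 3, "acv": 45000},
]


def summarize_by_segment(deals):
    """Median days from MQL to close, contacts engaged, and ACV per segment."""
    grouped = defaultdict(list)
    for deal in deals:
        grouped[deal["segment"]].append(deal)
    summary = {}
    for segment, rows in grouped.items():
        summary[segment] = {
            "deals": len(rows),
            "median_days_to_close": median((r["close_date"] - r["mql_date"]).days for r in rows),
            "median_contacts_before_opp": median(r["contacts_before_opp"] for r in rows),
            "median_acv": median(r["acv"] for r in rows),
        }
    return summary


summary = summarize_by_segment(closed_won)
for segment, stats in summary.items():
    print(segment, stats)
```

Medians are used instead of means here because a handful of unusually long or large deals would otherwise dominate small segments.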

Step 3: Build a tiered scoring model, not a single threshold

A single MQL threshold is a blunt instrument. A tiered model assigns leads to different qualification buckets that map to different sales motions:

| Tier | Criteria | Sales motion |
| --- | --- | --- |
| T1: Hot | High ICP fit + high behavioral intent + multi-contact engagement | Immediate SDR outreach, same day |
| T2: Warm | High ICP fit + moderate behavioral intent OR single high-intent action | Nurture + SDR outreach within 48h |
| T3: Developing | Moderate ICP fit + some behavioral engagement | Automated nurture, re-score on trigger |
| T4: Low fit | Low ICP fit regardless of behavior | Marketing-only, no SDR allocation |

The thresholds for each tier should be calibrated against your closed-won data, not set arbitrarily.
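The tier table can be sketched as a small routing function. The level names (`"low"`, `"moderate"`, `"high"`, `"none"`) and the handling of combinations the table does not spell out are assumptions. In particular, high fit with high intent but no multi-contact engagement is treated as T2 here, and anything else uncovered falls to T3.

```python
SALES_MOTION = {
    "T1": "Immediate SDR outreach, same day",
    "T2": "Nurture + SDR outreach within 48h",
    "T3": "Automated nurture, re-score on trigger",
    "T4": "Marketing-only, no SDR allocation",
}


def assign_tier(icp_fit: str, intent: str, multi_contact: bool) -> str:
    """Map fit and intent levels to a tier.

    icp_fit: "low" | "moderate" | "high"
    intent:  "none" | "low" | "moderate" | "high"
    Combinations the table does not cover fall through to T3 (an assumption).
    """
    if icp_fit == "low":
        return "T4"  # low fit regardless of behavior
    if icp_fit == "high":
        if intent == "high" and multi_contact:
            return "T1"
        if intent in ("moderate", "high"):
            return "T2"  # includes a single high-intent action without multi-contact
    return "T3"


tier = assign_tier("high", "high", multi_contact=True)
print(tier, "->", SALES_MOTION[tier])
```

Checking low fit first encodes the "regardless of behavior" rule from the table: no amount of engagement moves a low-fit lead into an SDR queue.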

Step 4: Build decay logic into the model

Every behavioral signal should carry a half-life. Engagement from 90 days ago should carry less weight than engagement from last week. A practical implementation approximates this with stepped windows:

- Email open: full weight if within 14 days, half weight if 15 to 45 days, zero weight after 45 days

- Page visit: full weight if within 7 days, half weight if 8 to 30 days, zero weight after 30 days

- Content download: full weight if within 21 days, half weight if 22 to 90 days, zero weight after 90 days

Profile attributes (firmographic fit) do not decay. A company’s size and vertical don’t change month to month. Only behavioral signals need decay logic.
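The stepped schedule can be sketched in a few lines. This assumes half weight applies for the entire span between the full-weight window and the zero-weight cutoff. The function names are hypothetical, and the point values reuse the example weights mentioned earlier (pricing page visit = 15, email open = 5).

```python
# (full_weight_days, zero_after_days) per signal, following the schedule above.
DECAY_WINDOWS = {
    "email_open": (14, 45),
    "page_visit": (7, 30),
    "content_download": (21, 90),
}


def decayed_weight(signal: str, age_days: int, base_points: float) -> float:
    """Return the points a signal is still worth given its age in days."""
    full_until, zero_after = DECAY_WINDOWS[signal]
    if age_days <= full_until:
        return base_points
    if age_days <= zero_after:
        return base_points * 0.5  # assumed: half weight until the zero cutoff
    return 0.0


def score_events(events) -> float:
    """Sum decayed points for (signal, age_days, base_points) tuples."""
    return sum(decayed_weight(signal, age, points) for signal, age, points in events)


stale_lead = [("page_visit", 240, 15)]                          # pricing visit eight months ago
active_lead = [("page_visit", 3, 15), ("email_open", 20, 5)]    # recent engagement

print(score_events(stale_lead), score_events(active_lead))
```

Firmographic fit would be added to the total as a separate, undecayed component, consistent with the rule that only behavioral signals lose weight over time.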

Step 5: Validate the model against holdout data

Once built, test your qualification model against a holdout set of records from a period not used in training. Measure:

- Precision: of leads scored as T1, what percentage converted to closed-won?

- Recall: of all closed-won deals, what percentage were captured at T1 or T2?

- Lift: how much more likely is a T1 lead to close compared to a random lead in your database?

If precision is high but recall is low, your model is too conservative (missing good leads). If recall is high but precision is low, your model is too permissive (passing too many weak leads). Most teams should optimize for precision when sales capacity is the constraint, and recall when pipeline is the constraint.
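These three measures are straightforward to compute from a holdout set. The sketch below uses a made-up set of 100 records, each tagged with its assigned tier and whether it closed. The counts are illustrative, not benchmarks.

```python
# Hypothetical holdout set: (assigned tier, closed-won?)
holdout = (
    [("T1", True)] * 6 + [("T1", False)] * 4
    + [("T2", True)] * 3 + [("T2", False)] * 17
    + [("T3", True)] * 1 + [("T3", False)] * 69
)


def precision(records, tier="T1"):
    """Share of leads in `tier` that converted to closed-won."""
    in_tier = [won for t, won in records if t == tier]
    return sum(in_tier) / len(in_tier)


def recall(records, tiers=("T1", "T2")):
    """Share of all closed-won deals that were scored into `tiers`."""
    won_tiers = [t for t, won in records if won]
    return sum(t in tiers for t in won_tiers) / len(won_tiers)


def lift(records, tier="T1"):
    """How much more likely a lead in `tier` is to close than a random lead."""
    base_rate = sum(won for _, won in records) / len(records)
    return precision(records, tier) / base_rate


print(f"precision (T1): {precision(holdout):.0%}")
print(f"recall (T1+T2): {recall(holdout):.0%}")
print(f"lift (T1):      {lift(holdout):.1f}x")
```

In this toy set the base close rate is 10%, so a T1 precision of 60% corresponds to roughly sixfold lift, while one in ten wins still slipped through at T3.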

Common mistakes in operational qualification

- Over-indexing on form fills. A gated asset download tells you someone wanted the content. It does not tell you they have a problem your product solves. Form fills are top-of-funnel engagement signals, not qualification signals. They need to be combined with behavioral depth and firmographic fit before they carry qualification weight.

- Using job title as a qualification proxy for authority. A VP title at a 20-person startup and a VP title at a 5,000-person enterprise represent entirely different buying authority and process complexity. Title without context is a weak signal.

- Qualifying individual contacts in isolation from account context. If three contacts from the same account have each hit your website independently in the past two weeks, that is a stronger signal than one contact with a perfect score acting alone. Account-level activity aggregation is not optional in ABM or complex-sale environments.

- Running qualification logic only on inbound leads. Outbound prospects that receive personalized sequencing and engage with it are exhibiting qualification signals that most MAPs don’t capture. Your qualification model should run across all lead sources, not just inbound form fills.

- Treating disqualification as a permanent state. A lead disqualified because of timing six months ago should be re-entered into nurture with a re-scoring trigger. Long sales cycles and budget cycles mean many leads become qualified on a cadence that doesn’t match your original outreach timing.

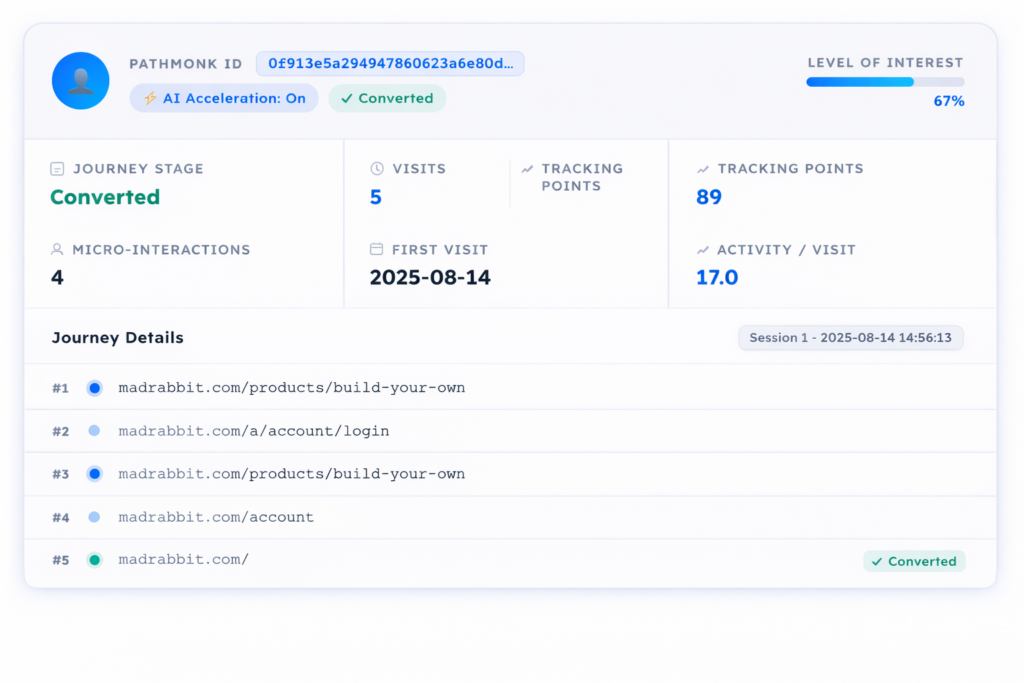

How Pathmonk increases qualified leads from your existing traffic

Pathmonk operates at the pre-conversion layer of the website funnel. It uses a cookieless behavioral tracking methodology to build intent profiles based on how visitors navigate content, how they progress through the site architecture, and how their engagement patterns align with historically observed paths to conversion.

Specifically, the system:

- Tracks content consumption sequences (not just individual page visits) to identify intent patterns

- Compares live visitor behavior against conversion-path models built from historical data on your specific site — learn more about how Pathmonk detects the stage of the customer journey

- Serves adaptive content based on inferred intent stage, to move visitors toward conversion actions without requiring a form fill — this is what powers Pathmonk’s microexperiences

- Scores anonymous visitors on intent probability before they self-identify — using fingerprint technology rather than cookies

When a lead does convert, Pathmonk passes intent signals and behavioral history into the CRM or MAP, enriching the contact record with pre-conversion behavioral data that standard tracking misses.

Sales receives leads with a richer pre-conversion context: not just “filled out contact form” but a behavioral trail showing what content they consumed, in what order, and how that pattern aligns with high-intent historical profiles. This changes the first conversation from “how did you hear about us” to a more informed opening based on what the prospect was actually researching. For B2B teams, the B2B intent leads generation add-on extends this further by surfacing which companies are visiting before they ever fill out a form.

For marketing, the practical change is that lead scores become more accurate on first contact. Rather than assigning a baseline score based solely on firmographic fit and the conversion action, the score incorporates a fuller behavioral picture. Leads that superficially look similar (same title, same company size, same asset download) can be differentiated based on their pre-conversion behavior. You can also use qualification flows to add an interactive layer that filters intent even further at the moment of conversion.

Teams using Pathmonk typically observe improvement in two specific metrics:

- MQL to SQL conversion rate, because the behavioral enrichment reduces false positives in the MQL pool

- Sales-accepted lead rate, because reps receive more context and are less likely to immediately disqualify leads that lack verbal confirmation of intent

The mechanism is behavioral enrichment, not lead generation. The number of leads doesn’t change. The quality of information available to score and route them does.

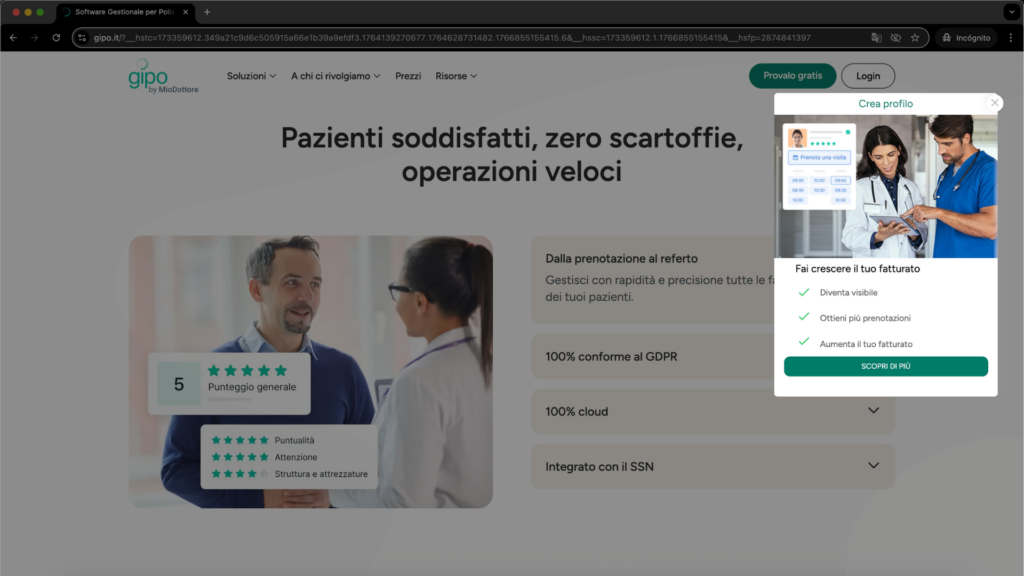

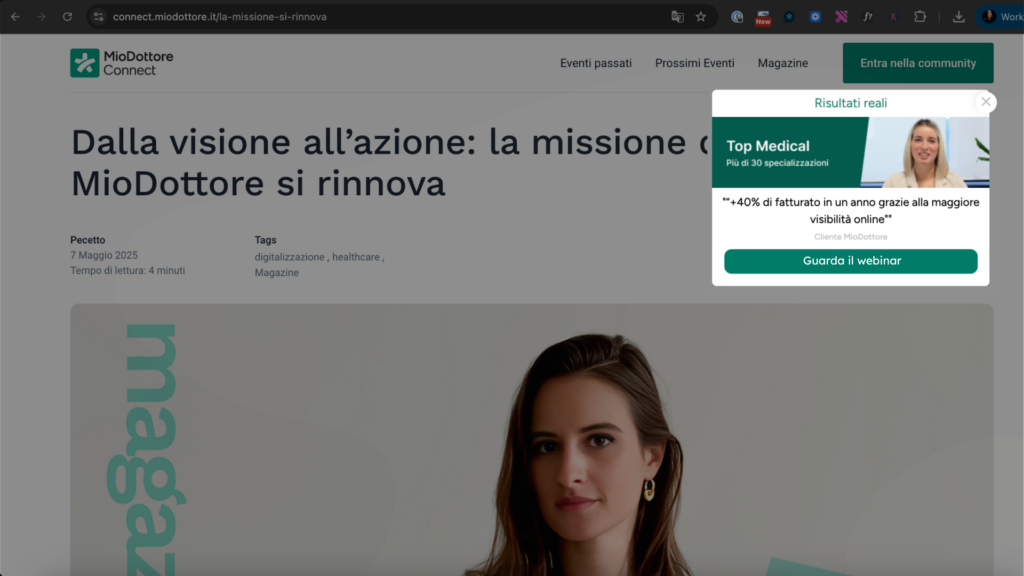

How Doctoralia increased qualified B2B leads by 82% with intent-based microexperiences

Doctoralia is a healthcare platform operating across both B2C and B2B markets. Their B2B websites — aimed at clinics and healthcare providers — were generating traffic, but conversion into qualified demo requests was falling short. The problem wasn’t volume. It was that high-intent visitors weren’t being guided toward the demo form quickly or clearly enough, so they left without taking action. No page redesign or additional ad spend was on the table; Doctoralia needed a way to help the right visitors act at the right moment without disrupting the experience for everyone else.

They ran a one-month pilot with Pathmonk across three markets — Mexico, Colombia, and Italy — using the tool’s 50/50 A/B split to keep measurement clean. Pathmonk detected where each visitor was in the buying journey and served different microexperience content accordingly. Visitors in earlier stages received messaging suited to their level of consideration; those showing high-intent signals were guided more directly toward the demo request. The content adapted to the visitor, not the other way around.

Within 14 days, the results across all three markets averaged a +82% increase in qualified B2B leads. Colombia led at +134%, Italy came in at +80%, and Mexico at +32%. Every point of lift was attributed purely to on-site behavior change — the control group ran the standard site experience in parallel, making the comparison direct and clean.

Following the trial, Doctoralia expanded into a multi-goal strategy: a dedicated Pathmonk account on high-intent pages (pricing, solution pages) focused on demo requests, and a separate account on lower-intent sections like the blog focused on webinar sign-ups. This separation keeps intent signals from mixing and attribution from blurring — a practical example of what “qualified” actually means operationally: matching the conversion ask to where in the journey the visitor actually is, not where you wish they were.

FAQ

What is the difference between a qualified lead and a sales-ready lead?

A qualified lead meets the threshold criteria for your ICP and has demonstrated sufficient intent to warrant sales engagement. A sales-ready lead meets those criteria and is at a stage in their buying process where a sales conversation adds value. The distinction matters operationally: a qualified lead that’s still in early research mode may need nurture rather than an immediate discovery call. Sending sales in too early kills deals that marketing built. For a step-by-step view of how to qualify leads operationally, including the decision points between stages, see the dedicated post on that process.

How do you handle qualification in a PLG (product-led growth) motion?

In PLG, the product itself generates the primary qualification signal. The key metrics shift from behavioral web signals to in-product usage: time to activation, feature adoption depth, number of users provisioned, and upgrade intent signals (attempted access to paid features). Traditional MQL models don’t apply. Instead, PQL (Product Qualified Lead) frameworks use product usage thresholds calibrated against closed-won expansion data. BANT-style qualification is largely irrelevant in this motion; the product behavior is the qualification. See product-led growth examples for how top PLG companies operationalize this.

Should marketing or sales own the qualification criteria?

Neither should own it independently. Qualification criteria should be set jointly and validated against closed-won data owned by revenue operations. In practice, marketing tends to set MQL criteria optimized for volume, and sales tends to set SQL criteria optimized for call efficiency. Neither perspective fully captures what predicts revenue. RevOps or a dedicated GTM analytics function should own the model, with input from both sides.

Can lead scoring work without a large historical dataset?

At low data volumes (fewer than 200 closed-won deals), regression-based scoring is statistically fragile. In that case, use a rules-based model grounded in ICP criteria and known high-intent behavioral signals, and treat it explicitly as a hypothesis rather than a validated model. Revisit it as you accumulate data. Running a model with false statistical confidence is worse than acknowledging its limitations. These lead qualification tools can help automate parts of the process even at lower data volumes.

How does MQL definition vary by company stage?

At early stage (pre-Series B), ICP is often still being discovered, and MQL thresholds should be set low to avoid missing signal. At growth stage, precision matters more because sales capacity is a real constraint and poor leads have a high opportunity cost. At scale, qualification models need to handle multiple segments and motions simultaneously. The same scoring logic doesn’t apply across all stages.

Does adding more qualification gates improve pipeline quality?

Not linearly. Adding gates reduces volume but doesn’t automatically improve the quality of what passes through. Gates only improve quality if they’re set at the right thresholds, which requires validation against closed-won data. Gates set arbitrarily often filter out legitimate buyers who don’t fit the assumed profile while letting through leads that look right on paper but have low actual intent.

Key takeaways

- Qualification is a probability estimate, not a binary classification. Model it accordingly.

- BANT was designed for a different buying environment and breaks down in multi-stakeholder, non-linear B2B buying motions.

- Lead scoring models built on assumptions rather than closed-won data generate systematically biased MQL pools.

- Behavioral signals, particularly behavioral clusters and multi-session engagement patterns, predict purchase intent more accurately than profile attributes alone.

- Account-level qualification logic is required in any complex-sale environment. Contact-level scoring in isolation misses buying committee activation.

- Intent data from third-party providers adds signal for prospecting prioritization but is not reliable as a standalone qualification gate due to latency and category breadth.

- Decay logic is non-optional. A static scoring model without time-weighted signals produces stale MQL pools.

- False positive rates of 30 to 55% in enterprise MQL models are common but not inevitable. Properly validated models can bring this below 20%.

- Pre-conversion behavioral enrichment (as provided by tools like Pathmonk) fills the data gap between anonymous engagement and post-form-fill qualification.

- Over-qualification has a cost that’s harder to see than under-qualification, but it’s real and should be measured.

- MQL/SQL conversion rate as a standalone metric is insufficient for diagnosing qualification health. Decompose it by source, persona, and disqualification reason.