CRO tools are worth paying for when they either generate net-new insight you cannot otherwise access or enable you to execute controlled change at scale. Tools that only replicate what can be achieved through existing analytics, internal dashboards, or manual analysis rarely justify incremental spend.

For experienced marketing teams, the tools that consistently deliver ROI fall into five categories: behavioral analytics, experimentation platforms, session intelligence, personalization engines, and data orchestration layers. The decision is not about feature depth alone. It is about whether the tool changes your decision velocity, test coverage, segmentation precision, or revenue impact per visitor in a measurable way.

CRO software spend has increased significantly over the last five years. At the same time, conversion rates across industries have largely stagnated. Many marketing teams now operate with overlapping tools that generate reports but do not change outcomes.

This question matters now for three reasons:

- Traffic costs are rising, especially in paid channels.

- Privacy restrictions reduce targeting precision, increasing reliance on on-site optimization.

- AI-driven tooling has lowered the barrier to launching “CRO features”, making vendor claims harder to evaluate.

What has changed is not the importance of CRO. It is the economics. Buying more tools no longer compensates for weak experimentation culture or poor data integration.

What most marketers misunderstand is this: CRO tools do not improve conversion rates by default. They increase the probability of improvement if you already have:

- Clear hypotheses

- Reliable tracking

- Statistical rigor

- Operational capacity to ship changes

In this article we’ll break down which CRO tool categories actually drive measurable impact, when tools become redundant, the trade-offs between in-house and vendor solutions, and where traditional CRO tools fail.

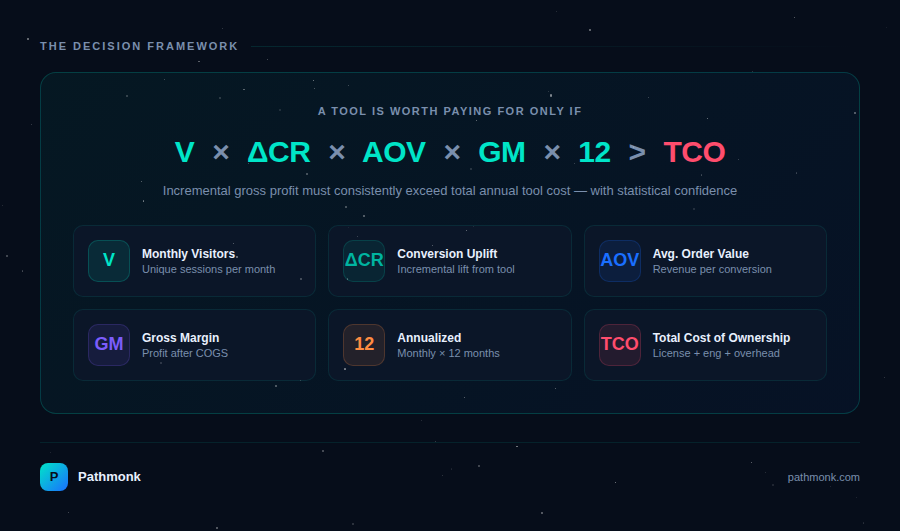

The economic framework for evaluating CRO tools

Before reviewing categories, we need a decision framework.

A CRO tool is worth paying for if: incremental profit from conversion uplift > total cost of ownership (TCO).

Total cost of ownership includes:

- License cost

- Implementation time

- Engineering resources

- Data pipeline complexity

- Experiment management overhead

- Opportunity cost

The key is isolating incremental impact.

Let:

- V = Monthly unique visitors

- CR = Conversion rate

- AOV = Average order value

- GM = Gross margin

- ΔCR = Absolute conversion uplift (new CR minus baseline CR, e.g. 0.002 for a lift from 2.0% to 2.2%)

- TCO = Total annual tool cost

Annual incremental gross profit: V × ΔCR × AOV × GM × 12
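To make the framework concrete, here is a minimal sketch of the same calculation. The inputs are hypothetical placeholders, not benchmarks, and ΔCR is treated as an absolute uplift; if you only know the relative lift, ΔCR = CR × relative lift (2.0% × 10% = 0.002).

```python
def annual_incremental_gross_profit(visitors, delta_cr, aov, gross_margin):
    """V × ΔCR × AOV × GM × 12.

    visitors: monthly unique visitors (V)
    delta_cr: absolute conversion uplift, e.g. 0.002 for 2.0% -> 2.2% (ΔCR)
    aov: average order value (AOV)
    gross_margin: gross margin as a fraction, e.g. 0.40 (GM)
    """
    return visitors * delta_cr * aov * gross_margin * 12


def is_financially_justified(visitors, delta_cr, aov, gross_margin, tco):
    """True if annual incremental gross profit exceeds total annual cost of ownership.

    tco should include license, implementation, engineering time and
    experiment management overhead, not just the subscription price.
    """
    return annual_incremental_gross_profit(visitors, delta_cr, aov, gross_margin) > tco


# Hypothetical inputs: 50,000 visitors/month, CR 2.0% -> 2.2%, $120 AOV, 40% margin.
profit = annual_incremental_gross_profit(50_000, 0.002, 120, 0.40)
print(round(profit))  # 57600
print(is_financially_justified(50_000, 0.002, 120, 0.40, tco=40_000))  # True
```

The hard part is not this arithmetic but trusting the ΔCR you feed into it, which is why the isolation problems below matter.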

If that number consistently exceeds TCO with statistical confidence, the tool is financially justified. The problem is that most tools cannot isolate ΔCR cleanly, because:

- Attribution models are inconsistent

- Experiments are underpowered

- Personalization is not randomized

- Analytics are not unified

So tool value depends heavily on implementation rigor. If you are still asking whether CRO is worth the investment at all, settle that baseline question before evaluating individual tools.

Core CRO tool categories that are actually worth paying for

To evaluate CRO tooling rigorously, each category should be assessed using the same dimensions:

- What it does

- What changes structurally

- When it is worth paying for

- When it is not

- Implementation requirements

- Common failure modes

This avoids feature-driven buying decisions and forces operational clarity.

1. Behavioral analytics platforms

Examples: Mixpanel, Amplitude, Heap, GA4 (partially), custom event-based warehouses.

What it does

Behavioral analytics platforms move beyond session-level reporting and pageviews. They operate on structured event data, allowing analysis of user actions across sessions and identities. This includes funnel analysis, cohort retention, path exploration, and event correlation modeling.

Unlike traffic analytics tools, they are built around user-level progression, not channel attribution.
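To illustrate what "user-level progression" means in practice, here is a minimal sketch of ordered funnel analysis. It assumes events have already been stitched to one user ID across sessions; the event names and data are hypothetical.

```python
from collections import defaultdict


def funnel_conversion(events, steps):
    """Count users who reach each funnel step in order, across all their sessions.

    events: iterable of (user_id, timestamp, event_name) tuples
    steps: ordered list of event names that define the funnel
    Returns a list of (step, users_reaching_step) pairs.
    """
    by_user = defaultdict(list)
    for user_id, ts, name in events:
        by_user[user_id].append((ts, name))

    reached = [0] * len(steps)
    for user_events in by_user.values():
        next_step = 0
        # Walk the user's events chronologically; advance only on the next expected step.
        for _, name in sorted(user_events):
            if next_step < len(steps) and name == steps[next_step]:
                next_step += 1
        for i in range(next_step):
            reached[i] += 1
    return list(zip(steps, reached))


# Hypothetical onboarding events, already stitched to one ID per user.
events = [
    ("u1", 1, "signup_completed"), ("u1", 5, "project_created"), ("u1", 9, "teammate_invited"),
    ("u2", 2, "signup_completed"), ("u2", 7, "project_created"),
    ("u3", 3, "signup_completed"),
    ("u4", 4, "project_created"),  # no signup seen, so u4 never enters the funnel
]
steps = ["signup_completed", "project_created", "teammate_invited"]
print(funnel_conversion(events, steps))
# [('signup_completed', 3), ('project_created', 2), ('teammate_invited', 1)]
```

Requiring steps in order is a deliberate choice: a user who creates a project without signing up is not counted as progressing through this funnel.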

What changes structurally

When implemented properly, these platforms shift CRO from surface-level optimization to behavioral diagnosis. Instead of asking “Which page converts better?”, teams can ask:

- Which event sequences predict conversion?

- Where does activation stall?

- Which segments churn early?

- Which behaviors correlate with higher LTV?

This changes prioritization. Experiments become behavior-driven rather than layout-driven. This is closely related to what behavioral data actually means in a marketing context and how it should inform decisions.

When it is worth paying for

Behavioral analytics tools are justified when:

- The product has multi-step onboarding

- Conversion spans multiple sessions

- Activation events determine monetization

- There are multiple ICPs with different behavioral patterns

- Retention impacts revenue significantly

They are particularly valuable in product-led growth, freemium SaaS, or B2B flows with extended research cycles.

When it is not

They are not necessary for:

- Single-page lead generation

- Simple brochure websites

- Very low traffic environments

- Teams without event governance discipline

In those cases, advanced segmentation adds complexity without changing decisions.

Implementation requirements

The tool only becomes valuable with:

- A well-defined event taxonomy

- Strict naming conventions

- Identity stitching across sessions

- Clearly defined primary and secondary KPIs

- Governance for tracking updates

Without these, the platform becomes a high-cost reporting interface layered on top of inconsistent data.
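Naming governance can be partly automated. A minimal sketch, assuming a hypothetical "object_action" snake_case convention and a hypothetical tracking plan, that flags both malformed names and well-formed names nobody agreed to send:

```python
import re

# Hypothetical convention: lowercase snake_case "object_action", e.g. "form_submitted".
EVENT_NAME = re.compile(r"[a-z]+(?:_[a-z]+)+")

# Hypothetical tracking plan: the only event names teams have agreed to send.
TRACKING_PLAN = {"signup_completed", "form_submitted", "checkout_started"}


def audit_event_names(observed):
    """Split observed event names into convention violations and unplanned events."""
    bad_format = sorted(n for n in observed if not EVENT_NAME.fullmatch(n))
    unplanned = sorted(
        n for n in observed if EVENT_NAME.fullmatch(n) and n not in TRACKING_PLAN
    )
    return bad_format, unplanned


bad, unplanned = audit_event_names(
    {"form_submitted", "FormSubmit", "form-submitted", "cta_clicked"}
)
print(bad)        # ['FormSubmit', 'form-submitted']
print(unplanned)  # ['cta_clicked']
```

Running a check like this against each release catches event sprawl before it reaches reports.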

Common failure modes

The most common breakdowns are:

- Event sprawl with inconsistent naming

- Redundant tracking between product and marketing

- No ownership of analytics architecture

- Using event tools for vanity reporting instead of behavioral modeling

The tool does not create insight. The data model does.

2. Experimentation platforms

Examples: Optimizely, VWO, AB Tasty, server-side frameworks, feature flag systems.

What it does

Experimentation platforms enable randomized controlled testing across traffic segments. They manage traffic allocation, variant deployment, statistical modeling, and experiment reporting. Advanced systems support server-side testing, feature flags, and progressive rollout.

The key capability is causal validation.

What changes structurally

With a mature experimentation platform, decision-making shifts from opinion or heuristics to controlled validation. It enables:

- Isolation of variable impact

- Measurement of incremental lift

- Segmented performance evaluation

- Iterative optimization cycles

It increases test velocity and enforces discipline around hypothesis documentation and outcome analysis. The principles behind proper test design, and how CRO testing drives growth through experimentation, matter as much as the platform itself.

When it is worth paying for

The economics work when:

- Monthly traffic exceeds statistical viability thresholds

- There is a structured experimentation roadmap

- Engineering can support deeper implementation

- Experiments influence revenue, not just UI polish

High-traffic ecommerce and mid-to-late stage SaaS benefit most.

When it is not

It becomes inefficient when:

- Traffic volume is insufficient to detect meaningful lift

- There is no experimentation owner

- Tests are cosmetic rather than structural

- Organizational politics override statistical outcomes

In these cases, the bottleneck is not tooling but culture and governance. This is also the core argument behind why CRO may not be worth it for small sites with low traffic.

Implementation requirements

A paid experimentation platform requires:

- Defined minimum detectable effect targets

- Sample size calculation discipline

- Pre-registered hypotheses

- Clear primary success metrics

- Segment-aware reporting
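Sample size discipline starts with a calculation done before launch. Below is a sketch using the standard normal-approximation formula for a two-sided, two-proportion test; platforms may use sequential or Bayesian methods, so their numbers can differ. The baseline rate and MDE are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_arm(baseline_cr, relative_mde, alpha=0.05, power=0.80):
    """Visitors needed per variant for a two-sided two-proportion z-test.

    baseline_cr: control conversion rate, e.g. 0.03
    relative_mde: smallest relative lift worth detecting, e.g. 0.10 for +10%
    """
    p1 = baseline_cr
    p2 = baseline_cr * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical: 3% baseline, detect a +10% relative lift (3.0% -> 3.3%).
n = sample_size_per_arm(0.03, 0.10)
print(n)  # roughly 53,000 visitors per arm
```

Halving the MDE roughly quadruples the required sample, which is why low-traffic sites struggle to detect small lifts.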

Client-side tools allow faster deployment but introduce rendering risk, such as a visible flicker of the original content before the variant loads, and support only limited personalization logic. Server-side testing is more reliable but requires engineering capacity.
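Server-side setups commonly assign users by hashing a stable identifier, so assignment needs no shared state between servers. This is a minimal sketch of that idea, not any specific vendor's algorithm; the experiment key and user ID format are hypothetical.

```python
import hashlib


def assign_variant(user_id, experiment_key, variants=("control", "treatment")):
    """Deterministically map a user to one variant of one experiment.

    Hashing the experiment key together with the user ID keeps assignment stable
    across requests and servers, and decorrelates buckets between experiments.
    """
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # 0..9999
    return variants[bucket * len(variants) // 10_000]  # equal-width slices per variant


# The same user always lands in the same variant for a given (hypothetical) experiment key.
print(assign_variant("user-42", "pricing_page_test") == assign_variant("user-42", "pricing_page_test"))  # True
```

Including the experiment key in the hash matters: without it, the same users would fall into "treatment" in every experiment, confounding results across tests.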

Common failure modes

Frequent breakdowns include:

- Underpowered experiments

- Excessive variant testing without traffic scaling

- Ignoring segment-level lift dilution

- Accepting platform-generated statistical outputs without internal validation

A testing platform is only as rigorous as the experimentation framework behind it.
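One form of internal validation is recomputing a result from raw counts. The sketch below uses a pooled two-proportion z-test, a standard fixed-horizon method; a platform using sequential or Bayesian statistics may legitimately report different numbers. The counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided pooled z-test for a difference in conversion rates.

    Returns (absolute_lift, relative_lift, z, p_value) for B versus A.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_b - p_a, (p_b - p_a) / p_a, z, p_value


# Hypothetical counts: control 600/20,000 (3.00%), variant 650/20,000 (3.25%).
abs_lift, rel_lift, z, p = two_proportion_z_test(600, 20_000, 650, 20_000)
print(f"relative lift {rel_lift:.1%}, z = {z:.2f}, p = {p:.3f}")
```

On these counts an 8.3% relative lift is not significant at the 5% level (p ≈ 0.15), a result worth checking before accepting a dashboard's "winner" label.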

3. Session intelligence tools

Examples: Hotjar, FullStory, Microsoft Clarity, Contentsquare.

What it does

Session intelligence platforms provide qualitative visibility into user interactions through recordings, heatmaps, rage click detection, and friction analysis. They surface anomalies in interaction patterns that are not visible in aggregate analytics.

Their core function is diagnostic observation, not causal measurement.
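Rage click detection is a simple heuristic. This sketch assumes illustrative thresholds (three or more clicks within one second, all within 30 pixels); each vendor uses its own definition.

```python
from math import hypot


def find_rage_clicks(clicks, min_clicks=3, window_ms=1000, radius_px=30):
    """Return the start indices of click bursts that look like rage clicks.

    clicks: list of (timestamp_ms, x, y), sorted by timestamp.
    A burst is min_clicks or more consecutive clicks within window_ms whose
    positions all lie within radius_px of the burst's first click.
    Thresholds are illustrative, not any vendor's definition.
    """
    bursts = []
    i = 0
    while i < len(clicks):
        t0, x0, y0 = clicks[i]
        j = i + 1
        while (
            j < len(clicks)
            and clicks[j][0] - t0 <= window_ms
            and hypot(clicks[j][1] - x0, clicks[j][2] - y0) <= radius_px
        ):
            j += 1
        if j - i >= min_clicks:
            bursts.append(i)
            i = j  # skip past this burst
        else:
            i += 1
    return bursts


clicks = [(0, 100, 100), (200, 102, 101), (400, 99, 98), (450, 101, 100), (5000, 400, 300), (9000, 10, 10)]
print(find_rage_clicks(clicks))  # [0]
```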

What changes structurally

They reduce blind spots in UX decision-making. Instead of relying on bounce rate alone, teams can observe:

- Field-level form abandonment

- Repeated interaction failures

- Navigation confusion

- JavaScript errors affecting conversion

They shorten time to friction identification.

When it is worth paying for

Paid tools are justified when:

- Checkout flows are complex

- Multi-step forms affect revenue

- UX friction materially impacts conversion

- The business model depends on micro-interactions

High-revenue ecommerce environments often justify deeper tools like Contentsquare.

When it is not

For small sites or low-complexity funnels, free tools provide sufficient visibility. Paying for advanced behavioral overlays rarely changes outcomes if friction is obvious. Heatmaps in particular are frequently overrated as a standalone diagnostic.

Implementation requirements

To generate value:

- Recordings must be segmented by conversion outcome

- Insights must feed directly into test hypotheses

- UX reviews should follow structured observation criteria

Without integration into experimentation workflows, session tools become anecdotal evidence generators.
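Segmenting by conversion outcome can be as simple as comparing how often a friction signal appears among converters and non-converters. A minimal sketch; the field names are hypothetical and would need mapping to your tool's export format.

```python
from collections import Counter


def friction_rate_by_outcome(sessions, signal="rage_click"):
    """Share of sessions showing a friction signal, split by conversion outcome.

    sessions: iterable of dicts with a boolean "converted" key and a set "signals".
    Field names are hypothetical.
    """
    totals, flagged = Counter(), Counter()
    for s in sessions:
        outcome = "converted" if s["converted"] else "not_converted"
        totals[outcome] += 1
        if signal in s["signals"]:
            flagged[outcome] += 1
    return {k: flagged[k] / totals[k] for k in totals}


sessions = (
    [{"converted": True, "signals": {"rage_click"}}]
    + [{"converted": True, "signals": set()}] * 3
    + [{"converted": False, "signals": {"rage_click"}}] * 3
    + [{"converted": False, "signals": set()}]
)
print(friction_rate_by_outcome(sessions))  # {'converted': 0.25, 'not_converted': 0.75}
```

A signal that is far more common among non-converters is a candidate test hypothesis, not proof that the friction causes the drop-off.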

Common failure modes

Typical misuse includes:

- Cherry-picking extreme sessions

- Generalizing from outliers

- Ignoring traffic segmentation

- Treating heatmaps as performance indicators

They support diagnosis. They do not validate solutions.

4. Personalization engines

Examples: Dynamic content systems, recommendation engines, intent-based personalization platforms.

What it does

Personalization engines modify the website experience dynamically based on visitor attributes, behavior, or predicted intent. This can include CTA switching, messaging adjustments, testimonial rotation, or contextual funnel routing.

The advanced layer involves real-time intent modeling rather than static rule-based segmentation.

What changes structurally

Personalization shifts optimization from average-based improvement to segment-responsive performance. Instead of testing one universal variant, the system adapts per visitor cluster.

This increases coverage across heterogeneous traffic and reduces reliance on manually managed segment experiments. Website personalization’s direct impact on sales makes this one of the higher-leverage categories when implemented correctly.

When it is worth paying for

Personalization is economically justified when:

- Traffic consists of multiple ICPs

- Intent varies significantly by channel

- Buying stages differ across segments

- Static messaging creates performance dilution

The value scales with traffic diversity.

When it is not

It underperforms when:

- Traffic is homogeneous

- Conversion paths are simple

- Volume is too low to model behavior reliably

- There is no defined primary conversion metric

Personalization without segmentation precision creates noise.

Implementation requirements

Effective deployment requires:

- Reliable real-time behavioral inputs

- Segment validation against downstream metrics

- Integration with experimentation logic

- Clear revenue attribution

Without randomized holdouts, perceived lift can be inflated.
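With a randomized holdout in place, lift should be reported with its uncertainty. This sketch compares revenue per visitor between personalized traffic and a holdout using a percentile bootstrap; the data are made up, and it assumes the holdout was randomly assigned.

```python
import random
from statistics import fmean


def rpv_lift_with_ci(treated, holdout, n_boot=1000, seed=0):
    """Relative revenue-per-visitor lift of personalized traffic over a randomized
    holdout, with a percentile-bootstrap 95% interval.

    treated, holdout: per-visitor revenue (0.0 for visitors who did not buy).
    """
    rng = random.Random(seed)
    point = fmean(treated) / fmean(holdout) - 1
    boots = []
    for _ in range(n_boot):
        t = rng.choices(treated, k=len(treated))
        h = rng.choices(holdout, k=len(holdout))
        mean_h = fmean(h)
        if mean_h > 0:  # skip the (rare) resample with no holdout revenue
            boots.append(fmean(t) / mean_h - 1)
    boots.sort()
    lo = boots[int(0.025 * len(boots))]
    hi = boots[int(0.975 * len(boots)) - 1]
    return point, (lo, hi)


# Hypothetical data: 1,000 visitors per group, $100 orders, 6.0% vs 5.0% buying.
treated = [100.0] * 60 + [0.0] * 940
holdout = [100.0] * 50 + [0.0] * 950
point, (lo, hi) = rpv_lift_with_ci(treated, holdout)
print(f"RPV lift {point:.0%}, 95% interval {lo:.0%} to {hi:.0%}")
```

On these numbers the point estimate is +20%, but the interval includes zero, so the lift is not yet established.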

Common failure modes

Common issues include:

- Rule-based personalization mistaken for predictive modeling

- Over-segmentation with insufficient data

- Conflicting personalization layers across tools

- No downstream revenue tracking

Personalization must connect to revenue per visitor, not just click-through rate.

5. Data orchestration and CDPs

Examples: Segment, RudderStack, mParticle, warehouse-native pipelines.

What it does

Data orchestration platforms unify behavioral, CRM, attribution, and lifecycle data into structured pipelines. They enable consistent event streaming across marketing, product, and analytics tools.

Their value lies in data consistency and distribution, not conversion improvement directly.

What changes structurally

A CDP reduces fragmentation. It ensures:

- Consistent event definitions

- Centralized identity resolution

- Reliable audience activation

- Unified segmentation logic

Without orchestration, CRO tools operate on partial data.

When it is worth paying for

Investment is justified when:

- Multiple tools require synchronized behavioral data

- Advanced personalization relies on CRM attributes

- Lifecycle marketing spans multiple channels

- Attribution and CRO require shared data layers

Enterprise B2B environments benefit most.

When it is not

For early-stage companies with simple stacks, a CDP often adds complexity without ROI. If there are fewer than five integrated systems, orchestration may be premature.
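One way to see why the number of systems matters: under the worst-case assumption that every system needs data from every other, direct integrations grow quadratically while a central pipeline grows linearly. Real stacks rarely need every pair, so treat this as an upper bound; the five-system threshold remains a heuristic.

```python
def point_to_point_links(n_systems):
    """Pairwise integrations if every system syncs directly with every other."""
    return n_systems * (n_systems - 1) // 2


def hub_links(n_systems):
    """Integrations if every system connects once to a central pipeline."""
    return n_systems


for n in (3, 5, 8):
    print(n, point_to_point_links(n), hub_links(n))
# 3 3 3
# 5 10 5
# 8 28 8
```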

Implementation requirements

To generate value:

- Event schema must be standardized

- Data governance ownership must be defined

- Latency must meet real-time personalization needs

- Identity stitching must be reliable

A CDP without governance creates additional inconsistency.
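Identity stitching at its simplest is a union-find problem: identifiers observed together are merged into one profile. This is a minimal deterministic sketch with hypothetical identifier formats; production CDPs add merge rules, conflict handling, and probabilistic matching that it does not cover.

```python
class IdentityGraph:
    """Minimal union-find that merges identifiers (cookie IDs, emails, CRM IDs)
    observed together into one profile."""

    def __init__(self):
        self.parent = {}

    def _find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def link(self, a, b):
        """Record that identifiers a and b belong to the same person."""
        root_a, root_b = self._find(a), self._find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a

    def same_person(self, a, b):
        return self._find(a) == self._find(b)


g = IdentityGraph()
g.link("anon:123", "email:jane@example.com")  # logged in on device 1
g.link("anon:456", "email:jane@example.com")  # logged in on device 2
print(g.same_person("anon:123", "anon:456"))  # True
print(g.same_person("anon:123", "anon:789"))  # False
```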

Common failure modes

Frequent breakdowns include:

- Treating the CDP as a strategy rather than infrastructure

- Over-engineering pipelines before validating use cases

- Building audience segments that are never activated

Orchestration amplifies existing discipline. It does not create it.

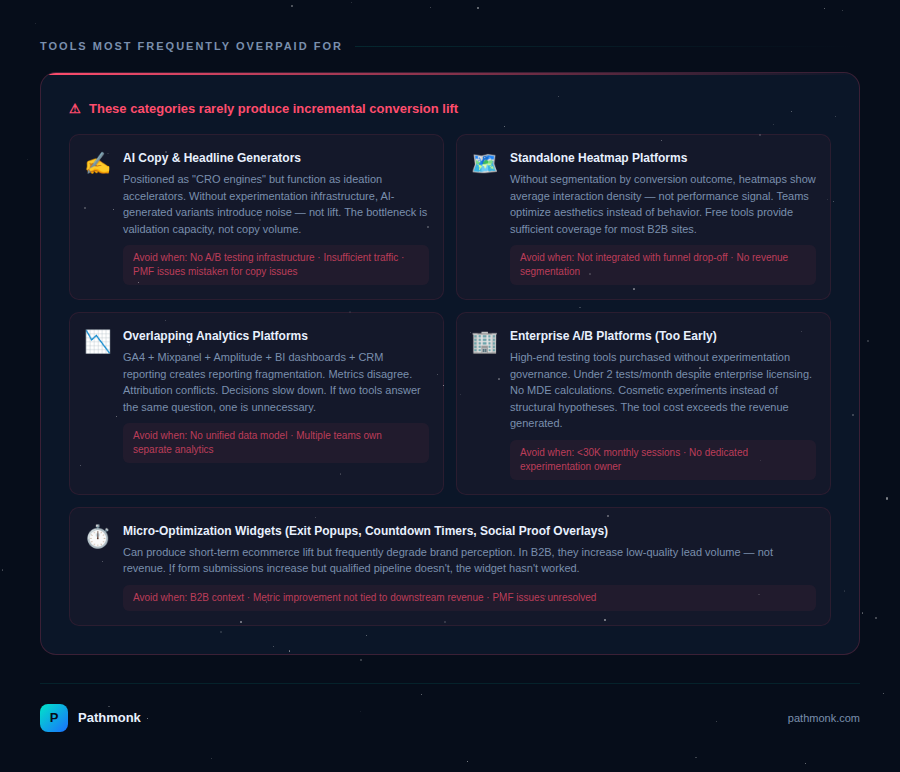

CRO tools that are often overpaid for

Not every tool marketed under the CRO category produces incremental conversion lift. Some generate insight that could be derived elsewhere. Others create reporting layers without changing decision quality. In many stacks, overpayment comes from redundancy, not from absolute cost.

Below are the categories most frequently overvalued relative to their structural impact.

1. AI copy and headline generators positioned as “CRO engines”

Many AI copy platforms position themselves as conversion optimization tools because they can generate multiple variants of headlines, CTAs, or value propositions. In practice, most of these tools function as ideation accelerators rather than optimization systems.

Generating variants is not equivalent to improving conversion rate. The value of a headline depends on audience alignment, traffic source intent, funnel stage, and downstream qualification. Without controlled testing and statistically valid evaluation, AI-generated copy introduces noise rather than improvement.

In experienced teams, copy iteration is rarely the bottleneck. The constraint is validation capacity and prioritization logic. If a team already runs structured experiments, AI-generated variants can accelerate creative throughput. If they do not, the tool becomes a productivity enhancer for content teams, not a CRO lever.

These tools are often overpaid for when:

- There is no experimentation infrastructure to validate outputs.

- Traffic volume is insufficient to test multiple variants.

- Messaging misalignment is structural rather than phrasing-based.

- Product-market fit issues are mistaken for copy issues.

In these cases, incremental revenue lift does not justify recurring subscription cost.

2. Standalone heatmap tools without behavioral integration

Heatmaps and scroll maps are useful for visualizing interaction concentration. However, many organizations purchase advanced heatmap platforms that are not integrated with segmentation logic or event analytics.

A heatmap without segmentation shows average interaction density. It does not distinguish between converting and non-converting users. It does not isolate friction points across segments. It does not reveal causality.

The result is often aesthetic optimization rather than performance optimization. Teams move elements based on visual density rather than measured behavioral impact. There are also strong arguments for a heatmap alternative when behavioral integration is lacking.

When session intelligence is not connected to:

- Funnel drop-off analysis,

- Experimentation frameworks,

- Revenue segmentation,

the tool becomes observational rather than actionable.

In high-traffic ecommerce with complex layouts, advanced visual analytics can justify cost. In most B2B environments, especially those with simple page structures, free tools provide sufficient qualitative visibility.

Overpayment occurs when visual insight is mistaken for performance leverage.

3. Overlapping analytics platforms

A common scenario in mid-sized marketing organizations is the coexistence of multiple analytics tools: GA4 for traffic, Mixpanel for product events, Amplitude for retention analysis, internal BI dashboards for revenue, and CRM reporting for lifecycle metrics.

In theory, each tool serves a distinct purpose. In practice, there is often redundancy in funnel reporting and event analysis. When multiple teams own separate analytics platforms without a unified data model, reporting fragmentation increases.

The financial cost is visible. The operational cost is less obvious. Decision-making slows because metrics disagree. Attribution differs between systems. Experiment results cannot be reconciled with CRM data.

Overpayment here is not about tool quality. It is about tool overlap. If two systems answer the same question with different schemas, one of them is unnecessary. This is a broader problem with even the best marketing analytics tools: owning several of them simultaneously often creates more noise than clarity.

The correct approach is to define:

- The system of record for revenue.

- The system of record for behavioral events.

- The experimentation validation layer.

Any tool outside those three layers must justify its existence through unique insight, not convenience.

4. Enterprise experimentation platforms without experimentation maturity

High-end experimentation tools are frequently purchased by organizations that lack experimentation governance. These platforms provide advanced statistical models, segmentation layers, and server-side testing capabilities. However, without a structured experimentation roadmap and ownership, their capabilities remain unused.

Common patterns include:

- Fewer than two tests per month despite enterprise licensing.

- Cosmetic UI experiments rather than structural hypotheses.

- No documented minimum detectable effect calculations.

- No segment-level analysis beyond overall lift.

In such cases, the platform cost exceeds the incremental revenue generated. The issue is not the tool’s sophistication. It is the absence of operational maturity.

For companies below a certain traffic threshold or without dedicated experimentation leadership, lightweight or in-house testing frameworks may generate higher ROI.

Paying for advanced statistical infrastructure without test velocity creates negative return on tooling investment.

5. Micro-optimization widgets with unclear revenue linkage

Urgency counters, countdown timers, social proof widgets, and similar overlays are often sold as quick conversion wins. While they can produce short-term lift in certain contexts, they frequently degrade brand perception or distort user behavior. The evidence on whether exit-intent popups are still effective or simply outdated is increasingly mixed.

In B2B, these tactics often increase low-quality lead volume rather than revenue. If form submissions increase but qualified pipeline does not, the widget has not improved performance.

Overpayment occurs when surface-level metric improvement is not connected to downstream qualification or revenue per visitor.

The evaluation criteria must always return to incremental profit, not micro-metric shifts.

Implementation decision tree

Before buying any CRO tool, answer:

- Do we have reliable event tracking?

- Do we have a defined primary KPI?

- Can we run statistically valid experiments?

- Do we have traffic volume to justify testing?

- Is this tool replacing manual work or duplicating it?

- Does it integrate with our existing data layer?

- Can we measure incremental lift clearly?

If more than three answers are no, fix infrastructure before buying.
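The decision rule above can be encoded as a simple checklist. A minimal sketch; the only interpretation added is that "yes" to the replacing-or-duplicating question means the tool replaces manual work.

```python
CHECKLIST = (
    "Do we have reliable event tracking?",
    "Do we have a defined primary KPI?",
    "Can we run statistically valid experiments?",
    "Do we have traffic volume to justify testing?",
    # "Yes" here means the tool replaces manual work rather than duplicating it.
    "Is this tool replacing manual work or duplicating it?",
    "Does it integrate with our existing data layer?",
    "Can we measure incremental lift clearly?",
)


def should_fix_infrastructure_first(answers):
    """answers maps each checklist question to True (yes) or False (no).

    Returns True when more than three answers are no.
    """
    missing = [q for q in CHECKLIST if q not in answers]
    if missing:
        raise ValueError(f"Unanswered questions: {missing}")
    no_count = sum(1 for q in CHECKLIST if not answers[q])
    return no_count > 3


answers = {q: True for q in CHECKLIST}
answers["Do we have reliable event tracking?"] = False
answers["Can we run statistically valid experiments?"] = False
print(should_fix_infrastructure_first(answers))  # False (two "no" answers)
```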

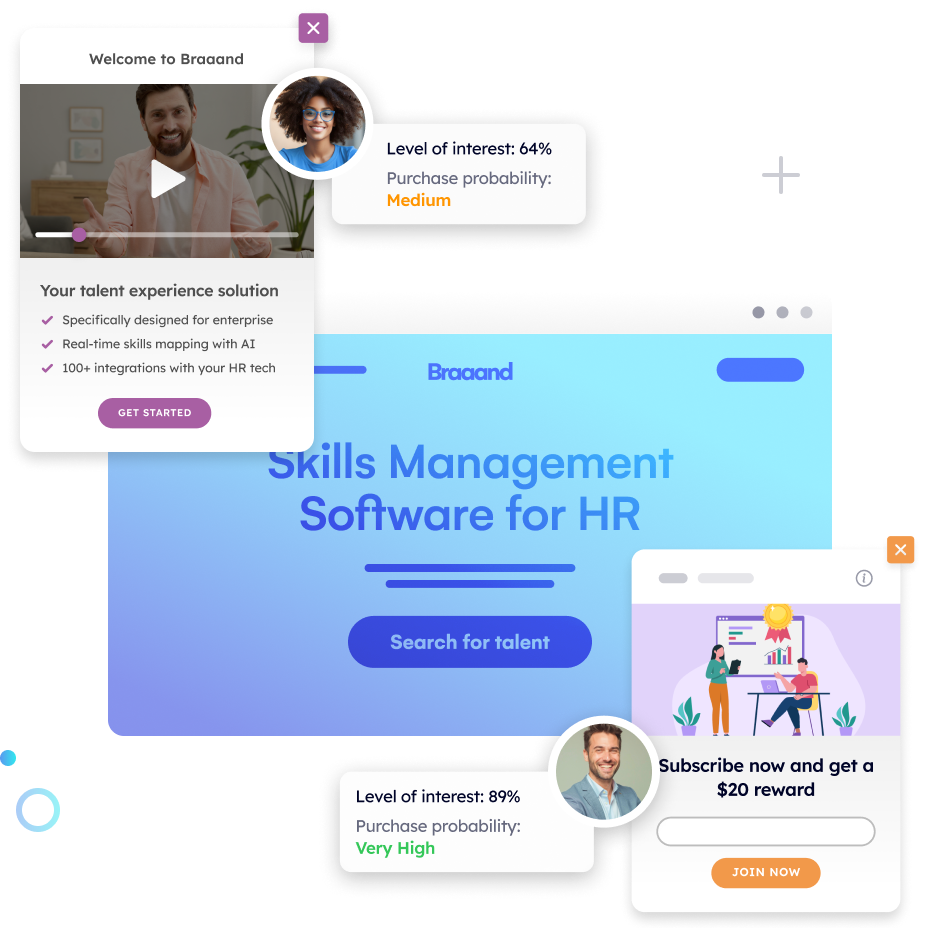

Why Pathmonk is the only CRO tool you need in your stack

If you strip CRO down to its economics, a tool is worth paying for if it increases revenue per visitor without increasing operational complexity. Most tools either improve diagnosis or improve validation. Very few improve performance directly at scale without multiplying workload.

Pathmonk sits in a different position in the stack. It does not just help you analyze behavior or test variants. It changes how your website adapts to visitor intent in real time, without requiring rule-building, redesigns, or constant experiment management.

As we’ve seen, even mature teams face the same constraints:

- Traffic is heterogeneous across channels and ICPs.

- Intent varies significantly within the same campaign.

- Manual segmentation does not scale.

- A/B tests are sequential and limited by traffic volume.

- Static pages optimize for averages.

You can build manual personalization rules. You can run segmented experiments. But coverage remains limited. There are simply too many combinations of visitor attributes, traffic sources, and behavioral signals to manage manually.

As a result, most companies under-optimize mid-intent traffic. High-intent visitors convert anyway. Low-intent visitors bounce. The real opportunity sits in the middle, where small contextual adjustments can change outcomes. That is exactly the segment static CRO struggles to handle efficiently. It is also why visitors who aren’t ready to book a call represent one of the most underleveraged segments in most funnels.

Pathmonk introduces automated, intent-based personalization at the micro-experience level.

Technically, it:

- Analyzes in-session behavioral signals in real time.

- Predicts visitor intent based on engagement patterns.

- Dynamically adapts specific elements of the experience.

The key point is that you do not define manual rules like “If traffic source = LinkedIn and page = pricing then show variant B.” The system continuously interprets behavior and adjusts the experience accordingly.

One of the strongest arguments for adopting Pathmonk is that it does not require structural overhauls. You do not need to:

- Rebuild your website.

- Redesign core pages.

- Rewrite your entire messaging framework.

- Replace your analytics stack.

- Replace your experimentation platform.

- Create dozens of personalization rules.

It works as a layer that integrates with your existing site and tools.

Operationally, that reduces adoption friction. Financially, it reduces total cost of ownership relative to tools that require heavy engineering involvement.

FAQ on CRO tools worth integrating

At what traffic volume does an experimentation tool become viable?

With traditional CRO tools, experimentation generally becomes viable above 30,000 monthly sessions, assuming a typical minimum detectable effect between 5 and 10 percent relative lift. Below that, minimum detectable effect sizes become too large to isolate incremental gains within reasonable timeframes. If your primary conversion rate is low, required sample sizes increase further, extending experiment duration. In low-traffic environments, qualitative research and structural improvements often produce better ROI than formal multivariate testing programs. If you’re using Pathmonk, we recommend at least 10,000 visitors/month, though you can see results with much lower traffic.
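To check any traffic threshold against your own numbers, you can invert the sample-size calculation and estimate the smallest relative lift a 50/50 test could detect. This rough sketch treats each session as an independent visitor, uses the baseline variance for both arms, and assumes 52/12 weeks per month; all inputs are placeholders.

```python
from math import sqrt
from statistics import NormalDist

WEEKS_PER_MONTH = 52 / 12  # about 4.33


def min_detectable_relative_lift(monthly_sessions, baseline_cr, weeks=4, alpha=0.05, power=0.80):
    """Approximate smallest relative lift a 50/50 A/B test can detect.

    Treats every session as an independent visitor and uses the baseline
    variance for both arms (a normal approximation).
    """
    n_per_arm = monthly_sessions * (weeks / WEEKS_PER_MONTH) / 2
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    abs_mde = z * sqrt(2 * baseline_cr * (1 - baseline_cr) / n_per_arm)
    return abs_mde / baseline_cr


# Hypothetical 3% baseline conversion rate, four-week test.
for sessions in (10_000, 30_000, 100_000):
    print(sessions, f"{min_detectable_relative_lift(sessions, 0.03):.0%}")
```

The result is sensitive to the baseline rate and test duration: quadrupling traffic only halves the detectable lift, and a lower conversion rate pushes it up.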

Can GA4 replace a behavioral analytics platform?

Partially, for basic funnel visualization and high-level event tracking. However, GA4 lacks the depth of cohort retention analysis, behavioral path modeling, and flexible event segmentation that tools like Pathmonk, Mixpanel or Amplitude provide. Its reporting model is still heavily session-oriented rather than user-lifecycle oriented. For product-led growth or multi-session conversion journeys, dedicated behavioral analytics platforms typically offer more actionable granularity and cleaner user-level analysis. The analytics landscape beyond GA4 is also shifting quickly.

Is AI-based CRO reliable without large datasets?

Predictive systems improve with volume and behavioral diversity. Low-traffic sites may not generate sufficient signal density for strong intent modeling or stable prediction accuracy. In such cases, AI-driven systems may revert to simpler heuristic logic. Reliability increases when models are trained on large datasets, retrained frequently, and validated against downstream business metrics rather than just engagement signals.

Should small SaaS companies invest in enterprise CRO platforms?

Only if traffic volume, experimentation cadence, and engineering resources justify it. Enterprise platforms often include advanced features such as server-side testing, feature flag management, and audience layering that smaller teams may not fully utilize. For early-stage SaaS, disciplined event tracking, qualitative research, and focused hypothesis testing typically produce stronger returns than expensive tooling. Tooling sophistication should follow operational maturity, not precede it.

How do you measure the true ROI of a CRO tool?

True ROI is measured through incremental profit, not just conversion rate lift. This requires connecting experiment or personalization exposure to revenue per visitor, contribution margin, and downstream retention. Controlled holdout groups or pre/post comparisons with traffic normalization are necessary to isolate impact. Without statistical isolation, tool performance may be confounded by seasonality, campaign changes, or channel mix shifts. The same incremental-profit framing is also the most practical way to sell CRO ROI internally.

Can multiple CRO tools conflict with each other?

Yes. Overlapping personalization layers, competing scripts, or duplicated audience logic can create inconsistent experiences and unreliable measurement. For example, running a client-side experimentation tool alongside a separate personalization engine without coordination can result in variant contamination. A clear hierarchy of control is required, along with defined ownership of data and experiment governance. Tool stacking without orchestration reduces clarity rather than improving performance.

Key takeaways

- A CRO tool is worth paying for only if it increases incremental profit, not just conversion rate or engagement metrics.

- Behavioral analytics is foundational in multi-step or multi-session funnels, but only when event tracking is disciplined and tied to revenue outcomes.

- Experimentation platforms justify their cost when traffic volume, statistical rigor, and experimentation velocity are high enough to produce reliable lift.

- Session intelligence tools are diagnostic, not causal. Their value depends on integration into a structured testing and prioritization framework.

- Personalization creates ROI when traffic is heterogeneous and intent varies significantly. It underperforms in homogeneous or low-volume environments.

- Many companies overpay due to tool overlap, premature infrastructure investment, or lack of governance, not because the tools themselves lack capability.

- The most defensible evaluation framework is simple: Does this tool measurably increase revenue per visitor without adding operational complexity that exceeds its lift?