At under 100k monthly visitors, the traditional CRO playbook breaks down. Classic split-testing tools like Optimizely and VWO cannot reach statistical significance inside a useful testing window, which means most of the advice written for enterprise retailers does not apply. The stack that actually works at this volume replaces statistical testing with signal extraction and AI-driven personalization. It is built around four layers: qualitative behavior tools (Microsoft Clarity or Hotjar), AI personalization engines that do not need significance thresholds (Pathmonk), intent capture and lead recovery (Klaviyo, OptiMonk), and on-site search (Klevu or Algolia).

A budget of $400 to $800 per month covers the full working stack for most sub-100k stores. Tools that charge per MTU, require enterprise contracts, or depend on A/B test volumes rarely earn their keep at this traffic level. Paying for Optimizely Web Experimentation on a store doing 30k monthly visitors is a defensible but expensive mistake. Clarity (free), Pathmonk, and Klaviyo make up the stack that actually moves conversion rate at this volume. For the broader picture across all traffic tiers, see our full rundown of the CRO tools worth paying for.

The average e-commerce store converts between 1.4% and 3%, with top performers above 5%. At 100k monthly visitors, a single-point conversion rate improvement is 1,000 additional orders a month. At 30k visitors, it is 300. The revenue math works fine at either volume. The testing math does not.

Most CRO advice online assumes you can reach statistical significance on a split test in under thirty days. With a 2% baseline conversion rate, detecting a 10% relative lift at 95% confidence and 80% power requires roughly 80,000 visitors per variant, or about 160,000 total. A store doing 30k monthly visitors needs more than five months of traffic at 100% exposure to power one two-variant test. If the true lift is smaller than 10%, the test never reaches significance inside a useful window at all.

This is the constraint the stack has to solve. Enterprise testing tools are a poor fit below 100k. Behavior analytics that explain why visitors bounce are valuable at any volume. Personalization engines that work at the individual visitor level sidestep the significance problem entirely by adapting content to each session instead of validating a winning variant across months of traffic.

What changes below 100k visitors is the method, not the ambition. You still need to improve conversion rate. You just cannot do it through the A/B testing loop that Amazon and Booking.com are famous for. This article covers what replaces it, which tools fit which tier, and which expensive mistakes to avoid. The central frame is what I’ll call the Significance Wall: the mathematical threshold below which split testing stops being a viable CRO method.

Why traditional A/B testing fails below 100k monthly visitors

At sub-100k traffic, the Significance Wall turns split testing into a coin-flip exercise dressed up as rigor.

Run the math. With an industry-average 2% conversion rate, detecting a 10% relative lift (conversion from 2.0% to 2.2%) requires roughly 80,000 visitors per variant at 95% confidence and 80% statistical power, using the standard two-proportion sample size formula. Detecting a 5% relative lift requires more than 300,000 per variant. For a 20% lift, about 21,000 per variant is enough.

Translated into actual testing cadence:

- 20k monthly visitors: even a 20% lift takes about two months of full traffic to detect, and nothing smaller is realistic

- 50k monthly visitors: roughly one test per month at a 20% detection threshold; a 10% threshold takes more than three months

- 100k monthly visitors: about two tests per month at a 20% threshold; a 10% threshold takes roughly seven weeks
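To run this math for your own store, here is a minimal Python sketch of the standard two-sided, two-proportion sample size formula. The function names are illustrative, and the defaults (95% confidence, 80% power) mirror the assumptions stated above. Other calculators, Evan Miller's included, use slightly different variance terms, so expect their outputs to differ by a few percent.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline, relative_lift, confidence=0.95, power=0.80):
    """Visitors needed in EACH variant of a two-sided, two-proportion test."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def months_to_power_test(monthly_visitors, baseline, relative_lift):
    """Months of 100% traffic exposure needed for one two-variant test."""
    return 2 * visitors_per_variant(baseline, relative_lift) / monthly_visitors


# 2% baseline, three candidate lifts, a 30k/month store.
for lift in (0.05, 0.10, 0.20):
    n = visitors_per_variant(0.02, lift)
    months = months_to_power_test(30_000, 0.02, lift)
    print(f"{lift:.0%} lift: {n:,} per variant, {months:.1f} months at 30k/month")
```

Halving the lift you want to detect roughly quadruples the traffic you need, which is why small, realistic lifts are the first to fall out of reach.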

Most real CRO improvements produce 3-15% lifts, not 20%+. Tests at the low end of that range are the ones worth running, and they are exactly the ones the Significance Wall blocks. Running them anyway produces two failure modes: either the test runs so long that external factors (season, campaign mix, traffic source shifts) invalidate the comparison, or the team calls a winner before significance and scales a false positive.

Microsoft research published by Ronny Kohavi found that even at Microsoft’s traffic scale, roughly one-third of controlled experiments produce positive results, one-third negative, one-third inconclusive. At enterprise traffic that is a known cost of experimentation. At sub-100k traffic it means the testing program produces almost no validated learning. CRO at low traffic is still worth doing, but the method has to change.

The secondary problem is pricing. VWO Testing starts around $199/month on Starter and climbs fast; AB Tasty is quote-based and typically lands above $1,500/month; Optimizely Web Experimentation starts around $36,000/year. Spending that on a tool that runs two or three tests per year is unjustifiable. Enterprise testing tools are priced against enterprise traffic volumes, and the pricing breaks down the same way the statistics do.

The conclusion is not that CRO does not work below 100k visitors. It is that split testing as the primary method does not work at that scale. This is not a new observation: the end of A/B testing as we know it has been visible in CRO practitioner data for years, and the sub-100k tier has felt it first. Reframing CRO around signal extraction and personalization reopens the category for stores that enterprise tools have effectively locked out. More on this in the latest innovations shaping CRO.

The four tool categories that actually work at low traffic

The working sub-100k stack has four layers, and each one compensates for a different gap that low traffic creates.

Each category serves a different cognitive job. You cannot substitute one for another, and removing any one of them creates a visible hole in the CRO program. For a broader category survey that goes wider than the four-layer model, see the top e-commerce conversion optimization tools.

Layer 1: Qualitative behavior tools (heatmaps, session replay, surveys)

What they do: show you what visitors actually do on the site. Click maps show where attention concentrates and where it misses. Session replay captures scroll behavior, hesitation, broken mobile interactions, and checkout drop-offs. On-site surveys capture the visitor’s own explanation of why they did not convert.

Why they matter more at low traffic: when you cannot test your way to insight, you have to watch your way there. The job of a session replay tool at 30k monthly visitors is to generate hypotheses that are worth acting on without a test, because you have seen the same failure pattern in five separate session recordings. Most of those hypotheses end up being UX problems rather than copy problems, which is why session replay is usually more useful than click maps at this stage.

Best tools by price band:

- Microsoft Clarity (free, unlimited pageviews). Click maps, session replay, dead clicks, rage clicks, scroll depth. No sampling. The obvious starting point.

- Hotjar ($32 to $171/month). Tighter UX than Clarity, better surveys, feedback widgets, funnel reporting. Worth paying for when Clarity’s filtering gets thin.

- FullStory (enterprise, quote-based). Overkill at sub-100k.

At this traffic level, Clarity plus occasional surveys is sufficient. Most teams should not pay for a heatmap tool until they have exhausted what Clarity shows them. Why heatmaps alone are not enough covers the gap that session replay leaves even when you use it well.

Layer 2: AI personalization engines

What they do: adapt the on-page experience to each visitor in real time based on behavior, source, and inferred intent. Instead of testing variant A against variant B and waiting for significance, the engine serves a different experience to each session and learns which ones convert across thousands of micro-decisions.

Why they matter more at low traffic: personalization does not require statistical significance on a page-level test. It works at the visitor level, so the learning loop closes in hours instead of months. For a store doing 30k monthly visitors, this is the single most consequential category in the stack.

Best tools by price band:

- Pathmonk (from $450/month for up to 20k monthly pageviews, scales by traffic). Intent-based microexperiences that adapt to each visitor’s stage (awareness, consideration, decision). Cookieless. Plug-and-play on Shopify, WooCommerce, Magento, Prestashop. Built around the sub-100k tier.

- Rebuy ($99 to $749/month). Product recommendations and upsell engine focused on Shopify. Strong for cart and post-purchase surfaces.

- Nosto (quote-based, typically $1,000+/month). Full personalization platform covering product recommendations, content personalization, and merchandising. Strong but generally over-scoped for sub-100k.

- Dynamic Yield (enterprise). Excellent but priced for stores with 500k+ monthly visitors.

Pathmonk and Rebuy cover different parts of the journey. Pathmonk handles awareness and consideration stage personalization through microexperiences. Rebuy specializes in cart and post-purchase cross-sells. They are complementary, not competitive, and most sub-100k Shopify stores benefit from running both. More on how website personalization drives higher sales, the role of AI in modern CRO, and why real-time personalization works in a cookieless environment. The underlying trend is hyper-personalization as the dominant direction for B2C and DTC marketing.

Layer 3: Intent capture and lead recovery

What they do: identify visitors showing buying intent who are about to leave, and recover them through email, SMS, or on-site capture. The main formats are abandoned cart flows, browse abandonment flows, exit-intent overlays, and win-back flows. The underlying signal across all four is behavioral intent data, which is why most of these tools end up converging on similar trigger conditions.

Why they matter more at low traffic: conversion rate is only half the equation. At sub-100k traffic, the visitors you have are expensive per head. Recovering 20% of abandoned carts at 30k visitors is proportionally more valuable than recovering them at 300k, because the blended CAC per converted order is higher. Email recovery is also cheap to run: the commonly cited benchmark for e-commerce email is a $36 return on every $1 spent.

Best tools by price band:

- Pathmonk ($450+/month, scales with traffic). AI-powered website personalization. Identifies high-intent visitors in real time and adapts the experience to convert them before they leave. Effective at low traffic because the triggers fire at the individual session level, not the test level.

- Klaviyo ($20+/month, scales with contact list size). The default email and SMS platform for Shopify. Abandoned cart, browse abandonment, post-purchase, win-back flows.

- Attentive (enterprise, quote-based). SMS-focused. Typically only economic above $500k annual e-commerce revenue.

- Justuno ($39 to $399/month). Popup platform. Solid mid-tier alternative to OptiMonk.

On exit-intent specifically: the format has aged badly in how most stores use it, but it still works when triggered on intent signals rather than time-on-page. Most sub-100k stores are using exit popups wrong, firing them on every visitor instead of only on those showing abandonment signals. The most effective exit-intent strategy is not a popup at all.

Layer 4: On-site search and merchandising

What they do: improve how visitors find products inside the catalog. Typeahead, synonym handling, relevance ranking, no-result fallbacks, out-of-stock redirects, merchandising rules.

Why they matter more at low traffic: on-site searchers convert at 2-6x the site average according to Klevu and Algolia benchmark data. If 15% of your visitors use search and your search converts at 4%, you are leaving money on the table every time search returns zero results or puts the wrong product first. Sub-100k stores underinvest here because they assume their catalog is too small to need it. Usually that is wrong: the smaller the catalog, the more zero-result queries feel like dead ends.

Best tools by price band:

- Klevu ($79 to $449/month on Shopify). Machine-learning-based search built for product catalogs. Strong natural-language query handling.

- Algolia (from $500/month, usage-based). Enterprise-grade search. Overbuilt for most sub-100k stores.

- Searchanise ($19 to $299/month). Entry-level option for small Shopify stores.

- Shopify Search & Discovery (free, native). Baseline. Worth activating before paying for anything else.

Order of operations at low traffic: start with Shopify’s native search, measure zero-result rate and click-through. If zero-result rate exceeds 10%, upgrade to Searchanise or Klevu. The quality of search results matters most when they land the visitor on a well-structured product detail page that can close the sale on its own.
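The measurement step above can be sketched in a few lines of Python. The `SearchEvent` shape is hypothetical: export whatever your search tool logs and map it to these three fields. The 10% threshold comes from the rule of thumb above.

```python
from dataclasses import dataclass


@dataclass
class SearchEvent:
    query: str
    results_count: int  # products returned for the query
    clicked: bool       # whether the visitor clicked any result


def search_health(events):
    """Return (zero_result_rate, click_through_rate) for a list of SearchEvent."""
    if not events:
        return 0.0, 0.0
    zero = sum(1 for e in events if e.results_count == 0)
    clicks = sum(1 for e in events if e.clicked)
    return zero / len(events), clicks / len(events)


def should_upgrade_search(events, max_zero_result_rate=0.10):
    """True when the zero-result rate exceeds the upgrade threshold."""
    zero_rate, _ = search_health(events)
    return zero_rate > max_zero_result_rate


# Made-up sample log, for illustration only.
log = [
    SearchEvent("yoga mat", 4, True),
    SearchEvent("yoga matt", 0, False),  # misspelling with no synonym handling
    SearchEvent("resistance band", 7, False),
    SearchEvent("travel case", 0, False),
    SearchEvent("door anchor", 2, True),
]
zero_rate, ctr = search_health(log)
print(f"zero-result rate {zero_rate:.0%}, click-through {ctr:.0%}")
print("upgrade search:", should_upgrade_search(log))
```

Reviewing the zero-result queries themselves is usually as useful as the rate: misspellings and synonyms show up immediately.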

The sub-100k CRO stack by traffic tier

Different traffic bands need different stack compositions, and the right tool at 15k visitors is the wrong tool at 80k.

Below is the pragmatic recommendation by tier, built against actual tool costs and actual volume constraints. For a structural view of what every stack at any tier should deliver, the conversion rate optimization checklist is the right companion read.

10k to 30k monthly visitors (earliest stage)

- Behavior: Microsoft Clarity (free)

- Personalization: skip or start with Pathmonk Starter when approaching 20k

- Intent capture: Klaviyo (free under 250 contacts, then $20 to $45/month)

- Search: Shopify native or Searchanise ($19/month)

- Total: $0 to $150/month

Priority at this tier is qualitative learning and basic email flows. Do not pay for tools that need traffic to work. Put the budget into building a working abandoned-cart and welcome flow in Klaviyo.

30k to 60k monthly visitors (growth tier)

- Behavior: Clarity (free) + Hotjar Plus ($32/month)

- Personalization: Pathmonk Growth ($450/month, scales with pageviews)

- Intent capture: Klaviyo ($45 to $150/month) + OptiMonk ($39/month)

- Search: Klevu ($79/month) or Searchanise ($99/month)

- Total: $640 to $850/month

This is the tier where personalization pays off hardest. It replaces the A/B tests you cannot run. It is also where the cost of ignoring conversion rate starts to compound.

60k to 100k monthly visitors (scale-ready tier)

- Behavior: Hotjar Business ($80/month) + Clarity

- Personalization: Pathmonk Growth or Growth+ ($450 to $900/month depending on pageview band)

- Intent capture: Klaviyo ($150 to $400/month) + Pathmonk; consider Attentive for SMS if annual revenue is above $500k

- Search: Klevu ($249/month)

- Total: $1,100 to $1,700/month

At this tier, occasional A/B testing becomes defensible (two to three tests per month on high-impact pages like PDP or checkout). Adding VWO or Convert is justifiable, but still not the backbone of the program. The backbone is still personalization plus qualitative research.

Common mistakes when building the sub-100k stack

Most sub-100k stores are not held back by budget; they are held back by buying the wrong tools for their traffic volume.

Mistake 1: Buying enterprise testing tools at sub-100k traffic.

Paying $1,500/month for AB Tasty or $36,000/year for Optimizely at 40k monthly visitors is the most expensive common error. The tools are excellent. Your traffic cannot feed them. Switch the budget to personalization, search, or email and you will see more lift.

Mistake 2: Running A/B tests without pre-calculating the MDE.

Minimum detectable effect (MDE) is a function of baseline conversion rate, traffic per variant, desired confidence, and desired power. Calculate it before starting the test using Evan Miller’s calculator or equivalent. If the MDE comes out above 25-30%, the test is not worth running. You will either call a false positive or never reach significance. The one exception is pricing split testing, where the expected lift is usually large enough to clear the threshold even at low traffic.
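As a rough illustration of that pre-check, the sketch below inverts the standard two-proportion sample size formula with a bisection search to find the smallest relative lift a given amount of traffic can detect. The function names are hypothetical, and the 25% cut-off is taken from the low end of the range above. Dedicated calculators may differ by a few percent.

```python
from statistics import NormalDist


def required_per_variant(baseline, relative_lift, confidence=0.95, power=0.80):
    """Visitors per variant needed to detect the given relative lift."""
    p1, p2 = baseline, baseline * (1 + relative_lift)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2) + NormalDist().inv_cdf(power)
    return z ** 2 * (p1 * (1 - p1) + p2 * (1 - p2)) / (p2 - p1) ** 2


def minimum_detectable_effect(baseline, visitors_per_variant):
    """Smallest relative lift detectable with this traffic (bisection search).

    If even the largest possible lift is undetectable, the upper bound is returned.
    """
    low, high = 1e-4, (1 - baseline) / baseline  # keep the lifted rate <= 100%
    for _ in range(60):
        mid = (low + high) / 2
        if required_per_variant(baseline, mid) > visitors_per_variant:
            low = mid   # too small a lift for this much traffic
        else:
            high = mid
    return high


def worth_running(baseline, monthly_visitors, months=1.0, max_mde=0.25):
    """Apply the rule of thumb: skip the test if the MDE exceeds max_mde."""
    per_variant = monthly_visitors * months / 2
    return minimum_detectable_effect(baseline, per_variant) <= max_mde


mde = minimum_detectable_effect(0.02, 15_000)  # 30k/month store, one month, two variants
print(f"MDE at 15,000 visitors per variant: {mde:.1%}")
print("30k/month worth testing:", worth_running(0.02, 30_000))
print("10k/month worth testing:", worth_running(0.02, 10_000))
```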

Mistake 3: Paying for personalization tools that need manual variant creation.

Some platforms like Mutiny and Dynamic Yield require the marketing team to create variant content for each experience. At low traffic, the labor cost of producing those variants outruns the value they generate. AI-driven tools that generate experiences automatically from existing site content (Pathmonk, Nosto) match small-team labor constraints better.

Mistake 4: Treating heatmaps as the answer instead of the question.

Heatmaps generate hypotheses, not conclusions. A click map showing 40% of visitors clicking on a non-clickable element is a prompt to change the element, not evidence that changing it will lift conversion. Pair qualitative tools with personalization engines that can act on the hypothesis at the visitor level.

Mistake 5: Stacking three or four popup tools.

Privy plus Justuno plus OptiMonk plus Klaviyo popups is a common accidental stack in Shopify stores. The tools overlap heavily and end up firing competing overlays at the same visitor. Pick one popup platform and one email/SMS platform. Overlap creates UX clutter that lowers conversion rate.

Mistake 6: Skipping the measurement layer.

GA4 plus Shopify’s native conversion pixel is the minimum measurement layer. Without clean baseline tracking, none of the tools above produce attributable results. The setup cost is zero dollars and a few hours of real work. Conversion attribution in a cookieless environment is a related problem that becomes more pressing each quarter as third-party cookie deprecation continues. If the measurement layer is unclear, start with a structured CRO audit before adding any new tool.

Mistake 7: Ignoring mobile.

Most sub-100k stores get 60%+ of traffic from mobile and 30-40% of revenue from mobile. The conversion rate gap between desktop and mobile is usually two to three percentage points. If you are not prioritizing mobile-specific UX in your CRO program, the stack will underperform regardless of tool choice. The guide to proven mobile conversion rate strategies is the starting reference.

How Pathmonk helps e-commerce stores below 100k visitors convert more without running A/B tests

Pathmonk’s architecture is built directly against the constraint this article describes: it works at the visitor level, so it does not need the traffic volume an A/B test requires.

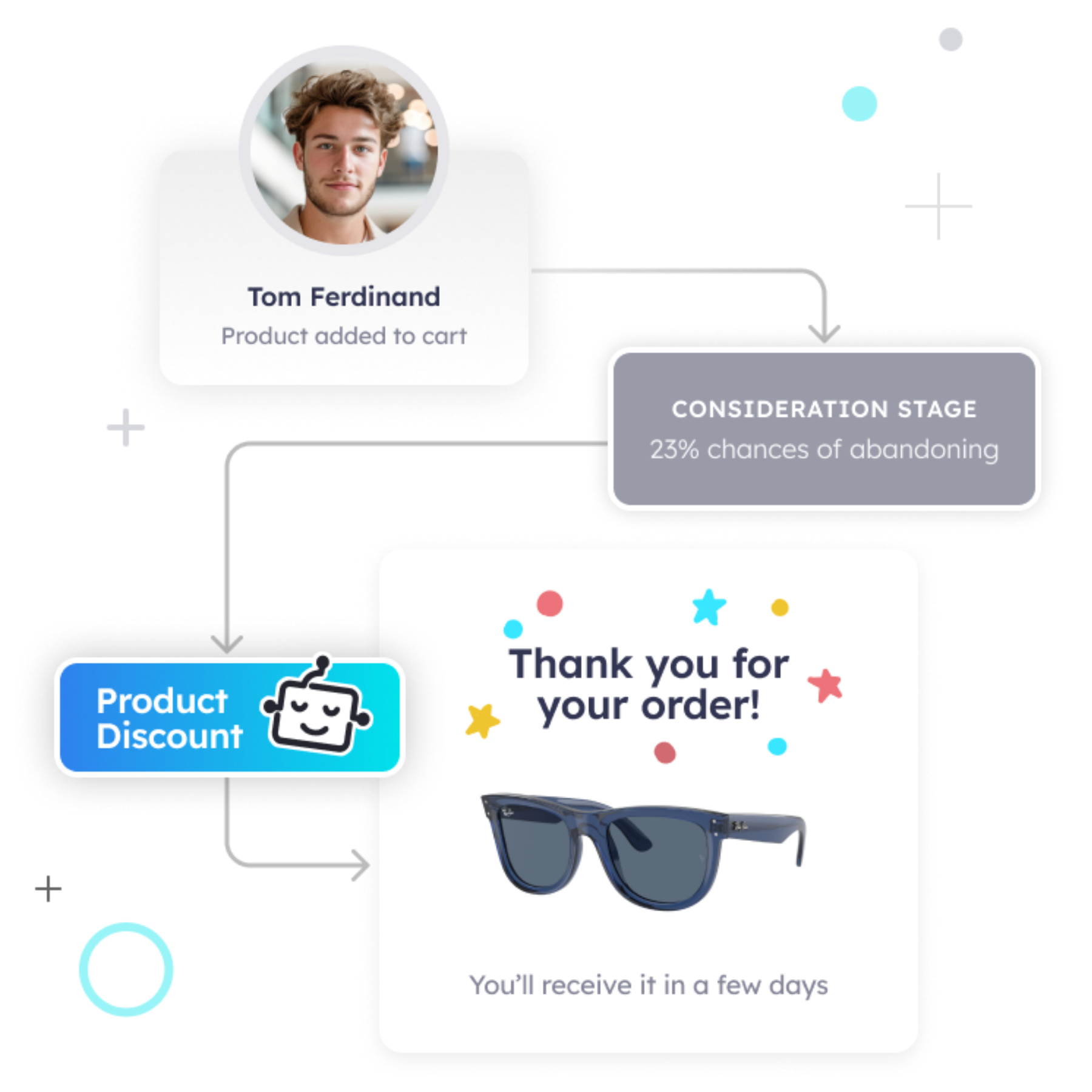

Instead of running split tests that need statistical significance on page-level variants, Pathmonk operates through three mechanisms: intent profiling, microexperiences, and self-optimization.

- Intent profiling uses behavioral fingerprinting to classify each visitor in real time into one of three buying stages (awareness, consideration, decision) based on signals like session depth, referrer, on-page behavior, and scroll patterns. The classification does not require cookies, which means it keeps working regardless of browser privacy changes. The result is that every visitor arrives at the site with an inferred intent stage already attached to their session. This is the technical foundation described in how Pathmonk detects the stage of the customer journey.

- Microexperiences are the intervention layer. Based on intent stage and behavioral signals, Pathmonk triggers targeted on-page experiences: stage-appropriate explainer videos for awareness visitors, social proof and reviews for consideration visitors, interactive product quizzes for high-intent visitors struggling with choice, or incentive-based offers at decision stage. The conversion goal is always the same for every visitor. What changes is the supporting content that gets each visitor there. The underlying design concept is the micro-moment, a behavioral window in which a visitor is most likely to respond to a targeted intervention. More on what a microexperience is.

- Self-optimization replaces the A/B testing loop. Pathmonk runs a 50/50 traffic split by default: 50% of visitors see microexperiences, 50% see the unchanged site. Conversion uplift is reported with confidence intervals. Once the uplift is validated, the customer can manually scale exposure from 50% up to 95% of traffic, keeping a 5% control group for ongoing measurement. This gives teams the rigor of a controlled experiment without requiring them to design variants, wait months for significance, or run separate tests per surface. The statistical method is documented in detail in Pathmonk’s statistics guide.
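For readers who want to sanity-check a holdout result themselves, here is a generic sketch of uplift with a confidence interval from a 50/50 split. It uses a plain normal-approximation (Wald) interval on the difference in conversion rates. This is an assumption for illustration, not Pathmonk's documented method, and the visitor and order counts are made up.

```python
from math import sqrt
from statistics import NormalDist


def holdout_uplift(control_visitors, control_orders,
                   exposed_visitors, exposed_orders, confidence=0.95):
    """Relative uplift plus a Wald interval on the absolute rate difference."""
    p_control = control_orders / control_visitors
    p_exposed = exposed_orders / exposed_visitors
    diff = p_exposed - p_control
    std_err = sqrt(p_control * (1 - p_control) / control_visitors
                   + p_exposed * (1 - p_exposed) / exposed_visitors)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return {
        "relative_uplift": diff / p_control,
        "ci_low": diff - z * std_err,
        "ci_high": diff + z * std_err,
        "validated": diff - z * std_err > 0,  # interval excludes zero
    }


# One month at 30k visitors, split evenly: 2.0% control vs 2.5% exposed.
result = holdout_uplift(15_000, 300, 15_000, 375)
print(f"uplift {result['relative_uplift']:.0%}, "
      f"difference between {result['ci_low']:.2%} and {result['ci_high']:.2%}, "
      f"validated: {result['validated']}")
```

A single large comparison like this clears significance at traffic levels where a series of small page-level tests would not, because the expected effect is bigger.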

The business math is straightforward. A 30k/month store with a 2% baseline that gets a validated 25% uplift from Pathmonk ($450/month) produces roughly 150 additional orders per month. At an $80 AOV, that is $12,000 in incremental monthly revenue against a $450 cost, for a payback period inside two weeks. The math works at smaller traffic volumes because the mechanism operates at the visitor level, not the aggregate level.
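The same arithmetic, as a small Python sketch you can rerun with your own numbers. The `gross_margin` parameter is an added assumption that is not part of the example above: at 1.0 the payback is measured against revenue, and a lower value measures it against margin instead.

```python
def incremental_orders(monthly_visitors, baseline_rate, relative_uplift):
    """Extra orders per month from a relative conversion uplift."""
    return monthly_visitors * baseline_rate * relative_uplift


def payback_days(monthly_tool_cost, extra_orders, aov,
                 gross_margin=1.0, days_in_month=30):
    """Days of incremental gain needed to cover one month of tool cost."""
    daily_gain = extra_orders * aov * gross_margin / days_in_month
    return monthly_tool_cost / daily_gain


extra = incremental_orders(30_000, 0.02, 0.25)
print(f"extra orders per month: {extra:.0f}")
print(f"incremental revenue: ${extra * 80:,.0f}")
print(f"payback on revenue: {payback_days(450, extra, 80):.1f} days")
print(f"payback at 30% margin: {payback_days(450, extra, 80, gross_margin=0.3):.1f} days")
```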

Pathmonk is a Shopify Certified Personalization Partner and integrates natively with Shopify, Magento, WooCommerce, and Prestashop. Installation runs through a plug-and-play snippet or Google Tag Manager. The default content library is generated automatically from the existing site, so there is no new content production overhead.

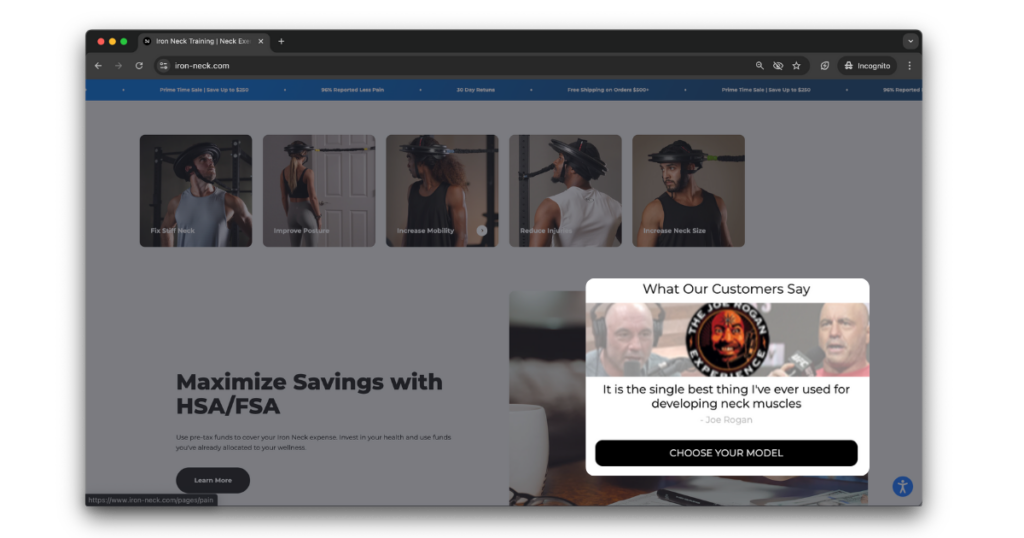

How Iron Neck increased e-commerce sales 26% in under four weeks

Iron Neck is a DTC fitness equipment brand selling isometric neck strengtheners to athletes, physical therapists, and combat sports practitioners. The company was founded in 2012 by former UCLA football player Mike Jolly after published research on neck strength and concussion prevention. Traffic to the site sits in the sub-100k tier typical of specialist DTC brands, which ruled out any testing program that depends on enterprise traffic volume.

The core conversion problem was decision friction across a multi-variant product line:

- Visitors arrived researching neck strength training without prior category knowledge

- The catalog presented multiple model variants without clear guidance on use-case fit

- Marketing Manager Sam Kuhn identified through qualitative analysis that most visitors were self-researching and leaving without confidence in their product choice

- Standard A/B testing on the PDP or quiz flow would have taken months to reach significance at their traffic volume

The underlying insight was that the catalog’s complexity exceeded what a static product page could explain to a cold visitor.

Iron Neck deployed Pathmonk to run stage-aligned microexperiences across the site. Awareness-stage visitors received short explainer videos, consideration-stage visitors saw social proof in the form of testimonials and customer reviews, and decision-stage visitors showing hesitation were routed through a short quiz that recommended the best-fit Iron Neck variant based on training goals.

- 26% lift in website conversion rate in under four weeks

- Measurable reduction in product-match errors (fewer exchanges on wrong-model purchases)

- Higher average session engagement across all funnel stages

The mechanism worked because Iron Neck did not need to A/B test their way to these gains: Pathmonk’s 50/50 split validated the uplift inside the four-week window, after which exposure was scaled to the majority of traffic.

FAQs on e-commerce optimization

What is the minimum traffic needed for A/B testing in e-commerce?

For a 2% baseline conversion rate, detecting a 10% relative lift requires roughly 160,000 visitors across both variants at 95% confidence and 80% power. A regular testing cadence at that sensitivity needs well over 100k monthly visitors. Below 100k, detection thresholds climb fast: at 30k monthly visitors, even a 20% lift takes about six weeks of full traffic to detect. Use Evan Miller’s calculator before launching any test, and read the full CRO testing guide before designing a program.

Can Pathmonk replace A/B testing at low traffic?

Pathmonk replaces the most common use of A/B testing (which variant converts better) by adapting content to each visitor individually. It still runs a 50/50 control split to measure uplift, so teams get validated results without the traffic volume a classic test requires. It does not replace strategic testing on large structural changes like a full checkout redesign.

Should I use a tool priced per monthly tracked user (MTU) at low traffic?

Avoid MTU-priced tools below 100k traffic. Fixed fees dominate the bill at the low end and the per-user economics do not work until you have scale. Pageview-based pricing (Pathmonk) or contact-based pricing (Klaviyo) is a better fit for the sub-100k tier.

How do on-site search tools differ from product recommendation tools?

Search tools improve how visitors find products through explicit query input. Product recommendation tools surface products based on browsing behavior without requiring a query. At low traffic both matter, but fixing search is usually higher-leverage because poor search returns zero-result pages that bounce visitors immediately, whereas poor recommendations fail silently.

What about attribution? How do I know which tool in the stack caused the lift?

Use a 50/50 holdout wherever the tool supports it. Pathmonk enforces this by default. For tools that do not, run a clean before/after comparison over a matched time window, controlled for seasonality and traffic source mix. GA4 alone is weak at tool-level attribution because of modeled data and cookie loss, so lean on platform-native reporting and in-tool holdouts.

Does Shopify Magic or built-in Shopify AI replace the need for external personalization tools?

No. Shopify’s native AI handles product recommendation blocks and basic cross-sells. It does not classify visitor intent, personalize page content by buying stage, trigger interactive quizzes, or run microexperiences conditional on behavior. The functional overlap with dedicated tools like Pathmonk or Rebuy is small.

Are exit-intent popups still worth using in 2026?

Yes, but only when triggered on specific intent signals rather than mouse-movement or time-on-page alone. Used that way, exit-intent captures 2 to 6% of would-be bouncers. Used as a blanket overlay on every visitor, it lowers conversion rate more often than it raises it.

How long should I run the sub-100k stack before evaluating results?

Give behavior tools (Clarity, Hotjar) two weeks to collect meaningful sessions before drawing conclusions. Give personalization tools (Pathmonk) four weeks to clear a 50/50 test at sub-100k volume. Give email and SMS flows (Klaviyo) one full purchase cycle plus thirty days to see cohort-level results.

When does it make sense to graduate to a full A/B testing tool like VWO or Convert?

Around 100k monthly visitors, when you can run about two tests per month at a 20% MDE on high-impact pages. Below that threshold, the testing tool will sit idle between tests and the cost per validated learning is high. Personalization engines like Pathmonk remain in the stack even after you cross 100k because they work on a different part of the problem.

Key takeaways

- At sub-100k monthly visitors, traditional A/B testing fails because the math does not support detection of realistic 3-15% lifts inside a useful testing window. This is the Significance Wall.

- The working sub-100k stack has four layers: qualitative behavior, AI personalization, intent capture and lead recovery, and on-site search.

- Microsoft Clarity (free) plus Pathmonk (from $450/month) plus Klaviyo ($20 to $150/month) plus Klevu or Searchanise covers the core stack for most sub-100k stores at under $800/month total.

- AI-driven personalization sidesteps the Significance Wall by adapting to individual visitors rather than validating page-level variants through months of split tests.

- Enterprise testing tools (Optimizely, AB Tasty) are structurally misfit below 100k visitors regardless of budget.

- Exit-intent, email recovery, and on-site search matter more at low traffic because each recovered visitor carries a higher proportional value against blended acquisition cost.

- Pre-calculate MDE before any test, avoid MTU pricing, pick one popup tool, and do not let the heatmap replace the hypothesis.