AI lets you optimize for multiple conversion goals simultaneously by scoring each visitor’s intent in real time and routing them to the goal with the highest expected value for that specific session. Instead of running separate A/B tests for every goal, which forces each test to compete for the same finite traffic, AI treats your conversion goals as a portfolio: it assigns each visitor to the goal they are most likely to complete and that matters most to your pipeline economics.

The practical shift is from “pick the best variant for the average visitor” to “pick the best goal for this visitor.” That requires three things: intent classification at the visitor level, goal ranking by expected value, and a decision layer that matches one to the other without manual segmentation rules. Static A/B tests cannot do this. Rule-based personalization engines approximate it but collapse past two or three segments.

Table of Contents

Baymard Institute’s research across 327 top-grossing e-commerce sites consistently finds that most websites expose visitors to multiple simultaneous conversion paths: newsletter signup, free shipping offer, product detail, add to cart, checkout, and in B2B variants, demo booking, trial start, gated content, and contact sales. The average site could increase conversion rates by 35.26% through checkout design improvements alone, and most sites never measure the cross-goal interaction effects that this optimization creates.

The problem is not that these goals exist. It is that most CRO programs treat them as if they should all be optimized independently, on the same traffic, using the same statistical frame. When every goal is optimized in isolation, the highest-value goal (usually demo or checkout) cannibalizes the medium-value goals (trials, newsletter, content downloads), and low-intent visitors who would have converted on those medium goals leave with nothing.

What has changed in the past 18 months is that the cost of computing real-time intent has collapsed. Browser-side behavioral fingerprinting, transformer-based session classifiers, and server-side identity resolution are cheap enough to score intent on every pageview without a marketing ops ticket. Multi-goal optimization is now tractable outside of Amazon, Booking.com, and a handful of platforms with in-house data science teams.

This article covers what AI-driven multi-goal optimization looks like mechanically, how to structure the expected-value math, where implementations fail, and when you should not bother.

Why the multi-goal problem breaks traditional CRO

Traditional CRO is built on a single-goal frame. You pick a conversion goal (demo booked, purchase completed, trial started), run an A/B test on some element (headline, CTA, form), and declare a winner when the variant reaches 95% confidence. That works when there is exactly one goal that matters.

The moment there are two or more goals with meaningfully different values and different visitor fits, the math falls apart. Optimizing for demo-booked can reduce newsletter signups by 30% to 50% without anyone noticing, because the test never measured it.

A concrete example. Say your site has three goals:

- Book a demo (value per conversion: $8,000 in weighted pipeline credit)

- Start free trial (value: $600 expected ARR)

- Download gated content (value: $45 MQL credit)

If 100 visitors land on a page and you optimize the hero CTA for “Book a demo,” you might push demo-booked from 1.2% to 1.8%. That looks like a win. What the test does not see:

- 15 trial-ready visitors who would have clicked “Start trial” now bounce because the hero is pointing them at a sales call they are not ready for

- 40 research-stage visitors who would have downloaded the guide leave with nothing

- Expected revenue per 100 visitors: $144 before, $177 after demo push, but $210 under balanced routing

This is the goal cannibalization problem. Single-goal A/B testing systematically understates it because it only measures the goal being tested. The lift is real on paper. The pipeline is worse.

There is a second failure mode that compounds the first: statistical power. If your site gets 30,000 monthly visitors and you want to detect a 15% relative lift at 95% confidence on a 2% baseline conversion rate, you need roughly 16,000 visitors per variant. Running separate tests for four goals sequentially means four months of testing per element. Most marketing teams do not have four months. So they either test fewer goals or run tests simultaneously and accept the confounded results.

AI changes the frame because it does not need to isolate goals. It can compute expected value across all goals in the same session and route accordingly.

Introducing Conversion Portfolio Optimization

Conversion Portfolio Optimization (CPO) treats your full set of conversion goals as a portfolio and allocates each visitor to the goal with the highest expected value for that specific visitor-session pair. It borrows from financial portfolio theory: instead of picking the single best asset, you optimize the weighted return across the full basket conditional on the state of the world. It is the logical extension of hyper-personalization, which segments every visitor but usually stops short of treating every goal as a routing decision.

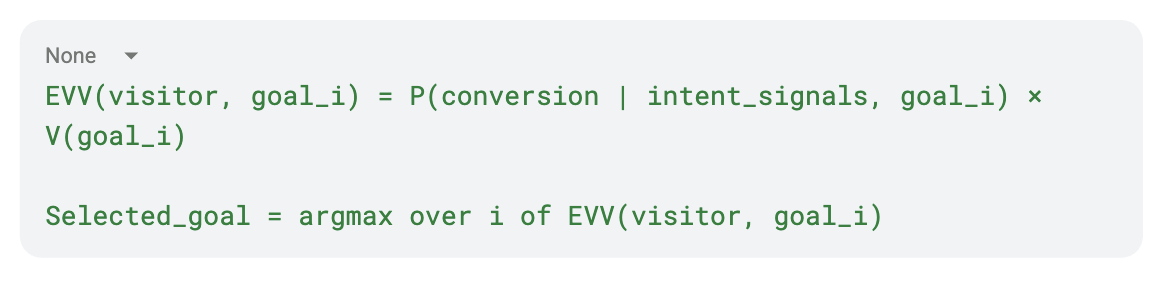

The core formula:

Where V(goal_i) is the monetary value of conversion on goal i, and P(conversion | intent_signals, goal_i) is the model’s probability that this visitor converts on goal i given what you have observed about them.

This reframes the problem from “which variant wins” to “which goal wins for this visitor.” The portfolio layer sits above individual variant tests. Variant tests still run, but they run in parallel per goal, and the traffic allocator decides who sees which test at all.

Three implications follow.

First, goal value has to be quantified. If you cannot attach an expected dollar value to each goal, you cannot rank them. That means working backwards from pipeline: MQL → SQL conversion rate × SQL → Close rate × Average deal size, then discounted by time-to-close. A content download at the awareness stage might be worth $30. A demo booking at the decision stage might be worth $4,000. If the model does not see those numbers it will optimize for volume, not revenue.

Second, intent signals have to be measurable and predictive. Scroll depth alone is not enough. The signals that actually predict goal-specific conversion include entry page type (pricing vs. blog vs. homepage), referrer intent class (branded search, non-branded search, paid, direct), session depth, time on high-intent pages, prior visits, and firmographic match where available. The model needs a training set of converted sessions by goal to learn the weights. Behavioral data is the substrate.

Third, the system has to act. Scoring a visitor and then routing them through the same generic experience defeats the purpose. The output of the model has to feed a rendering layer that changes the on-page experience: a microexperience, a dynamic CTA swap, a modal trigger, a journey redirect.

Without all three, you have analytics, not optimization.

Get your website’s conversion score in minutes

- Instant CRO performance score

- Friction and intent issues detected automatically

- Free report with clear next steps

How AI computes expected value per visitor in real time

The mechanics break into four stages that run on every session.

Stage 1: Signal capture. The page fires a JavaScript SDK that collects behavioral events (scroll, click patterns, dwell time, copy-paste, hesitation on forms), entry context (URL, referrer, UTM), and inferred firmographic data (company domain for B2B, geo, device class). Modern implementations do this cookielessly using browser-side fingerprinting tied to a server-side identity graph. Cookieless methods are now structurally preferable because cookie-based tracking loses 30% to 60% of signal in consent-fatigued EU audiences.

Stage 2: Intent classification. A model (typically a gradient-boosted tree ensemble or a transformer trained on session sequences) maps the signal vector to a probability distribution over buying journey stages: awareness, consideration, decision. Some systems expose these as continuous scores. Good systems update the classification every time new signals arrive within the session.

Stage 3: Goal-conditional probability. For each goal in the portfolio, a separate model head estimates the probability of conversion conditional on the intent class and the specific goal. A decision-stage visitor has a high P(demo | decision) but a low P(content download | decision) because decision-stage visitors do not download awareness-stage material.

Stage 4: Expected value routing. Multiply probability by goal value, take the argmax, and serve the matching experience. If EVV(demo) > EVV(trial) > EVV(download), show a demo-booking microexperience. If the visitor’s intent is awareness-stage and the top goal becomes “download,” show a soft content offer instead.

The performance gain over rule-based personalization comes from three places: the model finds interactions humans miss (mobile users from organic with session depth > 3 convert on trial at 4x baseline), it updates in real time rather than on a session snapshot, and it requires no manual segment maintenance.

One non-obvious detail: the model has to optimize against a reserved control group to know whether it is actually improving outcomes. Without a holdout, you cannot distinguish genuine lift from model noise, and the system will drift. A 5% untouched control group is the floor for honest measurement.

Implementation: the five-layer stack

A working CPO implementation requires five layers, each of which can fail on its own.

1. Goal definition and value assignment

List every conversion goal on your site. Assign a dollar value. Be honest about it. If you have three MQL paths (demo, trial, content), their values should reflect downstream conversion rates, not wishful thinking. See what counts as a conversion goal and how to structure secondary conversion goals before you start.

Common mistakes here:

- Assigning equal value to all goals “for simplicity.” The whole point of the portfolio approach is that goals have different values.

- Ignoring time-to-close. A demo that converts to closed-won in 45 days is worth less today than one that converts in 15, even if the contract size is the same. Apply a discount factor.

- Forgetting secondary goals. Newsletter signups and PDF downloads are not zero-value. They feed nurture sequences. Assign them something even if it is $5.

2. Signal instrumentation

You need behavioral events beyond pageviews. Minimum set: scroll depth bucketed in quartiles, click patterns on CTAs, form field focus duration, dwell time on pricing and feature pages, session count (is this their first visit or their fourth?), and referrer classification.

Tooling constraints: GA4 alone is insufficient because it is aggregate and sampled. You need a session-level event stream. Segment, RudderStack, or a purpose-built SDK are the three realistic options. GA4 attribution can sit alongside this as a reporting layer but not as the decision substrate.

3. Intent model training

If you are building in-house, you need 10,000+ labeled sessions per goal as a starting training set. Labels come from conversion outcomes (converted on goal X / did not). A decision tree ensemble will outperform logistic regression by 15% to 25% on F1. Transformer-based sequence models outperform trees by another 10% but require more infrastructure.

If you are using a vendor, the model is pre-trained across their customer base and fine-tunes to your data within the first 14 to 21 days of deployment.

4. Decision and rendering layer

The model outputs a goal recommendation. Something has to use it. That means microexperiences, dynamic CTAs, modal triggers, or dedicated landing pages that can be invoked in real time. This is where most in-house implementations stall: the data science team has a working model, marketing has no way to operationalize it.

5. Measurement and control

A/B split with a true control group. 50/50 at launch is standard. Once the model is hitting confidence thresholds (95% on primary KPI for at least 14 days of stable traffic), you can escalate to 80/20 or 95/5 in favor of the optimized experience. The control group never goes to zero. See why A/B discipline matters specifically here.

Common failure modes

- Over-optimizing for the highest-value goal. Without a proper portfolio frame, the model funnels everyone toward demo-book because demo has the highest value. Demo rate rises 40%. Trial rate falls 70%. Total pipeline drops. The fix is constraining the optimizer with a minimum viable rate on secondary goals, or using a value function that penalizes zero-conversion sessions.

- Poor goal value estimates. If you guess at V(goal), the optimizer inherits your guess. Garbage in, garbage out. A tight CPO implementation needs good pipeline economics before it can run. If your MQL-to-SQL rate is unknown, measure it first.

- Insufficient signal depth. A model trained only on pageview count and time-on-site will not outperform a static rule. Minimum useful feature set is 20 to 40 behavioral variables per session, also the floor for diagnosing why visitors don’t convert.

- Ignoring user context fit. A hyper-optimized demo CTA shown to a student doing homework research at 2 AM is not going to convert. Good models include entry-point context, micro-moments, and bounce probability as features, not just value maximization.

- Confusing intent score with fit score. Intent tells you where in the journey the visitor is. Firmographic fit tells you whether you want them at all. For B2B sites, a high-intent visitor from a company outside your ICP is worth less than a moderate-intent visitor who matches. Both scores need to be in the EVV calculation, which means you also need to identify which companies are visiting.

- Running without control group discipline. A model that cannot be measured cannot be improved. Vendors promising optimization without a holdout are selling a black box.

When Conversion Portfolio Optimization does not work

CPO is not universally applicable. Four situations where it fails.

Very low traffic sites. If you are under 10,000 monthly visitors, the model has insufficient data to learn stable patterns. Better to run focused single-goal tests until you have volume.

Single dominant goal by a wide margin. If 95% of your site’s value comes from one goal and secondary goals are noise, the portfolio overhead is not worth it. Optimize the dominant goal.

No measurable value differentiation. If every conversion on your site drops into the same CRM bucket with no downstream measurement, you cannot assign different values. Fix the attribution chain first.

High regulatory constraints on routing. Financial services, healthcare, and some regulated B2B verticals have rules about equal treatment of visitors or required disclosures that constrain dynamic experience routing. CPO still works but the variant space narrows.

How Pathmonk lets you optimize for multiple conversion goals without rebuilding your site

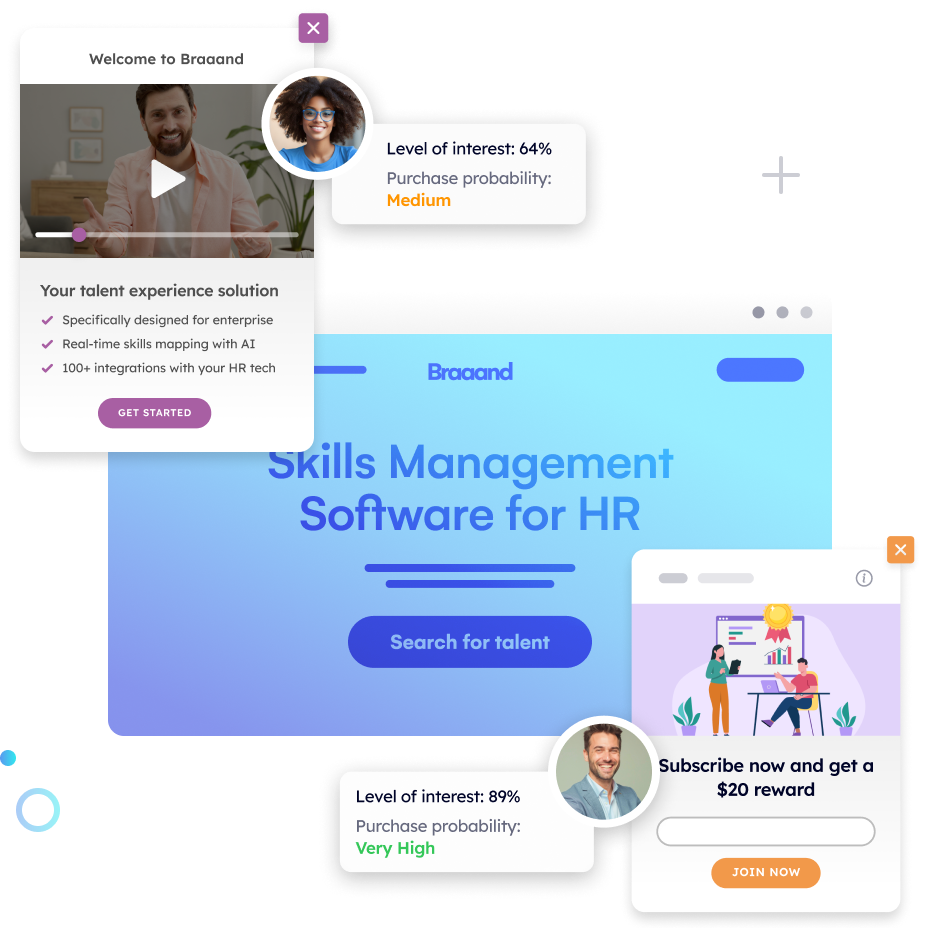

Pathmonk runs as an intelligent layer on top of your existing site rather than replacing or redesigning it. Its AI classifies every visitor into one of three buying journey stages (awareness, consideration, decision) using 200+ behavioral signals captured through a cookieless fingerprint, then triggers the microexperience most likely to move that visitor toward a conversion goal selected from a portfolio of goals you define.

The mechanics map to the CPO stack directly. You define multiple conversion goals in the dashboard, one primary and up to several secondaries such as demo-book, trial-start, content-download, or newsletter signup, and Pathmonk assigns each visitor-session to the goal with the highest modeled expected value. Awareness-stage visitors see soft offers like content recommendations or video explainers. Consideration-stage visitors see comparison prompts, feature-specific CTAs, or qualification flows. Decision-stage visitors see direct demo-booking or checkout microexperiences. The system runs against a reserved 5% control group by default, so the lift is measurable against a clean baseline.

Two things make this practical in ways a custom build typically is not. First, the AI self-optimizes without manual segment maintenance: the model ingests every converted session as a training signal and updates continuously, so adding a new goal does not require rebuilding segmentation rules. Second, the rendering layer is already built. Microexperiences are pre-templated components that can be customized to your brand, so you do not need engineering cycles to operationalize a new goal. Multiple goals live side by side on the same site, and the traffic allocator decides which visitor sees which.

For teams that want to see how many visitors are reaching each goal and where drop-offs happen, the buying journey report surfaces the full funnel by stage, and the revenue report shows weighted conversion value across the portfolio. The A/B framework keeps a 50/50 split until the lift reaches statistical significance, then you can manually escalate traffic exposure up to 95% of visitors seeing the optimized experience while holding the 5% control steady.

The result: multiple goals optimized on the same traffic, with a measurable lift against the pre-Pathmonk baseline, and without a data-science team or a site rebuild.

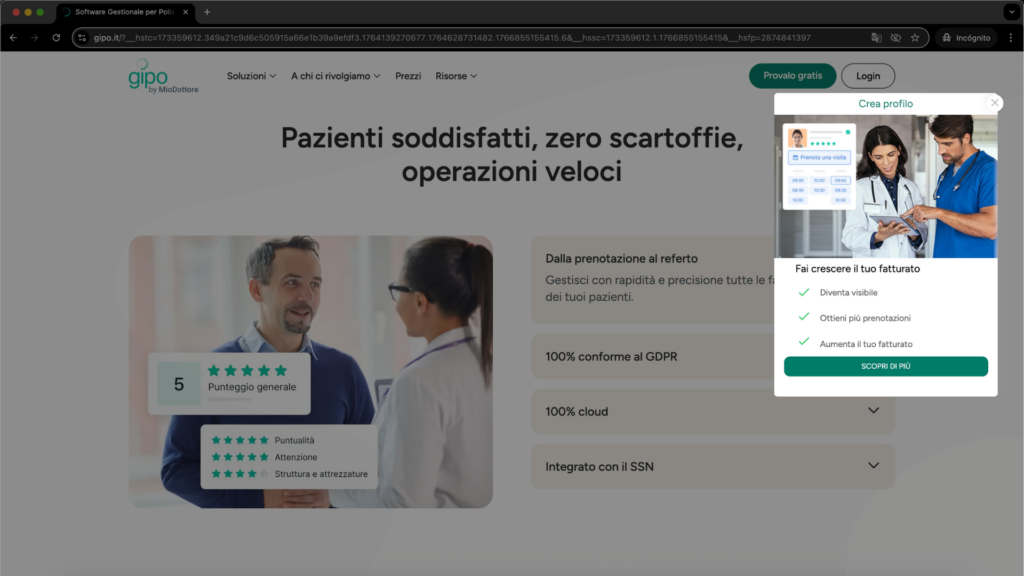

Case study: how Doctoralia lifted conversions by 82% across three markets without separate tests per goal

Doctoralia is a health-tech platform connecting patients with medical professionals. It operates across multiple markets, each with distinct language, visitor behavior, and conversion goal priorities.

The challenge was operationally brutal. Running separate CRO programs per market and per goal would have required a dedicated testing calendar, separate traffic allocation per test, and a multi-month runway to reach significance. With three markets and multiple conversion goals per market, the testing matrix exceeded what the team could execute in a reasonable timeframe.

Doctoralia deployed Pathmonk across all three markets in parallel. The AI classified visitor intent per session, routed each visitor to the highest expected-value goal for their stage and market, and ran A/B against the original with a 5% reserved control.

- +82% average conversion uplift across the three markets

- 2 weeks to statistical significance on primary conversion goals

- Zero separate market-specific test cycles: the model adapted to market-specific behavior patterns automatically

The uplift was durable across all conversion surfaces, meaning the system was not cannibalizing one goal for another. It was genuinely expanding total conversions.

Increase +180% conversions from your website with AI

Get more conversions from your existing traffic by delivering personalized experiences in real time.

- Adapt your website to each visitor’s intent automatically

- Increase conversions without redesigns or dev work

- Turn anonymous traffic into revenue at scale

FAQ on conversion goal optimization

Does multi-goal AI optimization require a data science team?

If you are building in-house, yes: someone has to train and maintain the intent model, manage the feature pipeline, and validate the expected value math. If you use a vendor like Pathmonk with a pre-trained model and rendering layer, a single marketing ops owner is enough.

How is this different from standard website personalization?

Standard personalization is rule-based: if visitor matches segment X, show variant Y. Multi-goal AI optimization computes expected value per goal per visitor in real time and routes accordingly. Rule-based systems collapse when segments multiply. Probabilistic models scale to any number of goals without combinatorial explosion in rules.

Can I run this on top of Google Optimize or my existing A/B testing tool?

Google Optimize was sunset in September 2023. Most replacement tools (VWO, AB Tasty, Optimizely) are single-goal-centric and do not natively compute expected value routing across multiple goals. You can build this on top of them if you own the experimentation framework, but most teams find it simpler to use a purpose-built platform.

How much traffic do I need before AI-based multi-goal optimization is worth it?

Minimum viable traffic is around 10,000 monthly visitors, assuming 3 to 4 goals and realistic baseline conversion rates of 1% to 3%. Below that threshold, model updates are too slow and variance overwhelms signal. Above 50,000 monthly visitors, the lift is typically larger and more stable.

What happens if two goals have equal expected value for the same visitor?

The optimizer picks one deterministically (usually the higher-value goal, or by tie-breaker) or probabilistically (sampling by EVV weight). Well-designed systems log ties for review, since they often signal missing features or goal overlap.

How do I prevent high-value goals from crowding out low-value ones?

Two approaches. The first is to set minimum conversion rate floors per goal that the optimizer cannot push below. The second is to cap the traffic share any single goal can receive. Both are configurations in the optimizer, not algorithmic changes. Good platforms expose these as controls.

Does this work for e-commerce sites with product-level optimization?

Yes, but the goal definition shifts. Goals become product categories or checkout paths rather than distinct actions. The expected value is typically average order value by category weighted by conversion probability. The mechanics are the same, but the portfolio is structured around SKU groupings.

How does GDPR or privacy regulation affect intent scoring?

Cookieless fingerprinting can operate without consent under most GDPR interpretations because it does not store personally identifiable information. Confirm with your DPO. Consent-gated approaches lose 30% to 60% of intent signal in consent-fatigued EU audiences, so cookieless is structurally preferable.

Can I integrate expected value routing with my CRM lead scoring?

Yes, and you should. The intent score from the website becomes an input feature for CRM lead scoring, and the CRM closed-won data becomes a training signal for the website model. The loop closes when inbound leads arrive with intent and firmographic context attached.

What is the typical lift from single-goal A/B testing to multi-goal AI optimization?

Published Pathmonk cases range from around 80% to 280% lift on primary conversion. Secondary goals often see larger relative lifts because they were previously ignored. Actual lift depends on baseline rate, traffic volume, and portfolio complexity.

Key takeaways

- Most websites have 3 to 11 conversion goals, and single-goal A/B testing systematically mismeasures the cross-goal impact of any change

- Conversion Portfolio Optimization assigns each visitor to the highest expected-value goal using EVV = P(conversion | intent) × V(goal) as the decision rule

- Real-time intent scoring requires behavioral signal capture beyond pageviews, a trained classifier, goal-value estimates, and a rendering layer that can act on the output

- Minimum viable traffic is around 10,000 monthly visitors. Below that, focus on single-goal tests first

- A 5% reserved control group is the floor for honest measurement. Without it, lift is unverifiable

- Common failure modes include over-optimizing for high-value goals (goal cannibalization), poor value estimates, insufficient signal depth, and ignoring firmographic fit

- Pathmonk operates as an AI layer on top of an existing site, running multi-goal portfolio optimization without a rebuild or a data science team