AI decides which content to show each visitor by reading their behavior in real time, classifying their position in the buying journey, and serving the supporting content most likely to move them toward the conversion goal. The decision happens in milliseconds, on every page, every session. The conversion goal stays fixed. What the AI changes is the copy, the offer framing, the proof points, and the form length around that goal.

The quality of that decision depends on three inputs: real-time intent signals instead of stale segment data, a cookieless identity layer that holds after consent rejection, and an autonomous test-and-learn loop that does not require hand-written rules. Tools built on hard-coded triggers, static personas, or cookie-based identity underperform because they lose resolution the moment a visitor refuses consent.

Visitors don’t behave the way personas predict

McKinsey’s “Next in Personalization 2021” research found that companies excelling at personalization generate 40% more revenue from those activities than average performers. That number has held across three years of replications. What has changed is the mechanism that delivers it. Personalization built on rules and segments peaked around 2020 and has been losing ground to real-time intent-based systems since.

Three forces drive the shift. Third-party cookie deprecation has broken the identity layer that segment-based tools depended on. Consent rejection rates have climbed past 40% in most European markets. Visitor journeys have fragmented: a B2B buyer in 2026 may hear a podcast mention, make three organic visits, click a paid ad, and only then arrive from ChatGPT, and their behavior does not fit the cohort they technically belong to. That is why AI in marketing has moved from “who is this visitor” to “what is this visitor trying to do right now,” and why the distance between useful website personalization and expensive theater keeps widening.

What AI content decisioning actually decides

AI content decisioning is the real-time selection of which content variant to serve a visitor, not the generation of new content on the fly. The tools, signals, and failure modes behind each are different.

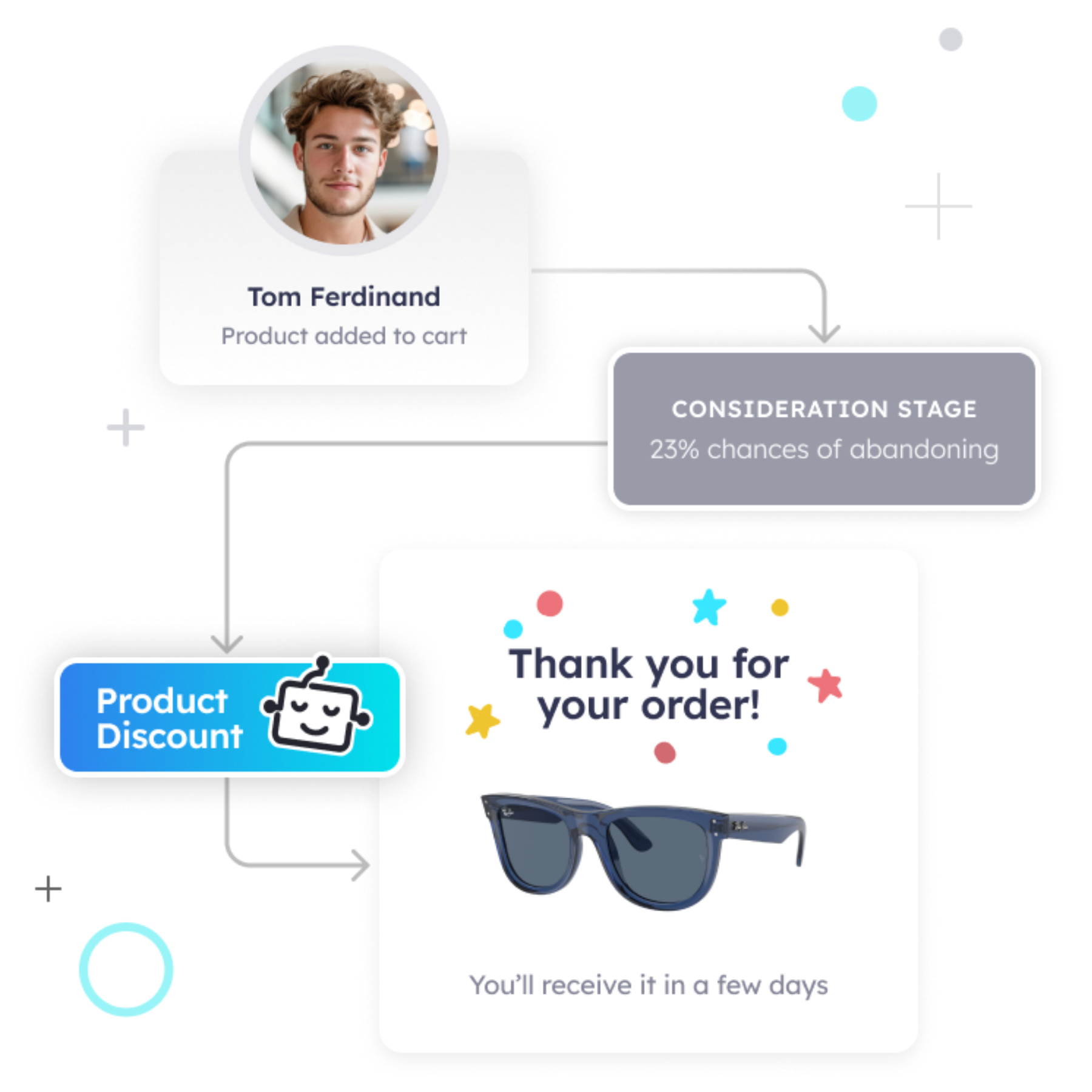

Three categories of AI act on website content. Generative AI writes new copy from a prompt. Recommendation engines suggest items from a catalog using similarity or co-purchase signals. Decisioning engines pick between pre-built content variants (copy, offer, proof, CTA framing) based on predicted intent. Only decisioning engines adapt the on-page experience for a specific visitor in their current session.

A decisioning engine answers one question continuously: given what this visitor has done in the last 30 to 180 seconds, which pre-built variant has the highest conversion probability right now. Not what copy is objectively better. Not what the aggregate A/B winner is. What works for this visitor state. That framing is closer to one-to-one marketing and the original promise of website personalization with AI than to traditional CRO.
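Stated as a formula (the symbols are notation introduced here for illustration, not taken from any vendor's documentation): let $s_t$ be the visitor state inferred from the last 30 to 180 seconds of behavior and $V$ the set of pre-built variants for the page. The engine serves

$$v_t^{*} = \arg\max_{v \in V} \Pr(\text{conversion} \mid s_t, v)$$

whereas an aggregate A/B test picks a single $\arg\max_{v \in V} \Pr(\text{conversion} \mid v)$ for every visitor and ignores $s_t$.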

The Intent-Content Match framework

Every AI content decision reduces to a three-step loop: read the visitor’s signals, classify their buying-journey stage, and match a content variant with a track record of converting that stage. The framework is simple to state and difficult to implement, which is why most tools that claim to do it do a weaker version.

- Step one is signal capture. The system ingests behavioral data in real time: source, referrer, entry page, pages visited in order, scroll depth, time on page, click sequence, device class, geography at city level, session recency, and return-visit pattern. Good systems capture 200-plus signals per session without writing them to a third-party cookie.

- Step two is stage classification. The AI maps the signal pattern to a point on the buying journey. A visitor who arrived from a long-tail educational query and is reading a how-to post sits in the awareness stage. A visitor comparing feature pages after two prior sessions sits in the consideration stage. A visitor who hit the pricing page twice and watched the demo video sits in the decision stage. Stage classification is where pure rule-based tools collapse: rules can encode five or ten patterns, intent classifiers encode thousands.

- Step three is variant selection. Each stage has a library of content variants, each with a tracked conversion history on that page in that stage. The AI picks the variant with the best expected conversion probability for the current visitor state. The CTA destination and the primary conversion action stay fixed. What changes is the copy, the offer framing, the social proof, the form fields, and the order of information.

If the tool cannot do all three steps continuously, it is not doing intent-content matching. It is doing rule execution with a better UI.
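The loop above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's implementation: `classify_stage` is a hand-written placeholder where a production system would use a trained classifier, and the signal names (`pricing_views`, `watched_demo`, `prior_sessions`, `feature_pages_viewed`), the `VariantStats` type, the example library, and the smoothing prior are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class VariantStats:
    """Tracked history for one variant on one page in one stage."""
    name: str
    impressions: int
    conversions: int

    def expected_rate(self, prior_conv: float = 1.0, prior_imp: float = 20.0) -> float:
        # Smoothed conversion rate, so a variant with very little data
        # is neither over- nor under-rated (prior values are assumptions).
        return (self.conversions + prior_conv) / (self.impressions + prior_imp)


def classify_stage(signals: Dict) -> str:
    """Step two. Placeholder for a learned classifier; uses the article's examples."""
    if signals.get("pricing_views", 0) >= 2 and signals.get("watched_demo", False):
        return "decision"
    if signals.get("prior_sessions", 0) >= 2 and signals.get("feature_pages_viewed", 0) >= 2:
        return "consideration"
    return "awareness"


def select_variant(stage: str, library: Dict[str, List[VariantStats]]) -> VariantStats:
    """Step three. Pick the variant with the best expected rate for this stage."""
    return max(library[stage], key=lambda v: v.expected_rate())


def decide(signals: Dict, library: Dict[str, List[VariantStats]]) -> Tuple[str, VariantStats]:
    """Steps two and three, run on signals captured in step one."""
    stage = classify_stage(signals)
    return stage, select_variant(stage, library)


# Hypothetical variant library with made-up histories.
LIBRARY = {
    "awareness": [VariantStats("program_overview", 500, 10)],
    "consideration": [VariantStats("comparison_table", 300, 15)],
    "decision": [
        VariantStats("case_study", 400, 40),
        VariantStats("generic_banner", 400, 12),
    ],
}

stage, variant = decide({"pricing_views": 2, "watched_demo": True}, LIBRARY)
print(stage, variant.name)
```

Note that only the supporting variant is chosen here; the CTA destination and conversion action are outside the function on purpose.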

The three generations of AI content personalization

Most tools sold as “AI personalization” are still in the second generation, which depended on cohort segmentation that cookies made possible and that cookies no longer make reliable. Knowing which generation a tool belongs to predicts how well it will work on your site in 2026.

- First-generation personalization is rule-based. Someone writes an if-then rule: if visitor is from France, show the French banner. If session duration exceeds 60 seconds, trigger the exit popup. Most popup builders and basic landing-page tools sit here. Rules are fast to set up and easy to debug. They scale linearly with human effort, cannot classify intent beyond what the rule explicitly encodes, and leave most of the traffic on a default experience because no rule matched. This is the category that makes exit-intent popups increasingly outdated: the trigger fires on an event, not on a state.

- Second-generation personalization is cohort-based. The system groups visitors into pre-defined segments (persona, firmographic, account tier) and serves fixed content per segment. Tools like Mutiny and Dynamic Yield built their core around this pattern. The cohort usually comes from a reverse-IP lookup tied to a third-party cookie or a data-provider match. This generation produced the first real wins at scale and still works for narrow account-based plays with a well-documented ICP. Its structural weaknesses are three: humans define the cohorts and miss patterns they did not imagine, content per cohort is static until someone updates it, and the identity layer depended on cookies that no longer reach most users in privacy-regulated markets.

- Third-generation personalization is real-time intent-based. No human defines segments. The AI classifies each visitor continuously, pulls from a variant library, and self-optimizes from conversion feedback. With 500 visitors per page and a dozen content variants, a classifier beats hand-written rules on any realistic metric. This is where agentic AI is reshaping CRO and where intent data platforms outperform traditional lead-generation tools on pipeline quality. Second-generation tools still dominate buyer lists because they were first to market and demo impressively on a single account-matched visit; their failure modes (stale segments, cookie-dependent identity) stay invisible until the tool has been running for six months on degraded data.
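To make the first-generation limitation concrete, here is a small sketch of a rule engine using the two example rules above. The visitor fields (`country`, `session_seconds`) and content names are hypothetical. The point is the fall-through: any visitor that no rule anticipates lands on the default.

```python
from typing import Callable, Dict, List, Tuple

Rule = Tuple[Callable[[Dict], bool], str]

# The two if-then rules from the text, encoded as (predicate, content) pairs.
RULES: List[Rule] = [
    (lambda v: v.get("country") == "FR", "french_banner"),
    (lambda v: v.get("session_seconds", 0) > 60, "exit_popup"),
]


def rule_based_content(visitor: Dict, rules: List[Rule] = RULES,
                       default: str = "default_page") -> str:
    """Return the content of the first matching rule, else the default."""
    for predicate, content in rules:
        if predicate(visitor):
            return content
    return default


def default_share(visitors: List[Dict]) -> float:
    """Fraction of visitors that matched no rule at all."""
    served = [rule_based_content(v) for v in visitors]
    return served.count("default_page") / len(served)


sample = [
    {"country": "FR", "session_seconds": 10},
    {"country": "DE", "session_seconds": 90},
    {"country": "DE", "session_seconds": 20},
    {"country": "US", "session_seconds": 45},
]
print(default_share(sample))
```

Adding coverage means adding rules by hand, which is the linear scaling with human effort described above.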

The signals that make real-time decisions possible

A system that claims to do real-time intent classification but only reads page URL and referrer is not doing real-time intent classification. It is doing traffic-source routing. The depth and freshness of signals are where tool capability actually varies.

Strong behavioral input sets include:

- Source and referrer fidelity (organic, paid, direct, AI chatbot referrer)

- Entry page and navigation sequence

- Dwell time per page and scroll depth per page

- Click pattern and interaction events (form focus, form abandon, pricing hover)

- Session recency and return-visit count without third-party cookies

- Device class, viewport, and geography at city level

- Content category sequence (education, then comparison, then pricing, versus direct-to-pricing)

Signals a decisioning engine should not need: third-party cookies for identity, personally identifiable information, data-provider enrichment, manually defined segments, or CRM match.

Every signal in the first list degrades gracefully and is available under GDPR and CCPA without consent for strictly necessary processing. Every signal on the second list breaks when cookies are rejected or the provider’s data is stale. That is why intent data captured on your own site outperforms intent data bought from a third party, and why proper customer behavior data analysis feeds back into model training.
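As a rough sketch of what a first-party signal record could look like, the structure below holds only fields from the first list and no personal identifiers. The class and field names are assumptions made for illustration, not a real schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PageView:
    path: str
    category: str         # e.g. "education", "comparison", "pricing"
    dwell_seconds: float
    scroll_depth: float   # 0.0 (top) to 1.0 (bottom)


@dataclass
class SessionSignals:
    source: str           # "organic", "paid", "direct", "ai_chatbot"
    device_class: str
    city: str
    return_visit_count: int
    page_views: List[PageView] = field(default_factory=list)

    def category_sequence(self) -> List[str]:
        """Content categories in visit order, with consecutive repeats collapsed."""
        seq: List[str] = []
        for pv in self.page_views:
            if not seq or seq[-1] != pv.category:
                seq.append(pv.category)
        return seq

    def direct_to_pricing(self) -> bool:
        """True when the session's entry page was a pricing page."""
        return bool(self.page_views) and self.page_views[0].category == "pricing"


session = SessionSignals(
    source="organic", device_class="desktop", city="Zurich", return_visit_count=2,
    page_views=[
        PageView("/blog/how-to", "education", 95.0, 0.8),
        PageView("/blog/guide", "education", 40.0, 0.5),
        PageView("/compare", "comparison", 60.0, 0.9),
        PageView("/pricing", "pricing", 30.0, 1.0),
    ],
)
print(session.category_sequence(), session.direct_to_pricing())
```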

Why cookieless infrastructure is not optional in 2026

Any AI personalization tool that still depends on third-party cookies to identify visitors across sessions is operating on a shrinking base of addressable traffic. The infrastructure question is a gating criterion for tool evaluation, not a nice-to-have.

Safari has blocked third-party cookies since 2020. Firefox blocks cross-site tracking cookies by default. Chrome began restricting them for a small share of users in 2024, although Google has since dropped its plan to remove them outright and left the choice to users. Consent rejection rates in the European Union now sit between 35% and 50%. Combined, the addressable base for cookie-based personalization in most European markets is below 40% of total traffic. The cookieless future’s impact on buying journeys and real-time personalization in a cookieless environment both show what breaks when that base erodes.
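The arithmetic behind a figure like that can be sketched as follows. The consent rejection range comes from the paragraph above; the 40% share of traffic on cookie-blocking browsers is a placeholder assumption, not a measured value, and the calculation assumes browser choice and consent behavior are independent.

```python
def addressable_share(blocking_browser_share: float, consent_rejection_rate: float) -> float:
    """Share of traffic where a third-party cookie can still be set and read.

    Assumes the two effects are independent, which is a simplification.
    """
    return (1 - blocking_browser_share) * (1 - consent_rejection_rate)


ASSUMED_BLOCKING_SHARE = 0.40  # hypothetical; replace with your own analytics data

for rejection in (0.35, 0.50):
    share = addressable_share(ASSUMED_BLOCKING_SHARE, rejection)
    print(f"rejection {rejection:.0%} -> addressable {share:.0%}")
```

Under these assumed inputs the addressable share lands between 30% and 39%. Substituting a site's own browser mix and consent rates gives a site-specific number.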

What replaces cookies for identity is fingerprint-based deterministic identification, built from client-side signals that do not require cross-site tracking. Well-designed fingerprint systems re-identify a returning visitor across sessions without storing identifiable personal data, which is why they work under GDPR without consent. Tools that cannot hold identity without third-party cookies lose two capabilities at once: they cannot recognize returning visitors (which degrades segment assignments) and they cannot attribute conversions to prior sessions (which degrades the optimization signal). Both failures compound.
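A heavily simplified sketch of the deterministic part: the same set of client-side attributes always hashes to the same site-scoped identifier, and nothing is written to a cookie. The attribute names and the salt are hypothetical, and production fingerprinting involves far more signals and stability handling than shown here.

```python
import hashlib
import json


def visitor_id(client_signals: dict, site_salt: str) -> str:
    """Derive a stable, site-scoped ID from client-side attributes.

    Keys are sorted before hashing so attribute order does not change the
    result; the salt keeps IDs from being comparable across sites.
    """
    canonical = json.dumps(client_signals, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256((site_salt + "|" + canonical).encode("utf-8")).hexdigest()
    return digest[:16]


signals = {"timezone": "Europe/Zurich", "viewport": "1440x900", "device_class": "desktop"}
print(visitor_id(signals, site_salt="example-site"))
```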

Where most AI personalization tools fall short

The vendor landscape for “AI website personalization” is crowded, and most tools are first- or second-generation systems with AI-branded marketing. Evaluating a tool requires looking past the demo and asking precise questions.

- Rule-based tools (popup builders, exit-intent plugins, basic landing-page tools) trigger on specific events. They detect exit intent and time-on-page thresholds. They cannot classify buying-journey stage, cannot adapt content to signal depth, and cannot self-optimize without a human writing new rules. The content decision is always the one the human wrote.

- Cohort-based platforms (Mutiny, Dynamic Yield, Intellimize) use segments. Segments typically derive from account identification (reverse IP, data-provider match) and serve fixed content per tier or industry. Strong for narrow account-based plays. Weak in two places: the content per segment is hand-configured, and the identity layer usually still depends on cookies or ad-tech data, so traffic without firmographic enrichment falls through to default.

- Generative AI content tools (Jasper, Copy.ai, ChatGPT itself) produce new content from prompts. They do not decide when or to whom to show it. Using generative AI to personalize in-session requires a decisioning layer on top, and most teams underestimate what that layer needs to do.

- Analytics and session-replay tools (Hotjar, FullStory, Microsoft Clarity) report on behavior. They do not act on it. Valuable as diagnostic inputs to a decisioning system, not a substitute for one.

The honest capability table looks like this:

| Capability | Rule-based | Cohort-based | Real-time intent |
| --- | --- | --- | --- |
| Detects stage of buying journey | No | Partial | Yes |
| Works without third-party cookies | Partial | No | Yes |
| Adapts content without human rule edits | No | No | Yes |
| Optimizes autonomously | No | Partial | Yes |
| Scales past 20 variants | No | Partial | Yes |
| GDPR-compliant without consent banner | Depends | No | Yes |

The negative framing follows from how each architecture was built. Cohort-based systems were designed around cookies because cookies were the best identity layer in 2018, and that decision now limits them. A lot of the churn in CRO software purchases in 2025 and 2026 came from teams realizing their cohort-based tool lost half its addressable base without anyone on the vendor side flagging it, while low conversion rates on high-intent traffic persisted for the rest.

How to measure whether the AI is actually deciding well

Aggregate conversion rate lift is the wrong first metric for a content decisioning engine, because it confounds intent classification accuracy with variant quality. Better teams measure both separately.

- Stage-segmented conversion rate. Lift per classified journey stage. Good classifiers produce differentiated lift (biggest gain in decision stage). Flat lift across stages usually means the classifier is not differentiating.

- Time to classification. How many signals the AI needs before committing to a stage. Faster classifiers serve the right variant earlier, which compounds when visitors average 90 seconds on site.

- False-variant cost. How often, and by how much, conversion drops below the default when the AI picks a misaligned variant. Measuring it requires a persistent control group.

- Lift durability. Month-over-month conversion rate in the treated group. Second-generation tools often show strong initial lift that decays as segments go stale. Third-generation tools show rising lift as the model accumulates conversion data. Whether a conversion rate benchmark is being met matters less than whether treated-group lift is trending up or down.

A 5% control group is the minimum infrastructure to measure any of this. Tools that do not preserve a control group cannot be evaluated rigorously; they are running on faith.
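As an illustration of the first metric, stage-segmented lift can be computed from session records tagged with the classified stage and the group they were served in. The record shape `(stage, group, converted)` and the function name are assumptions made for this sketch.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Tuple


def stage_lift(sessions: Iterable[Tuple[str, str, bool]]) -> Dict[str, Optional[float]]:
    """Relative conversion lift of treated over control, per journey stage.

    Each session is (stage, group, converted) with group in {"control", "treated"}.
    Returns None for a stage when the control rate cannot be estimated.
    """
    # counts[stage][group] = [sessions, conversions]
    counts = defaultdict(lambda: {"control": [0, 0], "treated": [0, 0]})
    for stage, group, converted in sessions:
        counts[stage][group][0] += 1
        counts[stage][group][1] += int(converted)

    lift: Dict[str, Optional[float]] = {}
    for stage, groups in counts.items():
        c_n, c_conv = groups["control"]
        t_n, t_conv = groups["treated"]
        if c_n == 0 or t_n == 0 or c_conv == 0:
            lift[stage] = None
        else:
            lift[stage] = (t_conv / t_n) / (c_conv / c_n) - 1
    return lift


# Made-up data: the decision stage doubles, the awareness stage is flat.
data = (
    [("decision", "control", i < 10) for i in range(100)]
    + [("decision", "treated", i < 20) for i in range(100)]
    + [("awareness", "control", i < 5) for i in range(100)]
    + [("awareness", "treated", i < 5) for i in range(100)]
)
print(stage_lift(data))
```

With a 5% control group the control counts per stage are small, so in practice these ratios need enough sessions, or confidence intervals, before they are read as differentiated or flat.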

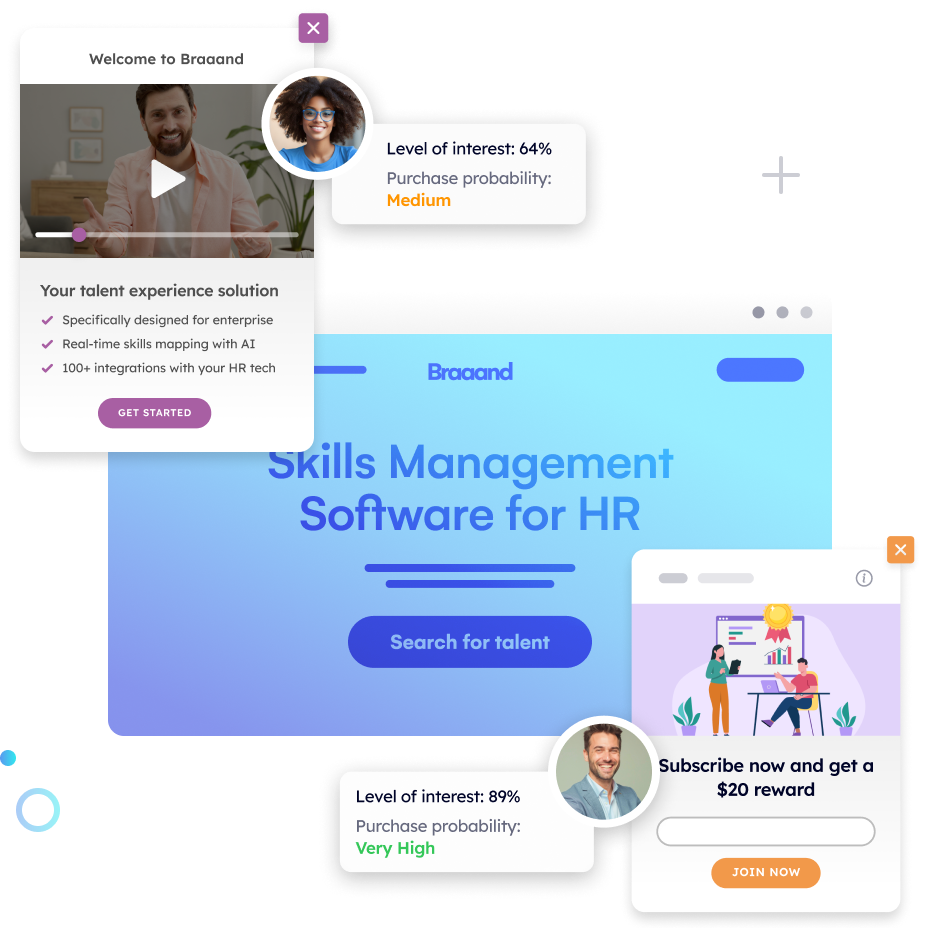

How Pathmonk decides which content to show each visitor in real time

Pathmonk is an AI-powered website conversion platform built around the third-generation pattern: cookieless identity, autonomous intent classification, self-optimizing variant selection. The mechanism maps onto the Intent-Content Match framework and is designed to work on the same traffic cohort-based tools lose to consent rejection and cookie deprecation.

- Identity runs on a cookieless fingerprint-based deterministic ID. Pathmonk’s fingerprint technology recognizes returning visitors across sessions and devices without third-party cookies and without storing personal data, which keeps the product GDPR-compliant without a consent banner and keeps the identity layer stable regardless of how browsers handle third-party cookies. That layer extends to B2B company detection for account-aware use cases without breaking the base decisioning flow.

- Stage classification runs continuously on every visitor. The AI reads 200-plus behavioral signals per session and maps each visitor to one of three journey stages: awareness, consideration, or decision. The classifier does not rely on hand-defined personas; it learns stage patterns from actual conversion behavior on the site it runs on. The site owner can guide the system further by training Pathmonk’s CRO agent with labeled pages, goal weighting, and filters.

- Variant selection happens through microexperiences. A microexperience is a contextual piece of supporting content (social proof panel, case-study prompt, product-fit quiz, price-match badge, demo-booking flow) that sits alongside the existing page. The AI picks the microexperience most likely to convert the current visitor at their current stage. The primary conversion goal and CTA stay constant. What the AI changes is the supporting content surrounding the CTA, which is the operational difference between intent-matched personalization and the generic segment-based treatment that leaves visitors not ready to convert without a relevant next step.

- Optimization is autonomous but human-gated on scale. New customers start on a 50/50 A/B split against a baseline. The customer reviews the test data and, once confident, manually scales up to 95% traffic exposure on the winning configuration. A 5% control group is always preserved for ongoing measurement. The AI continues testing variant combinations and incorporating new conversion data, which is why results tend to improve across the first quarter rather than peak on day one.

At 10,000-plus pageviews per month (the minimum for the model to learn faster than defaults), this architecture typically produces double-digit conversion lift within weeks. On higher-traffic sites, triple-digit lifts are common on decision-stage pages. The AI-meets-CRO logic is the same logic that makes AI-powered personalization work downstream on LTV: the better the in-session decision, the more qualified the resulting conversion.
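The 50/50 test phase and the 95/5 scaled phase described above can be sketched with deterministic hash bucketing. This is one common way to implement such a split, shown under assumptions; it is not a description of Pathmonk's internals, and the phase names and function are hypothetical.

```python
import hashlib

# Percentage of traffic exposed to the AI-selected experience in each phase.
EXPOSURE_PCT = {"test": 50, "scaled": 95}


def assign_group(visitor_id: str, phase: str) -> str:
    """Map a visitor to "treated" or "control" for the given phase.

    The bucket depends only on the visitor ID, so a visitor keeps the same
    bucket across sessions and across phases.
    """
    bucket = int(hashlib.sha256(visitor_id.encode("utf-8")).hexdigest(), 16) % 100
    return "treated" if bucket < EXPOSURE_PCT[phase] else "control"


ids = [f"visitor-{i}" for i in range(5000)]
scaled_share = sum(assign_group(v, "scaled") == "treated" for v in ids) / len(ids)
print(f"treated share after scale-up: {scaled_share:.1%}")
```

Because buckets are fixed per visitor, everyone treated during the test phase stays treated after scale-up, and the remaining 5% control group is drawn entirely from visitors who were already in control.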

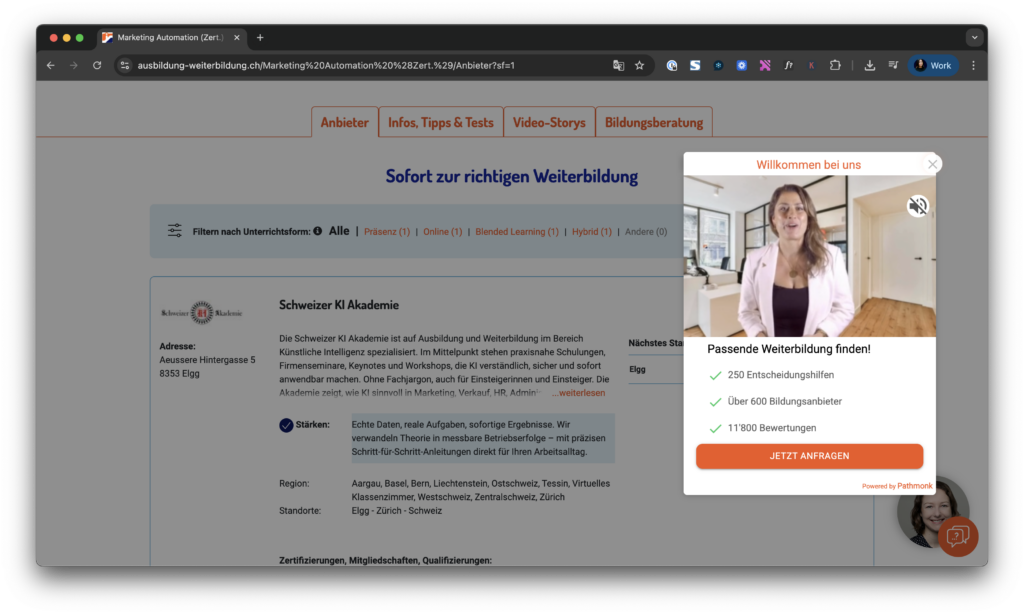

How Ausbildung-Weiterbildung lifted leads by matching content to visitor intent

Ausbildung-Weiterbildung is a Switzerland-based career and vocational training platform operating in a high-competition vertical with large visitor volume spanning the full range of educational decision-making, from early research to direct enrollment inquiries.

Diagnosis:

- Healthy traffic volume with genuine interest signals across the full intent spectrum

- Lead generation running on standard content and form gating, producing conversion rates that lagged the volume of engaged sessions

- Visitors arriving from active decision-stage search queries receiving the same experience as early-stage browsers

- No differentiation between a first-time visitor scanning program lists and a returning visitor on their third visit to a specific enrollment page

The bottleneck was not traffic or forms; it was serving one content track to three different intent states.

Pathmonk classified visitor intent in real time and served decision-stage visitors enrollment prompts, qualification flows, and targeted case studies, while continuing to educate awareness-stage visitors with program overviews and orientation content. Existing site assets were repurposed into the microexperience variant library with no new content production required.

Results:

- +87% leads in the first week versus the pre-Pathmonk baseline

- Higher-quality enrollment inquiries with no additional paid media spend

- Durable lift across subsequent weeks as the classifier accumulated site-specific conversion data

The win came from separating visitors by stage and letting the AI pick the matching content variant at session time.

FAQs on AI-powered content personalization

How is AI content decisioning different from a recommendation engine?

A recommendation engine surfaces items from a catalog using similarity or co-purchase signals. It answers what a visitor should look at next. A content decisioning engine picks between pre-built content variants (copy, offer, proof, CTA framing) based on predicted intent. It answers which version of a given page this visitor should see. The two run on different signals and optimize for different outcomes.

Does AI content personalization still work without third-party cookies?

Yes, if the system uses a cookieless identity layer. Fingerprint-based deterministic identification captures session continuity without third-party cookies or personal data. Tools that still require third-party cookies for identity lose 40% or more of European traffic under current consent rates, plus most of Safari and Firefox globally.

How much traffic do you need for AI content personalization to work?

The operational minimum sits around 10,000 pageviews per month per domain. Below that, the classifier does not accumulate conversion signal fast enough to beat a well-designed default. Above it, lift grows with volume because the model has more examples per variant.

What’s the difference between segment-based and intent-based personalization?

Segment-based personalization assigns visitors to pre-defined buckets and serves fixed content per bucket. Intent-based personalization classifies each visitor’s current journey stage from live behavior and selects a variant based on that stage. Segments are static and human-written; intent is dynamic and learned from conversion data. This is why hyper-personalization is converging on intent rather than wider segmentation.

Can generative AI replace a content decisioning engine?

No. Generative AI writes new content from prompts. A decisioning engine selects which content to serve. Using generative AI to produce the variants a decisioning engine then chooses from is reasonable. Using it without a decisioning layer gives you new content served uniformly.

How do you measure whether the AI is making good decisions?

Four metrics: stage-segmented conversion rate, time-to-classification, false-variant incidence, and lift durability. A 5% persistent control group is required to measure any of them rigorously. Aggregate lift alone misleads because it confounds classifier accuracy with variant quality.

What happens when the AI picks the wrong content variant?

Conversion on that visitor drops below the default baseline, measurable through the control group. Good systems minimize false-variant incidence by holding more signal before committing to a stage and by weighting selection toward variants with the strongest conversion history for the current visitor state. Best practices for creating high-converting microexperiences reduce that rate further.
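A minimal sketch of "holding more signal before committing": the engine serves the default until it has seen enough signals and the classifier's top stage is confident enough. The thresholds and the `stage_probs` input are hypothetical; a real system would tune them against the control group.

```python
from typing import Dict, Optional


def committed_stage(stage_probs: Dict[str, float], signals_seen: int,
                    min_signals: int = 5, min_confidence: float = 0.6) -> Optional[str]:
    """Return a stage only when evidence is sufficient, else None (serve default)."""
    if signals_seen < min_signals:
        return None
    stage, prob = max(stage_probs.items(), key=lambda kv: kv[1])
    return stage if prob >= min_confidence else None


print(committed_stage({"awareness": 0.2, "consideration": 0.1, "decision": 0.7}, signals_seen=8))
```

Raising either threshold lowers false-variant incidence at the cost of a longer time to classification, which is why the two metrics are tracked together.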

Does AI content personalization work for B2B with long sales cycles?

Yes. The conversion event is usually a demo request or qualified form submission, and the B2B buying journey spans multiple sessions. Cookieless identity is especially valuable for B2B because it preserves session-to-session recognition where third-party cookies rarely reach. Techniques like predictive lead scoring, predictive analytics, and AI for lead generation sit downstream of the in-session decisioning layer.

How is this different from using micro-moments on a site?

Micro-moments are the behavioral anchors a classifier reads. AI content decisioning is the loop that turns those anchors into a served variant. Micro-moments without a decisioning layer are insight without action. Decisioning without micro-moments is action without signal.

Should the AI change the CTA for different visitors?

No, not for the primary conversion goal. Changing the CTA fragments the conversion event and breaks downstream measurement. The AI should change the supporting content around the CTA while keeping the CTA and conversion action consistent. This is the operational rule behind stable customer journey orchestration.

Key takeaways

- AI content decisioning selects between pre-built content variants based on predicted buying-journey stage. It is not the same thing as generative AI or recommendation engines.

- The Intent-Content Match framework reduces the decision to three steps: read the signals, classify the stage, match a variant with a conversion track record for that stage.

- Three generations of personalization exist. Most tools sold as AI are still rule-based or cohort-based. Real-time intent-based systems are the only architecture that adapts continuously without human rule writing.

- Cookieless infrastructure is a gating criterion. Tools that depend on third-party cookies for identity work on less than half of addressable traffic in privacy-regulated markets.

- The CTA and conversion goal should stay constant. What the AI changes is the supporting content around the goal.

- Rigorous measurement requires a preserved 5% control group and stage-segmented conversion metrics.

- Pathmonk’s architecture (cookieless fingerprint + real-time stage classifier + microexperience variant library + human-gated scale-up) is a working instance of the third-generation pattern. Results like Ausbildung-Weiterbildung’s +87% leads in the first week come from content-to-intent matching on existing traffic.